You know the feeling. You’ve spent months building a revolutionary AI tool. You finally get to the demo stage, and it is absolutely flawless. The prompts land perfectly, the system churns out highly accurate results in a matter of seconds, and everyone in the room believes they are looking at the next unicorn startup.

But then, reality hits.

You push the tool to production, and suddenly, everything grinds to a halt. Users are churning, the system is hallucinating, and you are sitting at your desk wondering if it makes sense to continue working on it at all. If you are an AI startup founder, a developer, or a business owner dealing with a failing AI deployment in early 2026, take a deep breath.

You are not alone, and more importantly, your core technology probably isn't the problem.

Recent industry analyses, including a 2026 report from The Hacker News, reveal a harsh truth about the modern artificial intelligence landscape: most AI initiatives do not fail because of bad code. They stall because the polished perfection of a demo simply cannot survive contact with real-world operations.

Let’s break down exactly why this happens, and look at how you can pivot your AI tool away from failure and toward sustainable, scalable success.

The “Honeymoon Phase”: Why AI Demos Lie to Us

The fastest way to fall in love with an AI product is to watch a controlled demonstration. But you have to remember that demos are essentially magic tricks. They are carefully constructed to highlight the highest potential of your software while completely hiding the friction.

In a demo environment, you are dealing with a perfect world. The data is clean and beautifully organized. The inputs are predictable. The use cases are highly specific and well-understood by the system.

But when your AI tool is released into the wild, it is immediately thrown into chaos. Production environments do not look like demos. In the real world, users make typos, data is a mess, and systems do not always want to communicate with one another. This sudden transition from a controlled lab to a chaotic reality is exactly where the initial burst of enthusiasm dies.

What Actually Breaks When Your AI Hits the Real World

To fix your AI startup, you first have to understand what is actually breaking under the hood once the demo ends. Here are the four hidden traps that derail most AI deployments.

The Illusion of Clean Data

In a lab setting, your AI model is fed high-quality, structured information. However, in enterprise and business environments, data is often spread across dozens of different tools in entirely different formats. Your model might be brilliant, but if it is fed noisy, incomplete, or disorganized data by the end-user, it is going to struggle to provide a coherent answer.

The Hidden Trap of Latency (Slow Response Times)

Latency simply means the time it takes for your AI to respond to a user's request. In isolation, your model might feel lightning-fast. But when you embed that same AI into a multi-step workflow running at a massive scale, it can introduce frustrating delays. In 2026, users have zero patience. If your AI makes them wait, they will simply go back to doing the task manually.

The Nightmare of Edge Cases

An “edge case” is an unusual scenario or an unpredictable user behavior that you didn't plan for. In a demo, you only show the “happy path,” the ideal scenario. But the real world is full of exceptions. If your AI tool handles the most common 80% of questions perfectly but fails on the remaining 20% of unusual requests, users will quickly lose trust in it.

The Integration Wall

Your AI tool could be the smartest platform on the planet, but if it operates in a silo, its impact is limited. Operational work requires coordinating across multiple systems like CRMs, email clients, and project management dashboards. If your tool cannot deeply integrate into the workflows your customers already use, it becomes just another annoying tab they have to keep open.

The Silent Killer: Governance and Red Tape

Beyond the technical headaches, there is a massive business hurdle that causes AI startups to fail: governance.

Governance is just a corporate word for the rules, policies, and privacy controls a company puts in place to protect itself. With generative AI now everywhere in 2026, organizations are terrified of data privacy leaks, compliance violations, and misuse.

Many founders discover that while it is incredibly easy to build an AI tool, it is incredibly difficult to prove to a major corporation that your tool is safe to use. Without clear security guardrails, your promising startup will get endlessly stuck in legal review cycles and will never scale.

Your Step-By-Step Guide on How Not to Fail

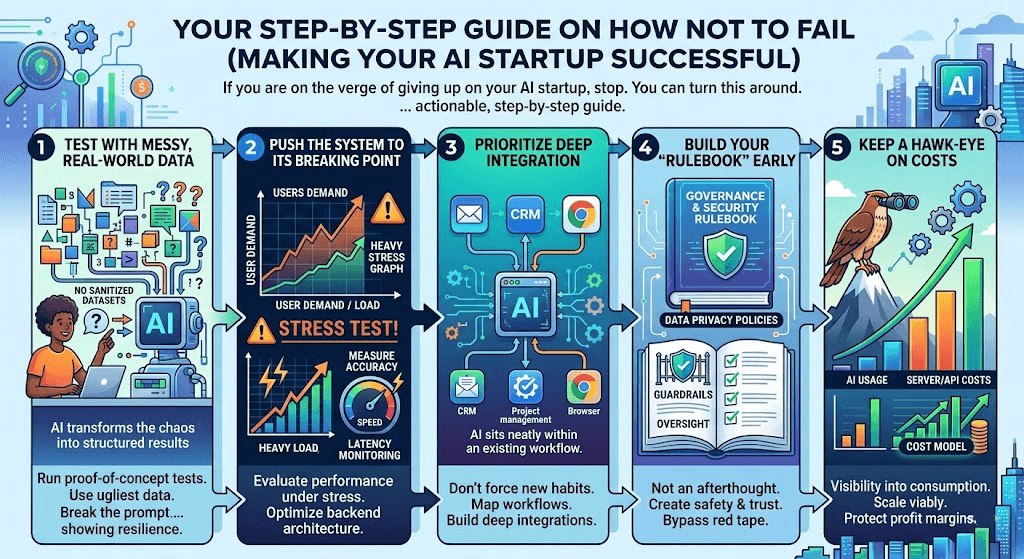

If you are on the verge of giving up on your AI startup, stop. You can turn this around. Successful founders who move beyond the demo phase and build profitable AI companies share a few distinct habits.

Here is your actionable, step-by-step guide to making your AI startup successful.

Step 1: Test with Messy, Real-World Data

Stop testing your tool on sanitized datasets. Before you commit to a final build, run proof-of-concept tests using the ugliest, most disorganized data you can find. Ask your beta testers to try to “break” the prompt. If you build your AI to survive messy inputs, it has a far better chance of holding up in production.
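If you want a quick way to get started, here is a minimal Python sketch of the idea: take a clean test prompt and generate uglier variants of it (shouting caps, stray whitespace, typos, a cut-off message, an empty input). The function names `add_typos` and `messy_variants` are made up for this illustration, and the list of perturbations is an assumption about what "messy" looks like for your users, so swap in whatever your real data actually contains.

```python
import random


def add_typos(text: str, rng: random.Random, rate: float = 0.1) -> str:
    """Swap neighbouring letters at roughly `rate` of positions to imitate typos."""
    chars = list(text)
    i = 0
    while i < len(chars) - 1:
        if chars[i].isalpha() and chars[i + 1].isalpha() and rng.random() < rate:
            chars[i], chars[i + 1] = chars[i + 1], chars[i]
            i += 2  # skip past the swapped pair so it is not swapped back
        else:
            i += 1
    return "".join(chars)


def messy_variants(text: str, seed: int = 0) -> list[str]:
    """Return a few deliberately ugly versions of one clean test input."""
    rng = random.Random(seed)  # fixed seed keeps test runs repeatable
    return [
        text.upper(),                               # all caps
        "  " + text.replace(" ", "   ") + "\n\n",   # stray whitespace
        add_typos(text, rng),                       # typing errors
        text[: max(1, len(text) // 2)],             # message cut off halfway
        "",                                         # empty input
    ]


if __name__ == "__main__":
    for variant in messy_variants("refund my order please"):
        print(repr(variant))
```

Feed each variant to your tool and check that it either answers sensibly or fails gracefully. Real user data will always be stranger than anything you generate, so treat this as a starting point rather than a replacement for beta testers.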

Step 2: Push the System to its Breaking Point

You need to evaluate how your AI performs under heavy stress. Measure its accuracy when multiple users are hitting it at once. Monitor the latency (response time) closely. If the system slows down under a heavy load, you need to optimize your backend architecture before you start selling to enterprise clients.
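To make this concrete, here is a rough Python sketch of a small load test that fires requests in parallel and reports latency percentiles. Everything in it is a stand-in: `fake_model_call` simulates your model with a short sleep, and `load_test`, `timed_call`, and `percentile` are hypothetical helper names, not part of any particular library. Replace the fake call with a real request to your own system.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def timed_call(fn, arg):
    """Run fn(arg) and return how long it took, in seconds."""
    start = time.perf_counter()
    fn(arg)
    return time.perf_counter() - start


def load_test(fn, inputs, concurrency):
    """Call fn on every input using `concurrency` worker threads."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(lambda x: timed_call(fn, x), inputs))
    return {
        "p50": percentile(latencies, 50),
        "p95": percentile(latencies, 95),
        "max": max(latencies),
    }


def fake_model_call(prompt):
    """Placeholder for a real model request (assumption: about 10 ms per call)."""
    time.sleep(0.01)
    return prompt[::-1]


if __name__ == "__main__":
    prompts = [f"question {n}" for n in range(20)]
    for workers in (1, 5, 10):
        print(workers, load_test(fake_model_call, prompts, concurrency=workers))
```

Watch the p95 and max numbers rather than the average. A system whose median looks fine can still leave one user in twenty waiting far too long, and those are the users who go back to doing the task manually.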

Step 3: Prioritize Deep Integration

Stop trying to force users to change their habits to use your software. Instead, map out exactly how your target audience works. What software do they use every day? Build deep, seamless integrations into those existing platforms. Your AI should feel like a natural extension of their current workflow, not an interruption.

Step 4: Build Your “Rulebook” Early

Do not treat security and governance as an afterthought. From day one, build clear data privacy policies, user guardrails, and oversight mechanisms into your software. When you pitch your product, lead with how safe and compliant it is. This builds trust with corporate buyers and helps you get through legal review faster.
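One example of a simple guardrail is masking obvious personal data before a prompt ever leaves your system. The Python sketch below shows the idea. The `redact` function and both regular expressions are illustrative assumptions: they catch common email and phone formats only, and they are nowhere near a complete privacy solution.

```python
import re

# Deliberately simple patterns for illustration; real PII detection needs more.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Mask emails and phone-like numbers; return the text and a count of each."""
    counts = {}
    text, counts["email"] = EMAIL_RE.subn("[EMAIL]", text)
    text, counts["phone"] = PHONE_RE.subn("[PHONE]", text)
    return text, counts


if __name__ == "__main__":
    prompt = "Contact jane.doe@example.com or +1 (555) 123-4567 today"
    safe_prompt, found = redact(prompt)
    print(safe_prompt)
    print(found)
```

Logging the counts gives you a basic audit trail that a legal team can read: how often sensitive data showed up and that it was masked before the model saw it.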

Step 5: Keep a Hawk-Eye on Costs

AI usage can scale incredibly fast, and so can your server and API costs. If you do not have clear visibility into your computational consumption, your profit margins will vanish overnight. Build a clear cost model to ensure that even at a massive scale, your startup remains financially viable.
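A cost model does not have to be complicated. Here is a back-of-the-envelope version in Python, assuming your provider bills per 1,000 input and output tokens. The function names and every number in the example are hypothetical, so plug in your own traffic, your own token counts, and your provider's actual prices.

```python
def monthly_api_cost(requests_per_day, input_tokens, output_tokens,
                     price_in_per_1k, price_out_per_1k, days=30):
    """Estimate monthly model spend from average per-request token usage."""
    per_request = ((input_tokens / 1000) * price_in_per_1k
                   + (output_tokens / 1000) * price_out_per_1k)
    return per_request * requests_per_day * days


def gross_margin(monthly_revenue, monthly_cost):
    """Fraction of revenue left after model costs."""
    if monthly_revenue <= 0:
        raise ValueError("monthly_revenue must be positive")
    return (monthly_revenue - monthly_cost) / monthly_revenue


if __name__ == "__main__":
    # Hypothetical figures: compare today's traffic with 100x growth.
    for requests in (1_000, 100_000):
        cost = monthly_api_cost(requests, input_tokens=500, output_tokens=200,
                                price_in_per_1k=0.001, price_out_per_1k=0.002)
        print(f"{requests} requests/day -> about ${cost:,.2f} per month")
```

Run it with your current numbers and again with the traffic you hope to have in a year. If the margin turns negative at scale, you want to know that before your customers arrive, not after.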

Conclusion: Turning Potential into Production

Building an AI startup in 2026 is not for the faint of heart. It is entirely normal to hit a wall and feel like your deployment is going to fail. But remember this: AI has the genuine potential to change how businesses operate.

Success no longer depends on having the flashiest demo or the most sophisticated underlying model. True success comes down to how well your tool fits into real, messy workflows, how smoothly it integrates with existing systems, and how safely it operates within corporate security frameworks.

By stepping away from the illusion of the perfect demo and embracing the friction of the real world, you can transform your struggling AI tool into a lasting, impactful, and wildly successful business. Do not quit just yet. Your hardest challenges are simply a roadmap to your best product. Check out our Blog for more helpful guides like this one and resources on how to keep your data from being stolen.