You described an app in a chat window. The AI wrote the code. You clicked deploy. It works. Users are signing up. Revenue is happening.

Here is the part nobody mentioned during that build session: the AI that wrote your code optimized for one thing. Making it run. Not making it safe.

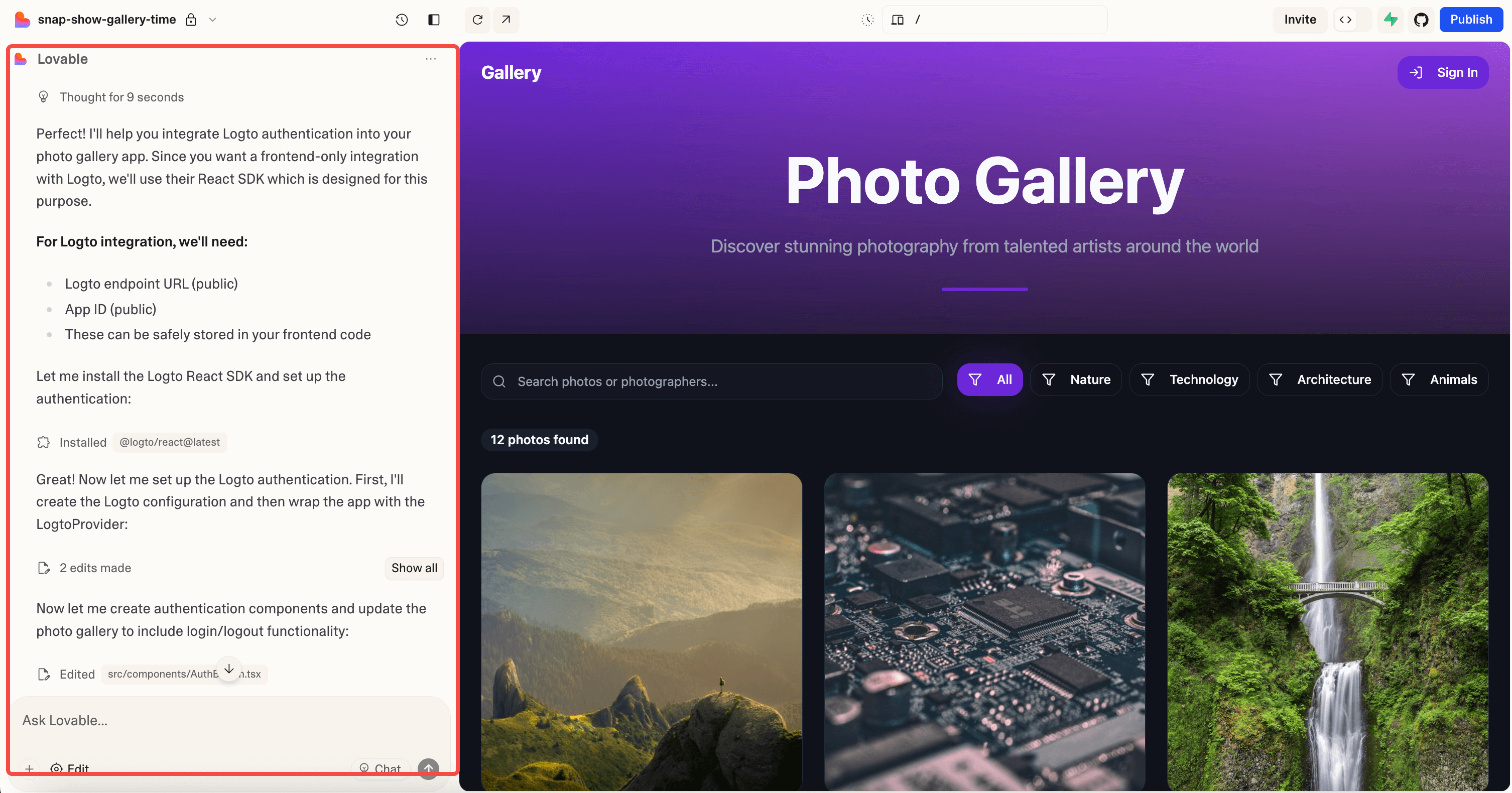

Vibe coding means building applications through AI chat interfaces like Cursor, Lovable, or Bolt.new without writing code yourself. It is now how a significant chunk of new software gets made. The speed is real. So are the security holes.

The Specific Problems AI Code Carries

AI-generated code has patterns, and not all of them are good. Research on codebases built with LLM tools shows consistent categories of failure.

SQL injection. AI code generators frequently build database queries by concatenating user input directly into SQL strings. The generated code works perfectly in testing. It also lets anyone with a browser's developer tools rewrite your database. Studies of AI-assisted projects found SQL injection present in roughly one out of three codebases.
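Here is roughly what that looks like, as a minimal sketch using Python's built-in `sqlite3` module. The `users` table and both function names are made up for illustration; your stack will differ, but the pattern is the same. The first function concatenates input into the query. The second passes it as a parameter.

```python
import sqlite3


def find_user_unsafe(conn, email):
    # Vulnerable: user input is concatenated straight into the SQL string.
    query = "SELECT id, email FROM users WHERE email = '" + email + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, email):
    # Parameterized: the value travels separately from the SQL text,
    # so it can never be interpreted as SQL.
    query = "SELECT id, email FROM users WHERE email = ?"
    return conn.execute(query, (email,)).fetchall()


# Hypothetical demo data in an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT)")
conn.executemany(
    "INSERT INTO users (email) VALUES (?)",
    [("a@example.com",), ("b@example.com",)],
)

# A classic injection payload typed into a login or search field.
payload = "nobody@example.com' OR '1'='1"

unsafe_rows = find_user_unsafe(conn, payload)  # matches every user
safe_rows = find_user_safe(conn, payload)      # matches nobody
```

With the payload above, the unsafe version returns every row in the table, while the parameterized version returns nothing, because no user has that literal string as an email.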

Hardcoded secrets. When you tell an AI "connect to my database," it often places the connection string, complete with credentials, directly in the source file. Those credentials end up in version control, in deployment logs, and eventually on the public internet.
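The usual fix is to read the secret from the environment at startup instead of writing it into the file. A minimal sketch, assuming your host exposes the connection string as an environment variable named `DATABASE_URL` (that name is an assumption; use whatever your platform provides):

```python
import os


def get_database_url(env=os.environ):
    # DATABASE_URL is an assumed variable name, set in your hosting
    # dashboard or secret manager, never committed to the repository.
    url = env.get("DATABASE_URL")
    if not url:
        # Fail loudly at startup rather than falling back to a default
        # credential baked into the source.
        raise RuntimeError("DATABASE_URL is not set")
    return url
```

The `env` parameter exists only so the function can be exercised with a plain dictionary. In the app you would call `get_database_url()` with no arguments.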

Broken authentication. AI can scaffold a login page and a session system in seconds. But the session tokens it generates may lack expiration. The password reset flow might not verify identity. The admin route might check a role on the frontend but skip the check on the API. The auth works. The auth is also full of gaps.
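Two of those gaps, tokens that never expire and role checks that only exist in the frontend, can be sketched in a few lines. This is an illustration using only Python's standard library, not a replacement for a maintained auth library. The token format, the function names, and the numeric status codes are all assumptions made for the example, and it assumes user IDs and roles never contain a `|` character.

```python
import hashlib
import hmac
import time


def issue_token(user_id, role, key, ttl_seconds=3600, now=None):
    # Every token carries an expiry timestamp, covered by the signature.
    issued_at = int(time.time() if now is None else now)
    payload = f"{user_id}|{role}|{issued_at + ttl_seconds}"
    sig = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{sig}"


def verify_token(token, key, now=None):
    try:
        user_id, role, expires, sig = token.split("|")
    except ValueError:
        return None  # malformed token
    payload = f"{user_id}|{role}|{expires}"
    expected = hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison; reject anything that was tampered with.
    if not hmac.compare_digest(sig.encode(), expected.encode()):
        return None
    current = time.time() if now is None else now
    if int(expires) < current:
        return None  # expired
    return {"user_id": user_id, "role": role}


def admin_endpoint(token, key, now=None):
    # The role check happens on the server, on every request,
    # regardless of what the frontend chose to show or hide.
    session = verify_token(token, key, now)
    if session is None:
        return 401
    if session["role"] != "admin":
        return 403
    return 200
```

An ordinary user's token gets a 403 from `admin_endpoint`, an expired or edited token gets a 401, and only a valid, unexpired admin token gets through. The signing key should come from your secret store, not from the source file.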

No input validation. AI tools rarely add server-side validation unless you specifically ask for it. File uploads accept anything. Form fields pass directly to the backend. API endpoints trust whatever the client sends. The AI built what you described. You didn't describe the defenses.
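Server-side validation does not have to be elaborate. A rough sketch of the idea, with hypothetical field names, limits, and allowed file types that you would replace with your own rules:

```python
import re

# Assumed limits for illustration only.
ALLOWED_UPLOAD_TYPES = {"image/png", "image/jpeg"}
MAX_UPLOAD_BYTES = 5 * 1024 * 1024
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def validate_signup(data):
    """Return the names of fields that fail validation (empty list = ok)."""
    errors = []
    email = data.get("email")
    if not isinstance(email, str) or len(email) > 254 or not EMAIL_RE.fullmatch(email):
        errors.append("email")
    age = data.get("age")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if not isinstance(age, int) or isinstance(age, bool) or not 13 <= age <= 120:
        errors.append("age")
    return errors


def validate_upload(content_type, size_bytes):
    # The declared content type is client-controlled and can be spoofed;
    # this is a first filter, not proof of what the file contains.
    return content_type in ALLOWED_UPLOAD_TYPES and 0 < size_bytes <= MAX_UPLOAD_BYTES
```

The point is where the check lives: in the request handler on the server, before the data reaches the database or the disk, no matter what the form in the browser already enforced.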

Missing access controls. This one is the quietest and the most dangerous. Your app has user A and user B. Can user A access user B's data by changing an ID in the URL? AI-generated code almost never checks. The feature works for the happy path. The abuse path is wide open.
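The missing check is usually one comparison. A minimal sketch, using an in-memory dictionary as a stand-in for a database table (the `INVOICES` data and both function names are invented for the example):

```python
# Hypothetical data: two invoices owned by two different users.
INVOICES = {
    1: {"owner_id": "user_a", "total": 120},
    2: {"owner_id": "user_b", "total": 75},
}


def get_invoice_unsafe(invoice_id):
    # What generated code often does: look up by ID and return it.
    return INVOICES.get(invoice_id)


def get_invoice(current_user_id, invoice_id):
    invoice = INVOICES.get(invoice_id)
    # Same answer for "does not exist" and "not yours", so an attacker
    # cannot use the response to discover which IDs are real.
    if invoice is None or invoice["owner_id"] != current_user_id:
        return None
    return invoice
```

With the unsafe version, user A changes `1` to `2` in the URL and reads user B's invoice. With the ownership check, the same request returns nothing. `current_user_id` must come from the verified session on the server, never from a value the client sends.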

Why This Is Worse Than It Sounds

Traditional developers have muscle memory around these issues. Years of code review, security training, and getting burned by production incidents build instincts. You learn to parameterize queries because you've seen what happens when you don't.

Vibe coders don't have that muscle memory. That's not a criticism. It's a structural gap. When you can't read the code your tool produced, you can't spot the patterns that experienced developers catch on sight. The AI won't flag its own mistakes. It generated working code. Mission accomplished, as far as it's concerned.

This creates a specific blind spot: applications that function correctly for every normal user but collapse under any intentional pressure. A motivated attacker doesn't use your app the way your customers do. They probe the edges. And AI-generated code has a lot of edges.

The scale matters too. Vibe-coded applications aren't hobby projects anymore. They handle payments, store personal data, and manage business operations. The stakes match any traditionally built SaaS, but the security posture doesn't.

How to Actually Protect What You Built

You don't need to become a security expert. You need to add the right checks before you ship.

- Assume every endpoint is vulnerable until tested. This is the mental shift. The default state of AI-generated code is "unverified." Treat it that way. Every API route, every form handler, every file upload needs to be probed by something that thinks like an attacker.

- Run automated security scanning against your live application. Not your code. Your running app. A DAST (dynamic application security testing) scanner that can handle authentication and modern JavaScript frameworks will find the SQL injections, the broken access controls, and the exposed admin routes that your AI tool created without telling you. Axeploit does this with zero configuration. Submit your URL, and AI agents test your application across 7,500+ checks the way an attacker would. No security expertise required.

- Check your dependencies. Your AI pulled in packages to make things work. Some of those packages have known vulnerabilities. Tools like Snyk scan your dependency tree and tell you exactly what needs updating.

- Scan for leaked secrets. If your AI put a database password in your source code and you pushed it to GitHub, bots will find it before you do. GitGuardian catches these in real time.

- Add security to your shipping loop, not after it. The mistake is treating security as a launch task. It's a shipping task. Every time you deploy, the scan should run. Axeploit's continuous monitoring plans exist for this reason: your app changes every week, and so does your attack surface.
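To make the leaked-secrets item in the list above less abstract, here is a toy version of what a secret scanner looks for. It is a sketch with three illustrative patterns chosen for this example, nowhere near the coverage of a dedicated tool, and the function name is made up.

```python
import re

# A few illustrative patterns; real scanners ship hundreds.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "url_with_credentials": re.compile(r"\b[a-z][a-z0-9+]*://[^/\s:@]+:[^/\s:@]+@"),
    "password_assignment": re.compile(
        r"(?i)\b(password|secret|api_key)\s*=\s*['\"][^'\"]{4,}['\"]"
    ),
}


def find_secrets(text):
    """Return (line_number, pattern_name) for each suspicious line."""
    findings = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, name))
    return findings
```

Running something like this over your source files before each push catches the obvious cases. If it finds a credential that has already been pushed, treat that credential as compromised and rotate it, since deleting the line does not remove it from version history.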

The Bottom Line

Vibe coding is not going away. It shouldn't. Building software by describing what you want is a genuine leap forward.

But the security gap is real and predictable. AI code generators produce the same categories of vulnerabilities over and over. You know exactly where to look. The tooling to check is faster and cheaper than it has ever been.

Don't ship and hope. Ship and verify.

Start your first security scan: https://panel.axeploit.com/signup