If you are a CTO, a DevSecOps Engineer, or a developer navigating the software landscape in early 2026, you already know that the way we build applications has fundamentally transformed. We have officially left the era of manual boilerplate behind and entered the age of the “vibe coder.”

Today, you don’t spend hours debugging syntax errors or digging through documentation. You provide a prompt, you orchestrate the architecture, you “vibe” with your AI assistant, and complex, functional microservices are spun up in minutes. The sheer velocity of AI code generation has enabled startups and enterprise teams to ship features at a pace that was unimaginable just a few years ago.

But this unprecedented speed carries a hidden, often disastrous cost. When an AI agent rapidly writes thousands of lines of your backend logic, a critical question emerges in the boardroom: When the AI writes a critical bug, who is responsible for the fallout?

Welcome to the harsh reality of liability in AI code. Let’s explore why blindly trusting AI-generated outputs is a massive risk, how attackers are actively exploiting predictable machine-written patterns, and why modern automated scanning is the only way to protect your infrastructure without killing your development speed.

The Illusion of Perfection and Vibe Coding Security

To understand the threat, we have to look at why developers trust AI so implicitly. Tools like GitHub Copilot, Cursor, and emerging AI agents are incredibly sophisticated. When they generate code, they don’t just output raw logic; they output beautifully formatted, perfectly indented code complete with helpful comments and type hints.

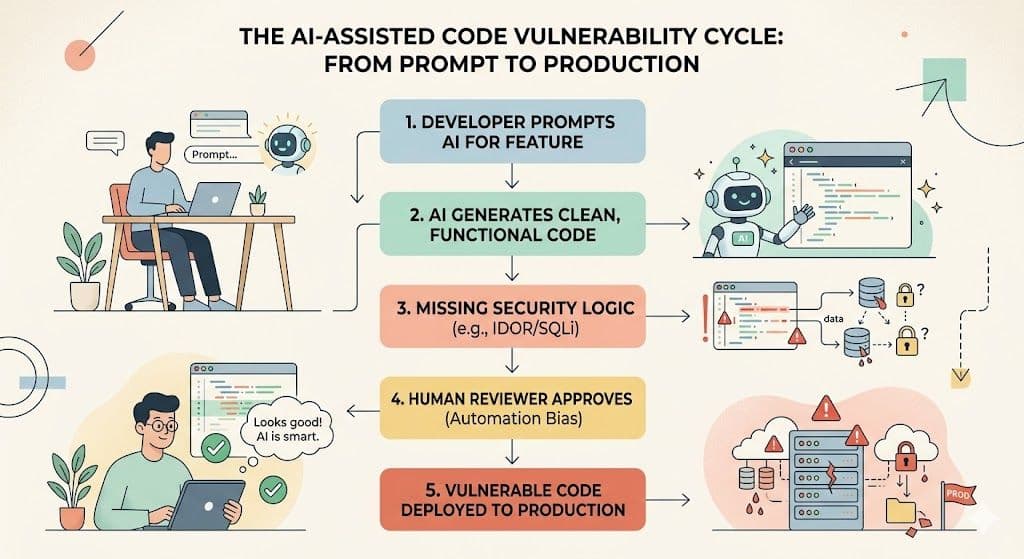

Because the code looks clean and compiles flawlessly on the first try, a psychological phenomenon known as “automation bias” sets in. Developers naturally assume that because the machine got the complex syntax right, it must have also gotten the security architecture right.

Unfortunately, Large Language Models (LLMs) are essentially advanced prediction engines. They are trained to predict the most likely next token based on their training data, not to reason about your threat model. They are not security engineers. Consequently, they frequently prioritize functional completion over security, leading to severe blind spots.

Hallucinating IDOR and SQL Injections

The most dangerous vulnerabilities in AI-generated code aren't obvious syntax errors; they are logic flaws that quietly slip past traditional security reviews.

Take IDOR (Insecure Direct Object Reference), for example. If you prompt an AI to “write an endpoint to fetch a user's invoice,” the AI will likely write a perfectly functional database query that retrieves an invoice based on an ID number in the URL (e.g., /api/invoices/1042).

What the AI often leaves out is the authorization check. It never verifies whether the authenticated user actually owns invoice 1042. Because the code compiles and fetches the invoice during local testing, the developer pushes it to production. Suddenly, a malicious actor can simply change the ID in the URL to 1043 and download another customer's highly sensitive financial data.
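A minimal Python sketch of the difference, assuming a hypothetical in-memory `INVOICES` store in place of a real database and hypothetical function names. The insecure version looks up the invoice by ID alone; the fixed version also compares the invoice's owner with the authenticated caller.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Invoice:
    invoice_id: int
    owner_id: int
    amount_cents: int


# Hypothetical in-memory store standing in for a real database table.
INVOICES = {
    1042: Invoice(1042, owner_id=7, amount_cents=12_500),
    1043: Invoice(1043, owner_id=8, amount_cents=99_000),
}


class NotFound(Exception):
    """Raised for both missing and non-owned invoices."""


def get_invoice_insecure(invoice_id: int) -> Invoice:
    # The IDOR pattern: any caller who knows or guesses an ID gets the record.
    return INVOICES[invoice_id]


def get_invoice(invoice_id: int, current_user_id: int) -> Invoice:
    invoice = INVOICES.get(invoice_id)
    # Authorization check: the caller must own the invoice they request.
    if invoice is None or invoice.owner_id != current_user_id:
        raise NotFound(f"invoice {invoice_id} not found")
    return invoice
```

Returning the same error for "does not exist" and "not yours" is a design choice in this sketch: it avoids confirming to an attacker which invoice IDs are valid.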

Similarly, AI models are prone to reproducing classic flaws like SQL Injection (SQLi). If an AI isn't explicitly prompted to use parameterized queries, it might concatenate raw user input directly into a backend database query. The code will look pristine to a human reviewer rushing to approve a pull request, but it essentially hands the keys to your database to anyone with a web browser.
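The sketch below illustrates the flaw and the usual fix using Python's standard `sqlite3` module. The `users` table, the function names, and the payload are hypothetical and chosen only for illustration.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    # Throwaway in-memory database with a hypothetical users table.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def find_users_insecure(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is spliced into the SQL text itself.
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return [row[0] for row in conn.execute(query)]


def find_users(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized: the driver binds the value as data, never as SQL.
    query = "SELECT name FROM users WHERE name = ?"
    return [row[0] for row in conn.execute(query, (name,))]


conn = make_db()
payload = "x' OR '1'='1"
leaked = find_users_insecure(conn, payload)  # the OR clause matches every row
safe = find_users(conn, payload)             # no user has this literal name
```

With the payload above, the insecure function returns every user in the table, while the parameterized one returns an empty list.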

Liability in AI Code: Who Owns the Breach?

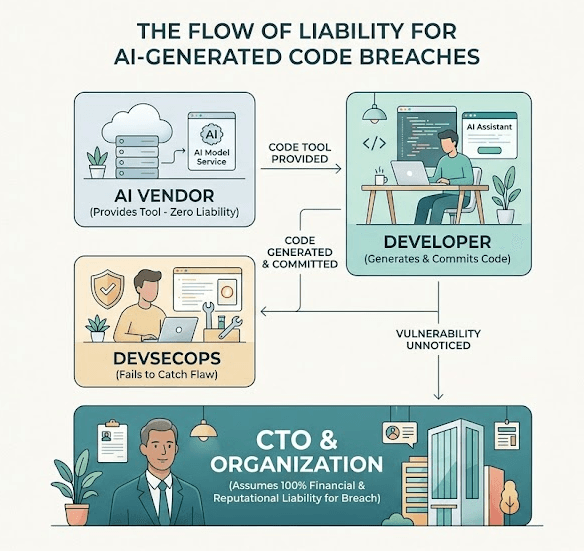

For a CTO, the “vibe coding” era introduces a massive legal and ethical headache. When a human developer writes a bug, the chain of accountability is clear. But when an AI hallucinates an insecure data pipeline, who takes the blame?

The hard truth of 2026 is that, in practice, liability in AI code falls on the organization deploying it. Microsoft, OpenAI, and Anthropic are not going to pay your GDPR fines, nor will they apologize to your customers when a data breach hits the news. The AI is a tool, not a scapegoat.

Attackers are acutely aware of this dynamic. Automated bots already scan the internet for common, easily exploited flaws, and the predictable patterns that AI code assistants tend to produce fit that profile. Attackers know that developers are shipping faster than they can manually review, creating a massive attack surface of easily exploitable logic flaws.

Securing the Vibe: DevSecOps to the Rescue

So, do you rip the AI tools out of your developers' hands and go back to slow, manual coding? Absolutely not. In today's market, slowing down means getting left behind.

Instead, you need to evolve your security posture to match your development speed. This means fully embracing DevSecOps, the practice of baking security seamlessly into the automated CI/CD pipeline rather than treating it as a final, panicked roadblock.

1. Upgrade Your SAST

Your first line of defense is SAST (Static Application Security Testing). Modern SAST tools parse your source code and trace how data flows through it, looking for the patterns an attacker would abuse. By integrating SAST directly into your pull requests, you can automatically flag hardcoded secrets, known insecure coding patterns, and in some cases missing authorization checks before a human reviewer even looks at the code.
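To make the idea concrete, here is a toy static check built on Python's standard `ast` module. It is only a sketch of the kind of rule a SAST tool applies, not how any particular product works: the name pattern and the `find_hardcoded_secrets` helper are assumptions, and real tools cover far more cases.

```python
import ast
import re

# Assumed heuristic: variable names that usually hold credentials.
SECRET_NAME = re.compile(r"(password|secret|api_key|token)", re.IGNORECASE)


def find_hardcoded_secrets(source: str) -> list:
    """Return (line, name) pairs where a secret-like name gets a string literal."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Assign):
            continue
        value = node.value
        # Only non-empty string literals count; env lookups and calls are fine.
        if not (isinstance(value, ast.Constant) and isinstance(value.value, str) and value.value):
            continue
        for target in node.targets:
            if isinstance(target, ast.Name) and SECRET_NAME.search(target.id):
                findings.append((node.lineno, target.id))
    return findings


SAMPLE = (
    "import os\n"
    'API_KEY = "sk-test-123"\n'
    'db_password = os.environ["DB_PASSWORD"]\n'
)
findings = find_hardcoded_secrets(SAMPLE)
```

On the sample, only the literal assigned to `API_KEY` on line 2 is flagged; the password read from the environment is not. Run as a pull request check, a rule like this could fail the build whenever the findings list is non-empty.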

2. Active Defense with Axeploit

However, static analysis is no longer enough. Because AI-generated flaws like IDOR are rooted in business logic rather than syntax, you need a tool that tests how your application behaves in the real world.

This is where Axeploit becomes your ultimate safety net. While your developers vibe code at maximum velocity, Axeploit’s automated vulnerability scanner actively attacks your live, running applications from the outside, mimicking the exact behaviors of a malicious hacker.

If your AI accidentally generates an endpoint that permits an IDOR attack, or if an overly helpful code assistant leaves a REST API exposed, Axeploit’s dynamic scanner will catch it, flag the exact exploit path, and show your team precisely how to patch it before it reaches production.
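The general idea behind a dynamic IDOR check can be sketched in a few lines of Python. This is not Axeploit's implementation or API. The `probe_idor` helper and both endpoint functions are hypothetical stand-ins that return HTTP-style status codes, so the example runs without a network.

```python
from typing import Callable, Iterable

# Hypothetical ownership data behind the simulated endpoints.
OWNERS = {1042: "alice", 1043: "bob", 1044: "bob"}


def vulnerable_endpoint(invoice_id: int) -> int:
    # Simulated response for a request authenticated as "alice":
    # the handler checks existence only, never ownership.
    return 200 if invoice_id in OWNERS else 404


def fixed_endpoint(invoice_id: int) -> int:
    # Same request, but the handler also enforces ownership.
    return 200 if OWNERS.get(invoice_id) == "alice" else 404


def probe_idor(
    fetch_status: Callable[[int], int],
    own_ids: set,
    candidate_ids: Iterable[int],
) -> list:
    """Return IDs the test user does not own but can still read (status 200)."""
    return [
        candidate
        for candidate in candidate_ids
        if candidate not in own_ids and fetch_status(candidate) == 200
    ]


exposed = probe_idor(vulnerable_endpoint, {1042}, range(1040, 1046))
clean = probe_idor(fixed_endpoint, {1042}, range(1040, 1046))
```

Against the vulnerable handler the probe reports invoices 1043 and 1044 as readable by the wrong user; against the fixed handler it reports nothing. A real scanner would send authenticated HTTP requests to a running deployment rather than call functions directly.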

Conclusion: Code with AI, Secure with Confidence

The transition to AI-assisted coding is one of the greatest leaps in software engineering history. But as the barrier to creating complex applications drops to near zero, the responsibility of securing those applications remains entirely human.

You cannot outsource your security architecture to a language model. By adopting a DevSecOps mindset, acknowledging the realities of AI liability, and arming your team with active vulnerability scanners like Axeploit, you can safely harness the raw speed of AI without sacrificing your company's reputation.