The Rise of AI Code Generators

AI code generators like GitHub Copilot and Cursor promise to boost productivity. Developers type a prompt, and these tools spit out working code snippets in seconds. Adoption surged in 2025, with surveys showing over 70% of engineering teams using them daily.

This shift feels like a revolution. Traditional coding involves hours of typing boilerplate. Now, focus shifts to architecture and logic. Yet beneath the speed lies a problem. These tools train on vast public repositories filled with insecure code. They replicate flaws at scale.

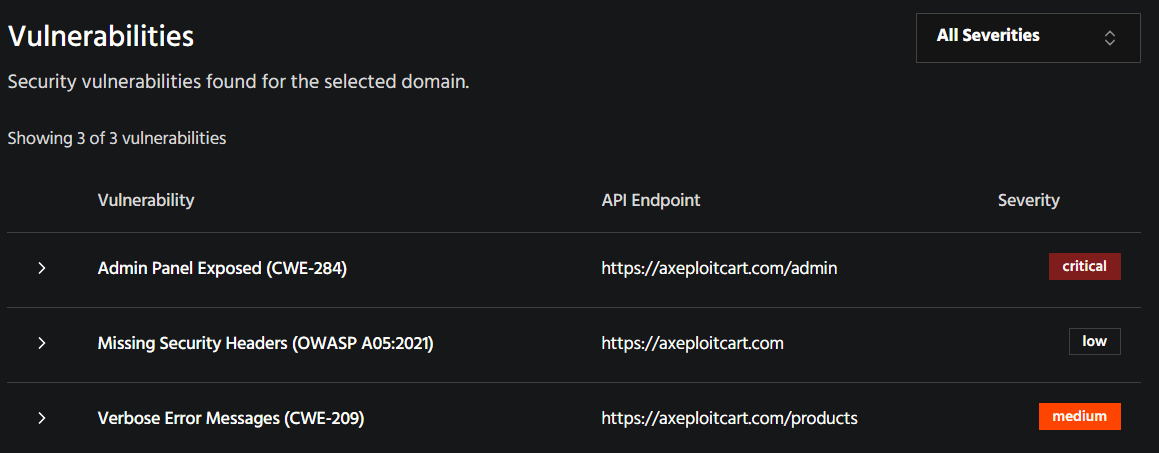

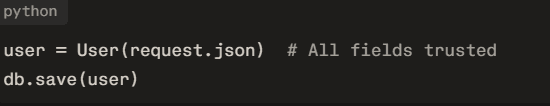

Axeploit enters here as an AI-driven scanner from https://axeploit.com/. It automates testing for over 7,500 vulnerabilities, including OWASP Top 10 and API flaws. Unlike legacy tools needing manual setup, Axeploit handles authentication autonomously with real OTPs and emails.

Why AI Code Looks Good but Fails on Security

Generated code passes syntax checks and runs. It handles happy paths perfectly. Problems emerge under attack. Veracode's 2025 report found that 45% of AI outputs contain exploitable bugs. Java fares worst with a 70% failure rate, followed by Python at 40%.

Copilot and Cursor prioritize functionality over security. Prompts rarely specify "secure this." Tools assume developers add guards later. Reality differs. Rushed teams accept suggestions verbatim, so the default becomes whatever insecure patterns the model learned from GitHub's wild west.

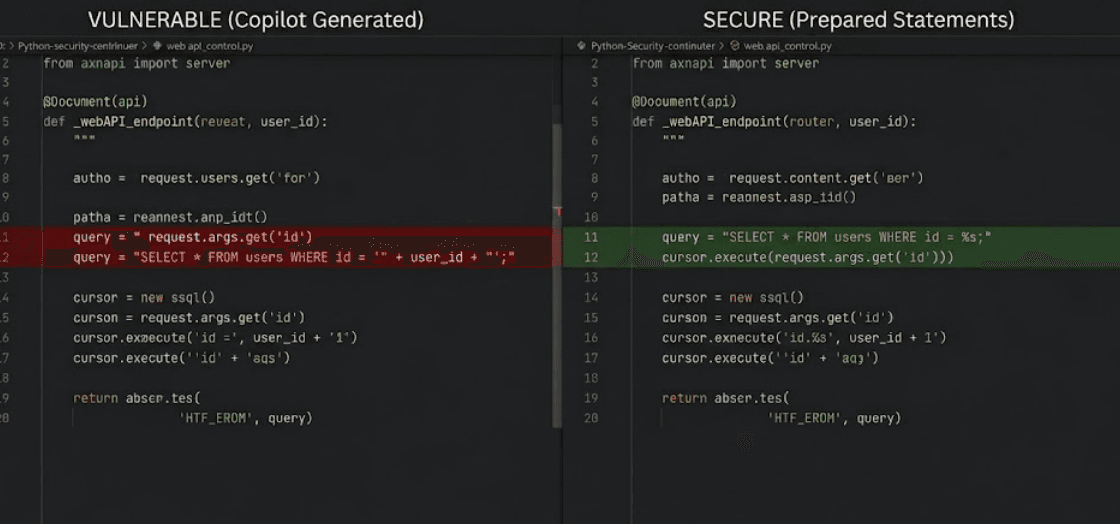

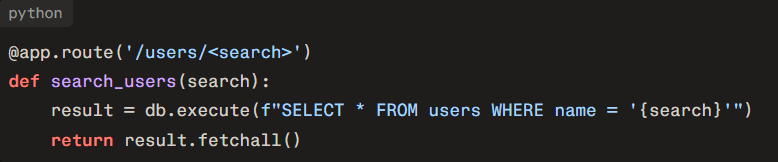

Consider input handling. AI often skips validation. A simple "build a user search" prompt yields direct string concatenation into SQL. No parameterization. Attackers inject payloads easily.

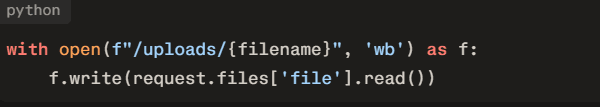

Injection Flaws: The Low-Hanging Fruit

SQL injection tops the list. Veracode found LLMs fail CWE-89 in 50% of database tasks. Cursor might generate a Python Flask endpoint that builds its query by concatenating the request parameter straight into the SQL string.

This invites classic exploits. ' OR 1=1 -- dumps the database. Copilot repeats the sin in Node.js with raw queries.
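The concatenation pattern and its fix can be sketched without a web framework. This is a minimal illustration, not output captured from Cursor or Copilot: it assumes an in-memory SQLite database, and the table, the sample users, and the function names are all hypothetical.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two hypothetical users."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


def search_users_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable (CWE-89): user input becomes part of the SQL text itself.
    query = "SELECT id, name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()


def search_users_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized: the driver binds the value, so it is never parsed as SQL.
    query = "SELECT id, name FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


if __name__ == "__main__":
    conn = make_db()
    payload = "' OR 1=1 --"
    print("unsafe:", search_users_unsafe(conn, payload))  # every row comes back
    print("safe:  ", search_users_safe(conn, payload))    # no rows match
```

With the payload above, the unsafe version returns both users while the parameterized version returns nothing, because the whole payload is compared as a literal name.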

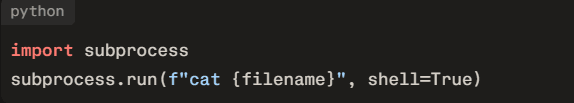

OS command injection follows. A file processor prompt produces code that drops the user-supplied filename straight into a shell command.

No sanitization. Attackers supply ; rm -rf /. Reports confirm 88% failure on log injection (CWE-117), where unsanitized inputs corrupt logs and enable evasion.
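One common mitigation for the command injection case is to skip the shell entirely and pass arguments as a list. The sketch below assumes a hypothetical process_file helper. A child Python process that echoes its argument stands in for the real file tool, so the example is harmless to run.

```python
import subprocess
import sys


def process_file(filename: str) -> str:
    """Hand the filename to a child process as one literal argument."""
    # Vulnerable pattern, for contrast (do not use):
    #   subprocess.run("some-tool " + filename, shell=True)
    # Hypothetical stand-in for a real tool: echo back the argument received.
    argv = [sys.executable, "-c", "import sys; print(sys.argv[1])", filename]
    # With an argument list and the default shell=False, no shell parses the
    # input, so characters like ';' and '&&' carry no special meaning.
    result = subprocess.run(argv, capture_output=True, text=True, check=True)
    return result.stdout.strip()


if __name__ == "__main__":
    print(process_file("report.txt; echo pwned"))
```

The child receives the whole string, semicolon included, as a single filename. Real tools may still treat a leading dash as an option, so validating filenames against an allowlist remains worthwhile.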

Axeploit detects these automatically. It crawls apps, fills forms, and tests payloads across discovered APIs. Traditional scanners miss authenticated flows. Axeploit signs itself up.

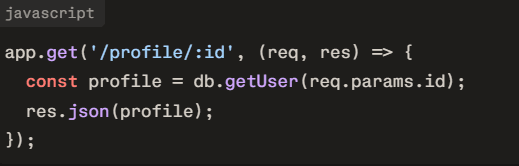

Authentication and Access Control Oversights

Broken authentication plagues 30% of breaches. AI code skips it routinely. A prompt such as "fetch user profile by ID" often yields a handler with no check that the requester owns the record.

IDOR (Insecure Direct Object Reference) ensues. User 123 views user 456's data. Cursor exacerbates the problem with mass assignment, copying every field of the request body onto the model.

Admins get created via hidden fields. OWASP API Top 10 lists these as critical.
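Both oversights come down to a missing check, which a framework-free sketch can show. Everything here is hypothetical: the User model, the in-memory USERS store, and the EDITABLE_FIELDS allowlist. It also assumes requester_id comes from an authenticated session, never from the request body.

```python
from dataclasses import dataclass


@dataclass
class User:
    id: int
    name: str
    email: str
    is_admin: bool = False


# Hypothetical in-memory store standing in for a database.
USERS = {
    1: User(1, "alice", "alice@example.com"),
    2: User(2, "bob", "bob@example.com"),
}

# Only these fields may be changed by the account owner.
EDITABLE_FIELDS = {"name", "email"}


class Forbidden(Exception):
    """Raised when the caller is not allowed to touch the target record."""


def get_profile(requester_id: int, target_id: int) -> User:
    # Ownership check: the requested ID must belong to the authenticated caller.
    if requester_id != target_id:
        raise Forbidden(f"user {requester_id} may not read user {target_id}")
    return USERS[target_id]


def update_profile(requester_id: int, target_id: int, payload: dict) -> User:
    user = get_profile(requester_id, target_id)
    for field_name, value in payload.items():
        # Allowlist: anything else, such as is_admin, is dropped silently.
        if field_name in EDITABLE_FIELDS:
            setattr(user, field_name, value)
    return user
```

A request that smuggles in "is_admin": true updates only the allowed fields, and a request for someone else's ID fails before any data is read.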

Copilot's in-context learning makes it worse. Snyk's tests showed suggestions copying nearby insecure code, duplicating flaws.

Axeploit shines in auth testing. It creates accounts, verifies OTPs, and probes for bypasses like weak tokens or email flaws. Scans reveal 30% more issues than manual pentests.

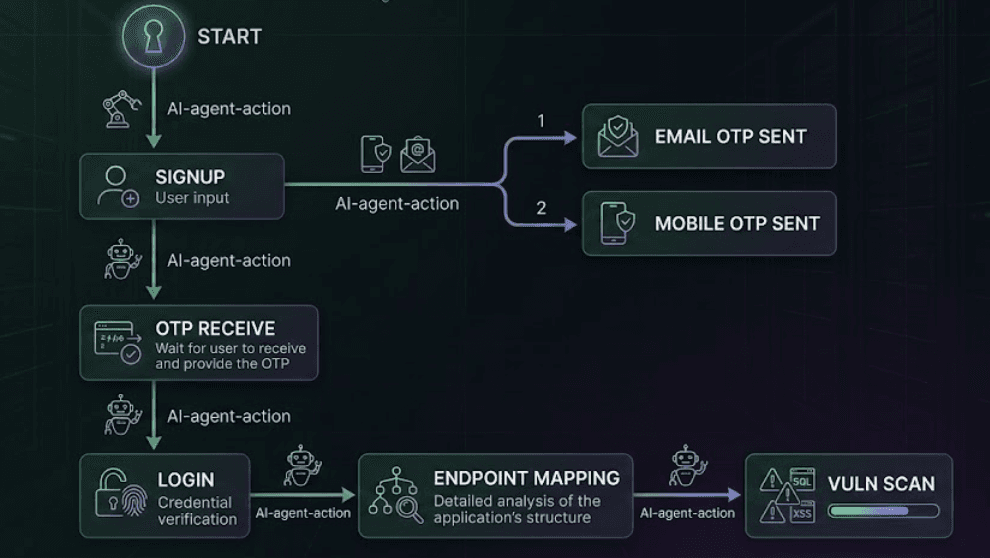

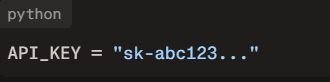

Cryptographic Failures and Secrets

Weak crypto appears often. AI suggests unsalted MD5 for password hashing.

Such hashes are cracked in seconds. Hardcoded secrets abound, with API keys and passwords written directly into source.

No env vars. Hallucinated dependencies trick devs into installing fake packages like "nonexistent-lib", ripe for supply chain attacks.

Veracode notes crypto failures in 40% of cases. Python and JS suffer most.
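For the password case, a salted, deliberately slow key-derivation function is the usual replacement for MD5. The sketch below uses PBKDF2-HMAC-SHA256 from Python's standard library as one option among several. The iteration count and function names are assumptions for illustration, not a recommendation from the reports cited here.

```python
import hashlib
import hmac
import os

# Assumed work factor: tune it for your own hardware and latency budget.
ITERATIONS = 600_000


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest); store both next to the user record."""
    salt = os.urandom(16)  # a fresh random salt for every password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password: str, salt: bytes, expected: bytes) -> bool:
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    # Constant-time comparison avoids leaking how many bytes matched.
    return hmac.compare_digest(digest, expected)
```

The per-user salt defeats precomputed tables, and the iteration count makes each guess expensive in a way a single MD5 call never is.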

Axeploit scans for exposed keys in endpoints and code. Its secret analysis flags tokens in responses.
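On the code side, the simplest guard against hardcoded secrets is to read them from the environment and fail loudly when they are missing. A minimal sketch follows. The load_secret helper and the PAYMENT_API_KEY variable name are hypothetical.

```python
import os


class MissingSecret(RuntimeError):
    """Raised at startup when a required secret is not configured."""


def load_secret(name: str) -> str:
    # Read from the process environment instead of a literal in source control.
    value = os.environ.get(name)
    if not value:
        raise MissingSecret(f"environment variable {name} is not set")
    return value


if __name__ == "__main__":
    # PAYMENT_API_KEY is a made-up name used only for this demonstration.
    os.environ.setdefault("PAYMENT_API_KEY", "demo-value-for-local-run")
    print(len(load_secret("PAYMENT_API_KEY")))
```

Failing at startup keeps a missing key from turning into a silent fallback to a default value baked into the code.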

Business Logic and Advanced Flaws

AI struggles with logic. Rate limiting? Absent. File uploads arrive with no checks on the filename or destination path.

Path traversal via ../ or RCE follows. Business logic flaws like discount stacking evade static analysis.
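The traversal half of the upload problem can be handled by resolving the final path and confirming it still sits inside the upload directory. This is a sketch under assumptions: the uploads directory and the safe_upload_path name are hypothetical, and it covers path traversal only, not file-type checks or executable content.

```python
import os

# Hypothetical upload root, resolved once to an absolute path.
UPLOAD_DIR = os.path.realpath("uploads")


def safe_upload_path(filename: str) -> str:
    """Resolve filename under UPLOAD_DIR, rejecting anything that escapes it."""
    candidate = os.path.realpath(os.path.join(UPLOAD_DIR, filename))
    # commonpath equals UPLOAD_DIR only when candidate lies inside that directory.
    inside = os.path.commonpath([UPLOAD_DIR, candidate]) == UPLOAD_DIR
    if not inside or candidate == UPLOAD_DIR:
        raise ValueError(f"rejected upload path: {filename!r}")
    return candidate
```

Inputs such as ../secret or an absolute path resolve outside the root and are rejected before any bytes are written.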

Cursor's agentic features amplify risks. Autonomous edits ignore context, introducing race conditions.

Axeploit tests logic flaws dynamically. It simulates users, chains actions, and verifies PoCs. A finding is reported only when a working exploit backs it, which keeps false positives out.

Image description: Table screenshot from Axeploit report listing OWASP Top 10 vulns: Column1: Vulnerability (e.g., BOLA), Column2: Severity (High), Column3: PoC Evidence (screenshot of exploit).

Data Exfiltration and Privacy Leaks

Cloud-based tools like Copilot send code to Microsoft servers. Sensitive context leaks. Pillar Security found prompt injection risks in Cursor.

Excessive data exposure shows up in APIs too: full user objects are returned without any field filtering.
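Filtering the response through an explicit allowlist is one way to avoid returning the full object. In this minimal sketch, the field names and the to_public helper are hypothetical.

```python
# Fields that are safe to return to any caller (an assumed policy).
PUBLIC_FIELDS = ("id", "name")


def to_public(user: dict) -> dict:
    """Copy only allowlisted fields into the API response."""
    return {field: user[field] for field in PUBLIC_FIELDS if field in user}


if __name__ == "__main__":
    record = {"id": 7, "name": "alice", "password_hash": "x", "ssn": "000-00-0000"}
    print(to_public(record))
```

An allowlist fails closed: a column added to the table later stays out of the response until someone adds it deliberately.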

Axeploit maps APIs in depth and flags over-exposure.

Real-World Case Studies

Take a fintech app. The team used Copilot for transaction endpoints and missed a SQL injection flaw, leading to $1M in fraud. A post-incident scan with Axeploit found 25 flaws.

An e-commerce site shipped Cursor-generated upload code. Attackers gained RCE via uploaded web shells. Axeploit caught the flaw in CI/CD.

Veracode analyzed 100+ LLMs: Newer models no better. 86% XSS failures.

Image description: Graph from Veracode report: Bar chart of vuln rates by language (Java 70%, Python 40%), x-axis languages, y-axis failure %. Red bars dominant.

The Feedback Loop Problem

Insecure code merges, trains future models. Supply chain risks grow. OWASP LLM Top 10 warns of poisoning.

Teams need audits at merge. Axeploit integrates via webhooks, scans diffs.

Auditing AI Code Effectively

Manual review scales poorly. Static tools miss runtime issues.

Steps for secure workflow:

- Prompt explicitly: "Secure user input with validation."

- Run SAST/DAST immediately.

- Use Axeploit for full attack simulation.

Axeploit wins on depth. It crawls intelligently and adapts to UI changes.

Image description: Mermaid diagram of secure CI/CD pipeline: Git Push -> AI Code Gen -> Axeploit Scan -> SAST -> Merge if Green. Nodes connected by arrows.

Metrics and Benchmarks

NYU study: 40% Copilot code vulnerable.

Veracode: 45% overall, 70% Java.

Axeploit claims 20+ vulns per scan average.

Integrating Axeploit

Paste URL, hit scan. Reports export PDF. Slack alerts. API triggers.

Image description: Slack notification from Axeploit: "Critical SQLi found in /api/users. Report: link. Severity: High." With thumbs up emoji.

Cost of Ignoring Risks

Breaches cost $4.5M average. AI speeds exploits too. Attackers use LLMs for payloads.

Prevention is cheaper. Axeploit pays for itself with the first finding.

Future-Proofing Development

Hybrid approach: AI for speed, scanners for safety. Axeploit evolves with LLMs, learning per scan.

Expect regulations mandating audits. Teams adopting now lead.

Image description: Futuristic illustration of developer at desk with Copilot screen, Axeploit agent icon scanning code in background, shield protecting from hacker silhouettes.

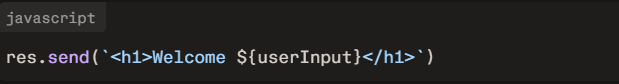

Specific Flaws Deep Dive: XSS and CSRF

XSS: 86% of samples fail. AI output echoes user input into HTML without escaping.
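Escaping at the point of output is the baseline defense. Here is a minimal sketch using the standard library's html.escape. The render_greeting function is hypothetical, and a real application would normally lean on a template engine with auto-escaping.

```python
import html


def render_greeting(name: str) -> str:
    # Escape user input before placing it in HTML so tags render as text.
    return "<p>Hello, " + html.escape(name) + "!</p>"


if __name__ == "__main__":
    print(render_greeting("<script>alert(1)</script>"))
```

The script tag comes out as inert text (&lt;script&gt;...) instead of executing in the browser.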

CSRF: generated forms ship without anti-forgery tokens.
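A synchronizer token is the classic fix: issue a random value per session, embed it in the form, and compare it on submit. The sketch below assumes a plain dict as the session store. The function names are hypothetical, and most frameworks provide this built in.

```python
import hmac
import secrets


def issue_csrf_token(session: dict) -> str:
    """Create a random token, remember it in the session, return it for the form."""
    token = secrets.token_urlsafe(32)
    session["csrf_token"] = token
    return token


def is_valid_csrf(session: dict, submitted: str) -> bool:
    expected = session.get("csrf_token")
    if not expected or not submitted:
        return False
    # Compare as bytes in constant time; str inputs must be ASCII otherwise.
    return hmac.compare_digest(expected.encode("utf-8"), submitted.encode("utf-8"))
```

A forged cross-site request cannot read the victim's token, so its submission fails the comparison.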

Axeploit injects payloads and checks the resulting DOM to confirm they execute.

SSRF and Rate Limits

SSRF arises when generated code fetches user-supplied URLs without validation. Missing rate limits open the door to DoS.
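A first line of defense against SSRF is to resolve the target host and refuse internal addresses before fetching. This sketch is an assumption-laden starting point rather than a complete defense. The is_safe_url name is hypothetical, and a production fetcher should also connect to the exact address it validated, to avoid DNS rebinding.

```python
import ipaddress
import socket
from urllib.parse import urlsplit


def is_safe_url(url: str) -> bool:
    """Allow only http(s) URLs whose host resolves to public addresses."""
    try:
        parts = urlsplit(url)
    except ValueError:
        return False
    if parts.scheme not in ("http", "https") or not parts.hostname:
        return False
    try:
        infos = socket.getaddrinfo(parts.hostname, None)
    except (socket.gaierror, UnicodeError):
        return False
    for info in infos:
        try:
            ip = ipaddress.ip_address(info[4][0])
        except ValueError:
            return False
        # Reject loopback, private ranges, link-local (cloud metadata), and similar.
        if (ip.is_private or ip.is_loopback or ip.is_link_local
                or ip.is_reserved or ip.is_multicast or ip.is_unspecified):
            return False
    return bool(infos)
```

Requests aimed at 127.0.0.1 or at the 169.254.169.254 metadata address are refused, as are non-HTTP schemes such as file://.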

File and Dependency Risks

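Unchecked uploads, as noted above, are one risk. Slopsquatting is another: attackers register the package names that models hallucinate, so installing a suggested dependency blindly can pull in malicious code.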

Conclusion Through Action

AI code generators transform work. Security demands vigilance. Axeploit bridges the gap, auditing what Copilot misses. Start scanning today at https://axeploit.com/. Secure code wins races.