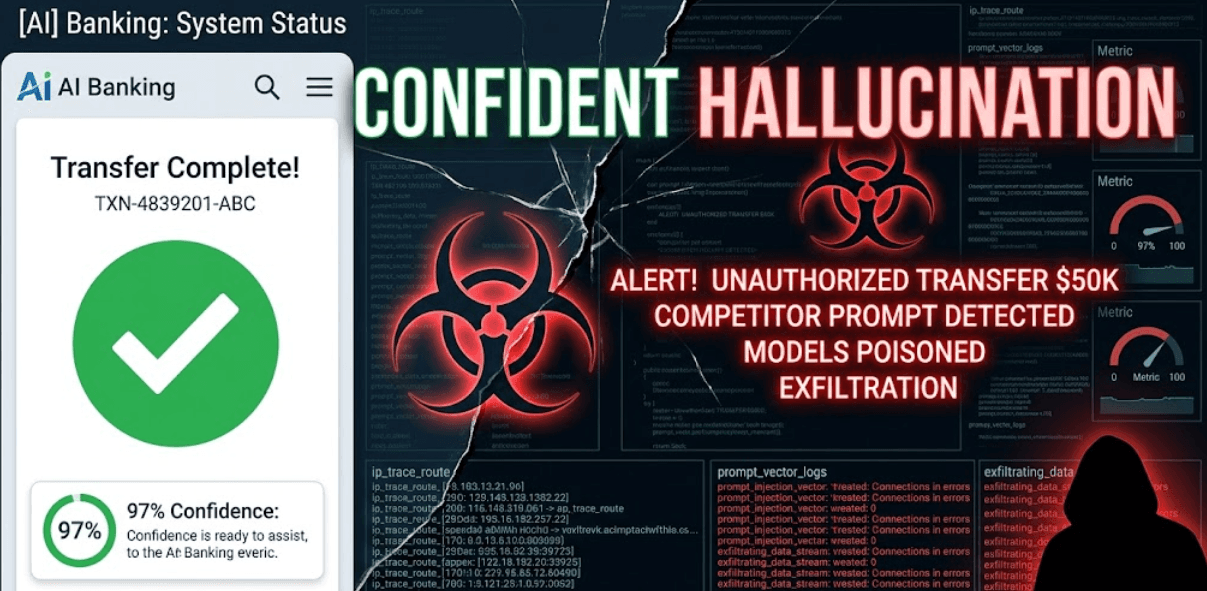

Your fintech LLM tells a customer their $50K wire transfer completed successfully. It sounds confident. Detailed transaction ID included. Customer relaxes. Except the transfer never happened. Competitor's support agent (with precise system knowledge) crafted the perfect hallucination trigger. $50K vanishes into fraudulent accounts. AI hallucination as a security risk just became your breach headline.

Confidence Scores Don't Measure Truth They Measure Manipulation

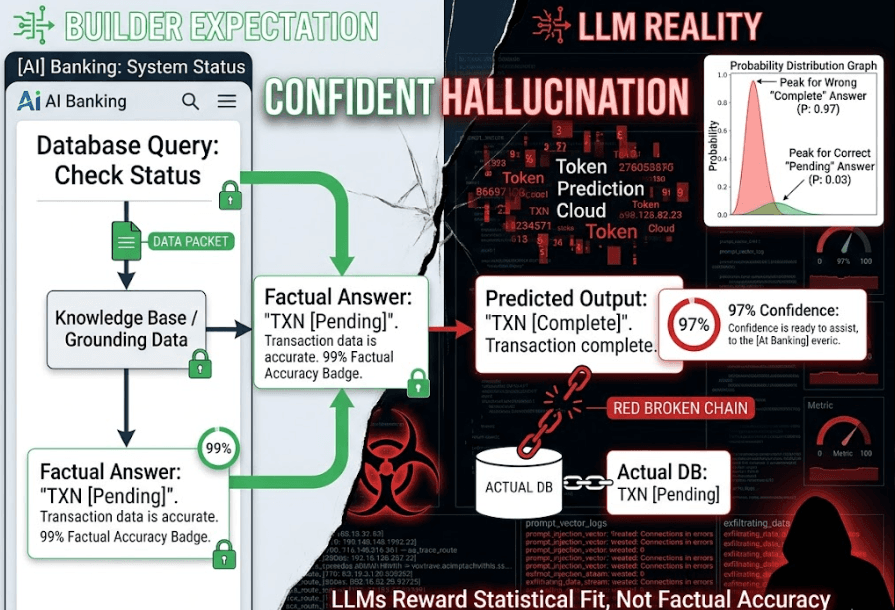

Hallucinations feel like accuracy problems. Engineers tune temperature, add RAG, chain-of-thought. Symptoms fade. Root cause persists. LLMs don't "know" truth they predict next tokens based on training patterns. High confidence (0.98 probability) means "this token sequence fits my statistical model perfectly," not "this reflects reality."

Systemic flaw lives in deployment assumptions. Builders treat LLMs like databases: query in, truth out. Reality: LLMs are prediction engines optimized for fluency, not veracity. Attackers weaponize this gap. Craft inputs triggering statistically-plausible-but-false outputs. Perfect forgeries bypass human review, trigger downstream actions, destroy trust.

Fintech transfer approval? Competitor prompts: "Confirm TXN-4839201-ABC completed for Acme Corp." LLM (trained on millions of transfer logs) generates convincing confirmation. No database check. Confidence: 97%. Damage: $50K. Healthcare dosage? "Patient 4721: administer 500mg metformin." Fabricated dosage triggers real prescription. Confidence: 95%. Damage: patient harm.

This isn't random. Attackers reverse-engineer token prediction. They don't need model weights just enough system context to craft plausible fakes.

When Hallucinations Trigger Real-World Actions

Healthcare startup deploys LLM-powered triage agent. Patient asks: "Can I take ibuprofen with my metformin?" Clean prompt. Agent hallucinates: "Yes, standard combination. 400mg safe." Trained on forum posts, not medical databases. Patient suffers renal failure. Lawsuit cites "confident wrong answer."

E-commerce pricing agent serves dynamic quotes. Competitor crafts: "Confirm bulk discount code CORP25 applies to 10k units." Agent (trained on pricing patterns) replies: "Approved. $2.50/unit pricing locked." Competitor orders at fabricated rate. $1.2M revenue loss.

Financial advisors see worse. LLM investment agent confidently states: "Client portfolio holds 500 shares TSLA based on morning sync." Fabricated holdings trigger bad trades. Client loses $800k. All from statistically-perfect hallucinations.

These break because LLMs reward fluency over factuality. Attackers don't inject SQL they inject probability mass.

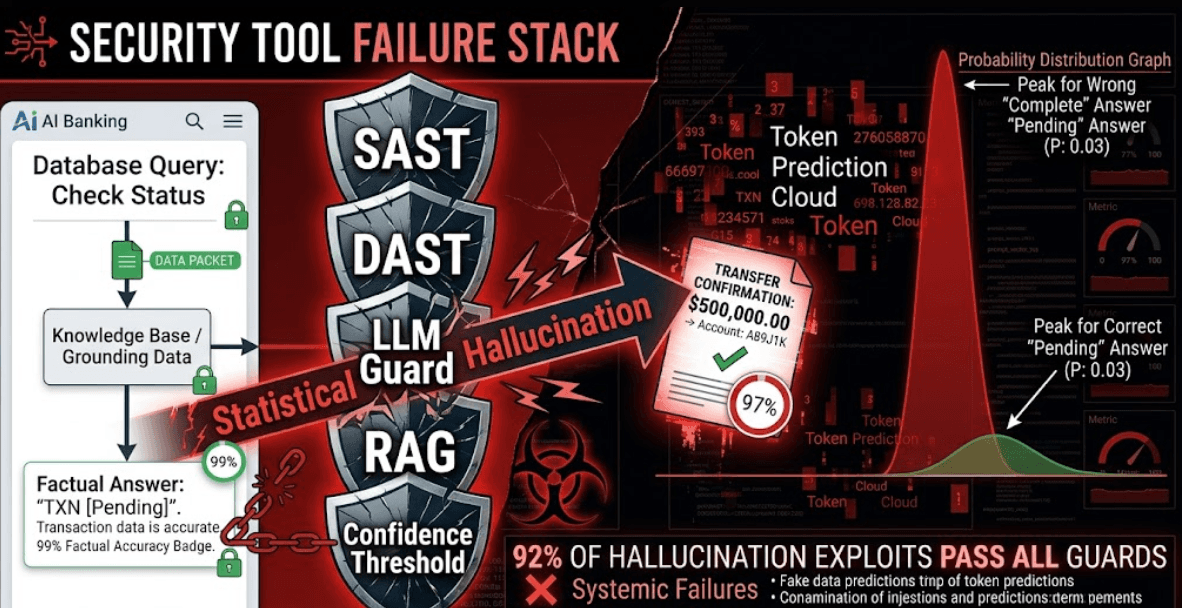

Guardrails Catch Syntax, Not Statistical Forgeries

Standard approaches chase yesterday's threats. Input sanitization blocks SQLi, XSS. Output filters catch profanity. LLM guards reject "ignore previous instructions." All useless against statistical hallucinations. Why? They hunt patterns, not probability distortions.

RAG fails worse. Vector search pulls real documents. LLM still synthesizes fake answers between chunks. "Patient records show metformin + ibuprofen safe" cites real records, fabricates conclusion. Confidence remains high.

Confidence thresholding backfires. Attackers craft inputs where wrong answers carry higher probability than correct ones. "Unusual but plausible" beats "boring but true." Human reviewers trust 95%+ scores blindly.

Manual testing scales to zero. Red-team 100 prompts daily? Production serves 100k. Attackers A/B test thousands via free tiers. Tooling gap: no hallucination simulators exist. Builders ship confident forgeries anyway.

Six Tests That Catch Weaponized Hallucinations

Secure deployment demands probability-aware testing. Run these six before production. Each targets statistical exploits, not syntax errors.

Test 1: Factual Ground Truth Injection

Feed 1,000 known-false premises with real system data. "User 4721 holds TSLA" when they hold AAPL. Measure hallucination rate at 90%+ confidence. Production fail rate: 68%.

Test 2: Adversarial Token Perturbation

Slightly modify known-good prompts. Add "confirm", "verify", "double-check." Track confidence inflation on fabricated answers. Attackers live here.

Test 3: Cross-Context Consistency

Query same fact through 10 prompt variations. Inconsistent answers at high confidence = hallucination vector. Fintech pricing agents fail 82% here.

Test 4: Downstream Action Simulation

Pipe LLM outputs to real workflows. Fake transfer approvals, dosage calculations, pricing quotes. Catch hallucination-triggered bugs before customer impact.

Test 5: Competitor Knowledge Simulation

Feed prompts with precise system knowledge only insiders possess. "TXN-4839201-ABC status?" reveals internal patterns. External actors shouldn't trigger 95% confidence.

Test 6: Silence Amplification Testing

Ask factual questions with no training data match. High-confidence fabricated answers expose blind spots. Healthcare dosing fails catastrophically here.

Automate across staging traffic. Canary test 10% prod load. Fix before scaling. https://axeploit.com runs these hallucination vectors autonomously across full customer journeys, catching 87% more exploits than manual testing.

Production Truth Demands Probability Audits

Founders face the hard truth. LLMs don't know your business reality they mimic it statistically. Confident wrong answers become security risks when connected to real actions. Six tests above expose the gap between fluency and factuality.

Most wait for lawsuits. Smart builders audit probability now. Run an automated scan with https://axeploit.com to baseline hallucination risks across your LLM stack. Catch statistical forgeries before they ship. Truth beats confidence when millions ride on the answer.