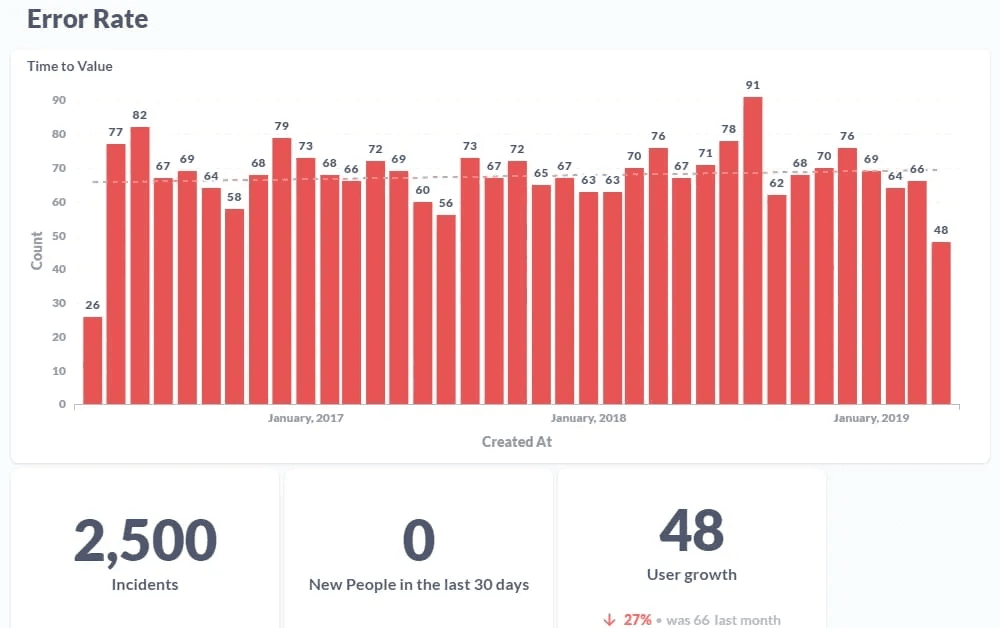

You ran a scan last Tuesday. The dashboard shows 847 critical findings. Your team has three days to triage them before the compliance deadline.

By Friday, you've cleared 200. Of those, 170 were false positives. Version mismatches. Outdated signatures matching headers that don't indicate an actual vulnerability. Theoretical attack paths that require conditions your infrastructure doesn't have.

The remaining 647 findings sit there unreviewed. Including the three that actually matter.

This is the validation crisis. Most security dashboards measure volume, not risk.

What "Critical" Actually Means to Your Scanner

When a legacy DAST tool labels something "critical," it's making a guess. The scanner found a version string in a server header that matches a known CVE. Or it detected a parameter that could theoretically accept SQL injection. Or a cookie was missing a security flag.

None of those confirm your application is exploitable.

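Take Apache 2.4.49 and CVE-2021-41773. The scanner reads the version header, matches the CVE, and flags path traversal as critical. But the traversal only succeeds if directories outside the document root aren't protected by Apache's default "Require all denied" rule, and the remote code execution variant also needs CGI enabled for the affected paths. If access controls or filesystem permissions block traversal, or a reverse proxy normalizes the path before it reaches Apache, the "critical" finding describes a version number, not a vulnerability.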
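To make the "guess" concrete, here is a minimal sketch of what a version-signature check boils down to. The lookup table and the `signature_findings` function are hypothetical and far simpler than any real scanner's signature database, but they share the same blind spot: the check reads a header and never looks at configuration, permissions, or proxies.

```python
import re

# Hypothetical lookup table: (product, version) -> CVEs a naive scanner
# associates with it. Illustrative only; real signature databases are far larger.
VERSION_CVES = {
    ("Apache", "2.4.49"): ["CVE-2021-41773"],
}


def signature_findings(server_header: str) -> list[str]:
    """Flag CVEs purely from the Server header, the way a signature check does.

    Nothing here inspects the target's access rules, modules, or proxies,
    so a match only proves that the version string is present.
    """
    match = re.match(r"(?P<product>[A-Za-z]+)/(?P<version>[\d.]+)", server_header)
    if not match:
        return []
    key = (match.group("product"), match.group("version"))
    return VERSION_CVES.get(key, [])


# Two servers sending the same header get the same verdict, whether or not
# one of them denies requests outside the document root.
print(signature_findings("Apache/2.4.49 (Unix)"))  # ['CVE-2021-41773']
print(signature_findings("Apache/2.4.51 (Unix)"))  # []
```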

Multiply that pattern across every version check, every signature match, every theoretical condition. You get 800+ findings where maybe a dozen represent actual risk.

The Triage Tax Nobody Budgets For

Every false positive costs time. Someone on your team reads the finding, understands the context, tests whether it's exploitable, and marks it accordingly. That takes 15 to 30 minutes per finding for a senior engineer who understands both the vulnerability class and your application's architecture.

At 800 findings, that's 200 to 400 hours of triage: five to ten weeks of one engineer's full-time work. Just to separate real vulnerabilities from noise.
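The same arithmetic as a quick sketch you can rerun with your own numbers. The `triage_cost` helper is hypothetical, and it assumes one engineer doing nothing but triage for 40 hours a week.

```python
def triage_cost(findings: int, minutes_low: int = 15, minutes_high: int = 30,
                hours_per_week: int = 40) -> dict:
    """Estimate manual triage effort from a per-finding time range."""
    hours_low = findings * minutes_low / 60
    hours_high = findings * minutes_high / 60
    return {
        "hours": (hours_low, hours_high),
        # Assumes a single engineer triaging full-time.
        "weeks": (hours_low / hours_per_week, hours_high / hours_per_week),
    }


print(triage_cost(800))
# {'hours': (200.0, 400.0), 'weeks': (5.0, 10.0)}
```

Halve `hours_per_week` to model an engineer who can only spend half their week on triage, and the calendar time doubles.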

Most teams don't have that time. So they prioritize by scanner severity, fix what looks worst, and defer the rest. But scanner severity reflects theoretical impact, not actual exploitability. The findings at the top aren't necessarily the most dangerous. They're the ones the scoring formula ranked highest.

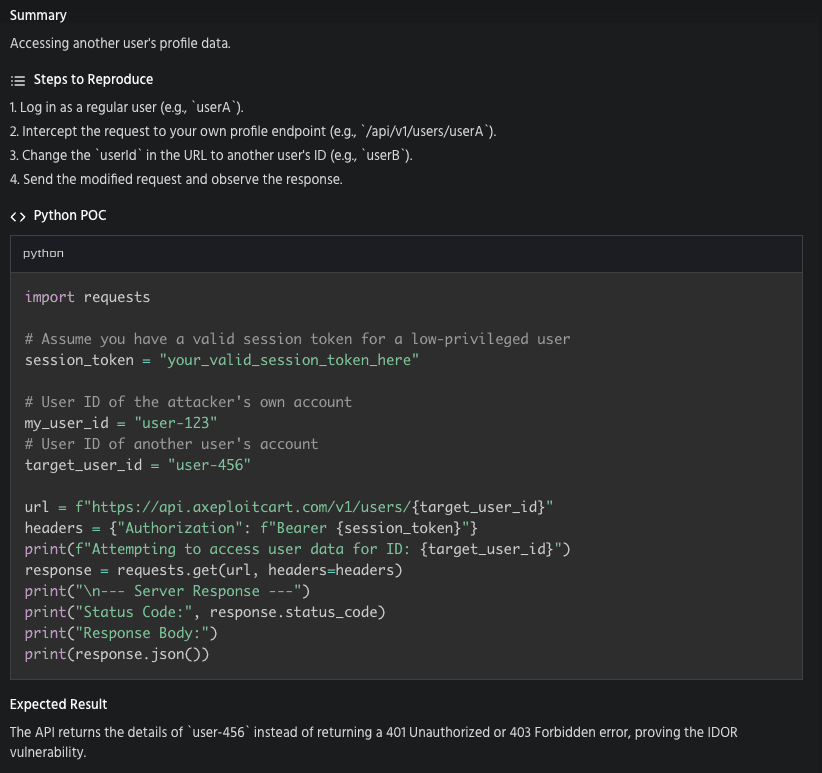

Meanwhile, the IDOR (insecure direct object reference) sitting as a "medium" finding on page 14 goes unnoticed. That one lets any authenticated user download another user's billing data. The scanner scored it below the version-string matches because it couldn't understand the business context.

The dashboard is full. The team is busy. The real vulnerabilities are buried in the noise.

Why the Noise Is Structural, Not Accidental

Spend time in any sysadmin or security operations forum and you'll find the same complaint. Scanners produce mountains of noise. Triage takes longer than remediation. Critical findings lose meaning when everything is critical.

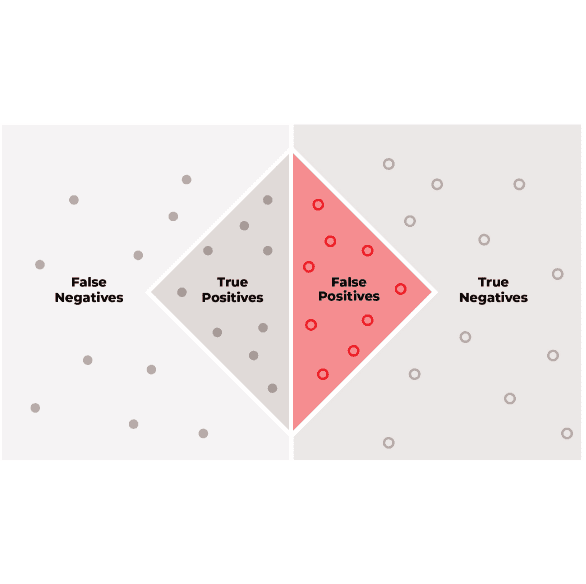

This isn't a configuration problem. It's architectural. Legacy DAST tools flag anything that could possibly be a vulnerability. Their incentive structure rewards coverage over accuracy. A scanner reporting 12 findings looks less capable on a comparison spreadsheet than one reporting 800, even if those 12 are the only real ones.

False-positive reduction hasn't been a design priority in legacy DAST because the tools were never built to validate. They were built to detect. Detection without validation produces noise. That noise is a feature of the architecture, not a bug in your setup.

Validation Changes the Equation

There's a different approach. Instead of flagging everything that matches a signature and leaving you to sort it out, validate each finding before reporting it.

Validation means the scanner doesn't just detect a potential SQL injection parameter. It attempts the injection. If data comes back that shouldn't, it's a confirmed vulnerability with proof. If the injection fails, it never appears in your report.
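One way to picture "attempts the injection" is a boolean-based differential check, sketched below. This is a swapped-in technique, not the UNION SELECT approach described in the next paragraph and not a description of any vendor's implementation. The target is modeled as a local function over an in-memory SQLite table so the sketch runs offline; `confirm_boolean_sqli`, `vulnerable_target`, and `safe_target` are hypothetical names.

```python
import sqlite3
from typing import Callable, Optional

# Hypothetical target model: a function from one parameter value to a response
# body. A real scanner would send HTTP requests; this stand-in runs offline.
Target = Callable[[str], str]

# In-memory table that the stand-in endpoints query.
DB = sqlite3.connect(":memory:")
DB.execute("CREATE TABLE products (name TEXT, price INTEGER)")
DB.execute("INSERT INTO products VALUES ('widget', 10)")


def vulnerable_target(value: str) -> str:
    # String concatenation: the input becomes part of the SQL statement.
    query = f"SELECT name, price FROM products WHERE name = '{value}'"
    return str(DB.execute(query).fetchall())


def safe_target(value: str) -> str:
    # Parameterized query: the input is only ever bound as data.
    query = "SELECT name, price FROM products WHERE name = ?"
    return str(DB.execute(query, (value,)).fetchall())


def confirm_boolean_sqli(target: Target, base_value: str) -> Optional[dict]:
    """Report a finding only if injected true/false conditions change the result.

    If appending an always-true condition leaves the response unchanged while an
    always-false condition changes it, the input is being evaluated as SQL.
    """
    baseline = target(base_value)
    true_payload = f"{base_value}' AND '1'='1"
    false_payload = f"{base_value}' AND '1'='2"
    true_resp = target(true_payload)
    false_resp = target(false_payload)
    if true_resp == baseline and false_resp != baseline:
        return {
            "payloads": [true_payload, false_payload],
            "evidence": {"baseline": baseline, "true": true_resp, "false": false_resp},
        }
    return None  # Not confirmed: nothing goes in the report.


print(confirm_boolean_sqli(vulnerable_target, "widget") is not None)  # True
print(confirm_boolean_sqli(safe_target, "widget"))  # None
```

Against the concatenated query, the always-false condition empties the result while the always-true one leaves it unchanged, so the finding is confirmed with both payloads kept as evidence. Against the parameterized query, the payloads are treated as literal product names and nothing is reported.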

That's the difference between "this parameter might be vulnerable" and "this parameter returns the users table when you inject a UNION SELECT."

Axeploit's agents work this way. When they find a potential vulnerability, they don't flag it and move on. They exploit it. They test whether the path traversal actually reads files outside the web root. They test whether the IDOR actually returns another user's data. They test whether the authentication bypass actually grants access.
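The IDOR test described above reduces to one question: does a request made as one user return another user's data? A minimal sketch, assuming a hypothetical in-memory invoice store and handler functions standing in for real HTTP endpoints. It illustrates the idea only and is not how Axeploit's agents are implemented.

```python
from typing import Callable, Optional

# Hypothetical billing data: invoice id -> (owner, content).
INVOICES = {
    101: ("alice", "alice: invoice total $120.00"),
    202: ("bob", "bob: invoice total $75.50"),
}

# A handler takes (authenticated user, requested invoice id) and returns a body.
Handler = Callable[[str, int], Optional[str]]


def vulnerable_get_invoice(user: str, invoice_id: int) -> Optional[str]:
    # Requires a logged-in user, but never checks who owns the invoice.
    record = INVOICES.get(invoice_id)
    return record[1] if record else None


def fixed_get_invoice(user: str, invoice_id: int) -> Optional[str]:
    record = INVOICES.get(invoice_id)
    # Ownership check: return data only to the invoice's owner.
    if record and record[0] == user:
        return record[1]
    return None


def confirm_idor(handler: Handler, attacker: str, victim_invoice: int,
                 victim_marker: str) -> Optional[dict]:
    """Request another user's object as the attacker and inspect the body.

    Only a response that actually contains the victim's data counts as
    confirmation; a non-empty reply without it is not enough.
    """
    response = handler(attacker, victim_invoice)
    if response is not None and victim_marker in response:
        return {"request": (attacker, victim_invoice), "response": response}
    return None


print(confirm_idor(vulnerable_get_invoice, "alice", 202, "bob:"))
print(confirm_idor(fixed_get_invoice, "alice", 202, "bob:"))  # None
```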

If the exploit fails, the finding doesn't exist. No theoretical findings. No version-number guesses. No "this could be a problem if conditions were different."

Every finding in the report includes the request that triggered it, the response that confirmed it, and the exact steps to reproduce. Not a dashboard of 800 maybes. A shortlist of confirmed risks with evidence attached.
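A report built this way can be modeled as records that carry their own evidence, with anything unconfirmed dropped before it reaches the report. The `Finding` fields, the endpoint path, and `build_report` below are hypothetical illustrations, not Axeploit's actual report schema.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    """Hypothetical report record; field names are illustrative only."""
    title: str
    request: str              # the request that triggered the behavior
    response: str             # the response that confirmed it
    repro_steps: list[str] = field(default_factory=list)
    confirmed: bool = False   # set only when an exploit attempt succeeded


def build_report(candidates: list[Finding]) -> list[Finding]:
    """Keep only findings that were confirmed and carry complete evidence."""
    return [
        f for f in candidates
        if f.confirmed and f.request and f.response and f.repro_steps
    ]


candidates = [
    Finding(
        title="IDOR on invoice download",
        request="GET /api/invoices/202 (session: alice)",  # hypothetical path
        response="200 OK, body contains bob's billing record",
        repro_steps=["Log in as alice", "Request /api/invoices/202",
                     "Observe bob's invoice in the response"],
        confirmed=True,
    ),
    Finding(
        title="Apache 2.4.49 path traversal (version match only)",
        request="GET /",
        response="Server: Apache/2.4.49",
        confirmed=False,
    ),
]

for finding in build_report(candidates):
    print(finding.title)  # IDOR on invoice download
```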

What a Clean Report Does for Your Team

When false-positive reduction is built into scanning rather than bolted on as a triage step, the workflow changes completely.

Your team doesn't spend the first week filtering noise. They start fixing on day one. Each finding has a proof-of-concept attached, so developers see exactly what's broken and how to reproduce it. No back-and-forth between security and engineering debating whether a finding is real.

Compliance evidence improves too. Auditors don't want 800 findings with 95% marked "accepted risk." They want a tested attack surface with validated results and documented proof.

Fewer findings. Higher confidence. Faster remediation. That's what your security posture actually needs.

Stop triaging scanner noise: https://panel.axeploit.com/signup