High-velocity development, often referred to as "vibe coding," leverages tools like Cursor and LLM-assisted workflows to push innovation at unprecedented speeds. However, this rapid prototyping introduces a critical vulnerability: security is frequently outpaced by deployment.

With the widespread adoption of prompt-driven development, the risk of deploying vulnerable code is compounding. LLMs naturally prioritize functional output over secure architecture, and the seamless nature of copy-paste workflows often bypasses critical human review. In this environment, foundational security knowledge is not optional. Understanding vulnerabilities like SQL Injection (SQLi) is mandatory for building production-ready systems.

The Mechanics of SQL Injection in an AI Context

At its core, SQL injection is a vulnerability that allows attackers to manipulate database queries by slipping malicious SQL through user inputs. This typically occurs when applications implicitly trust incoming data, concatenating user input directly into SQL query strings rather than passing it to the database as bound parameters.

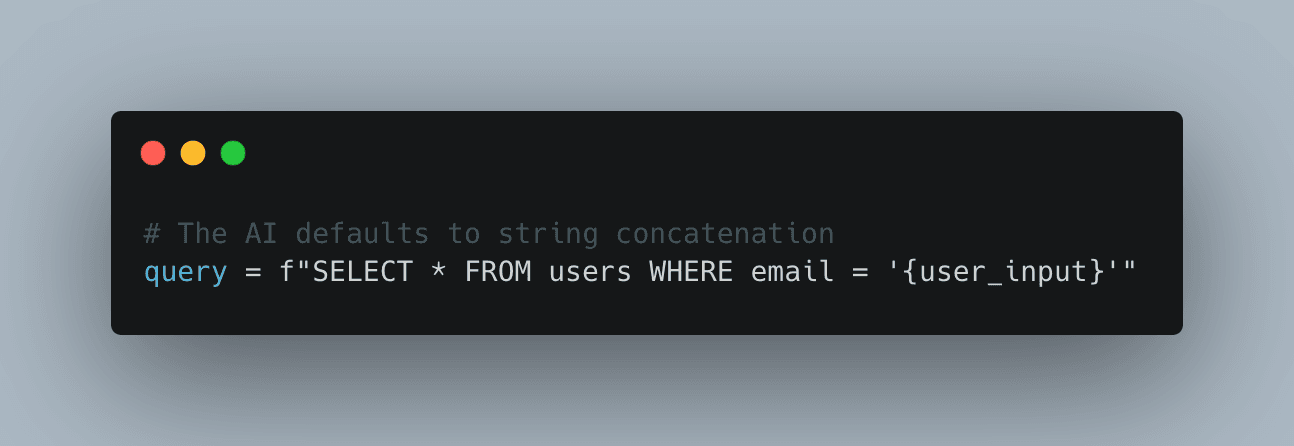

The danger of AI-generated code is that it often defaults to these easily exploitable patterns. Because tools like Cursor lack an intrinsic understanding of your application’s specific threat model, a vague prompt like "Write a SQL login function" will frequently yield insecure, baseline code.

Vulnerable AI-Generated Example:
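
A minimal sketch of the kind of code such a prompt tends to yield, assuming Python's built-in sqlite3 module. The `users` table, the `make_demo_db` helper, and the `insecure_login` function are hypothetical names chosen for illustration, and passwords are stored in plain text only to keep the demo short.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT, password TEXT)"
    )
    # Plain-text passwords are for demonstration only.
    conn.executemany(
        "INSERT INTO users (username, password) VALUES (?, ?)",
        [("alice", "correct-horse"), ("bob", "hunter2")],
    )
    return conn


def insecure_login(conn: sqlite3.Connection, username: str, password: str) -> bool:
    # VULNERABLE: user input is concatenated straight into the SQL text,
    # so quotes in the input can change the structure of the query.
    query = (
        "SELECT * FROM users WHERE username = '" + username
        + "' AND password = '" + password + "'"
    )
    return conn.execute(query).fetchone() is not None


conn = make_demo_db()
print(insecure_login(conn, "alice", "wrong-password"))  # normal failed login
print(insecure_login(conn, "alice", "' OR '1'='1"))     # injected condition
```

With the injected value, the query text ends in `OR '1'='1'`, which is true for every row, so the function reports a successful login without a valid password.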

If an attacker provides ' OR '1'='1 as the input, the injected condition is always true, so the resulting query matches every user record and authentication is bypassed completely.

To properly audit generated code, developers must understand the various ways attackers weaponize these flaws. Classic (In-band) injections are the most common and give the attacker immediate database feedback. Threat actors also use Blind and Time-Based injections to infer data through application behavior or intentional execution delays. In more complex scenarios, Union-Based injections (a form of in-band attack) combine queries to extract restricted data, while Second-Order injections store the malicious input so that it is executed later, for example by a background job.
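
To make two of these variants concrete for auditing purposes, here is a small sketch against a deliberately vulnerable search function, again assuming Python's sqlite3 module. The `products` and `users` tables, `insecure_search`, and `page_has_results` are hypothetical. Time-based and second-order attacks are not shown.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    CREATE TABLE products (name TEXT, price REAL);
    CREATE TABLE users (username TEXT, password TEXT);
    INSERT INTO products VALUES ('widget', 9.99);
    INSERT INTO users VALUES ('admin', 's3cret');
    """
)


def insecure_search(term: str) -> list:
    # VULNERABLE on purpose: the search term is interpolated into the SQL text.
    query = f"SELECT name, price FROM products WHERE name = '{term}'"
    return conn.execute(query).fetchall()


def page_has_results(term: str) -> bool:
    # Stand-in for an application that only reveals "found" or "not found".
    return len(insecure_search(term)) > 0


# Union-based: append a second SELECT with the same column count so rows
# from another table come back in the normal result set.
union_payload = "x' UNION SELECT username, password FROM users --"
leaked_rows = insecure_search(union_payload)

# Boolean-based blind: ask a yes/no question about hidden data and watch
# whether the page still shows results.
guess_s = "widget' AND (SELECT substr(password, 1, 1) FROM users WHERE username = 'admin') = 's' --"
guess_x = "widget' AND (SELECT substr(password, 1, 1) FROM users WHERE username = 'admin') = 'x' --"
first_char_is_s = page_has_results(guess_s)
first_char_is_x = page_has_results(guess_x)
```

In the blind case, the attacker never sees the password directly. Repeating the yes/no probe for each character position is enough to reconstruct it, which is why a page that shows no database output can still leak data.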

Defensive Architecture: Securing the Codebase

Securing applications against SQLi requires a defense-in-depth approach. You cannot rely on the AI to implement the following controls by default; they must be actively enforced during code review and refactoring.

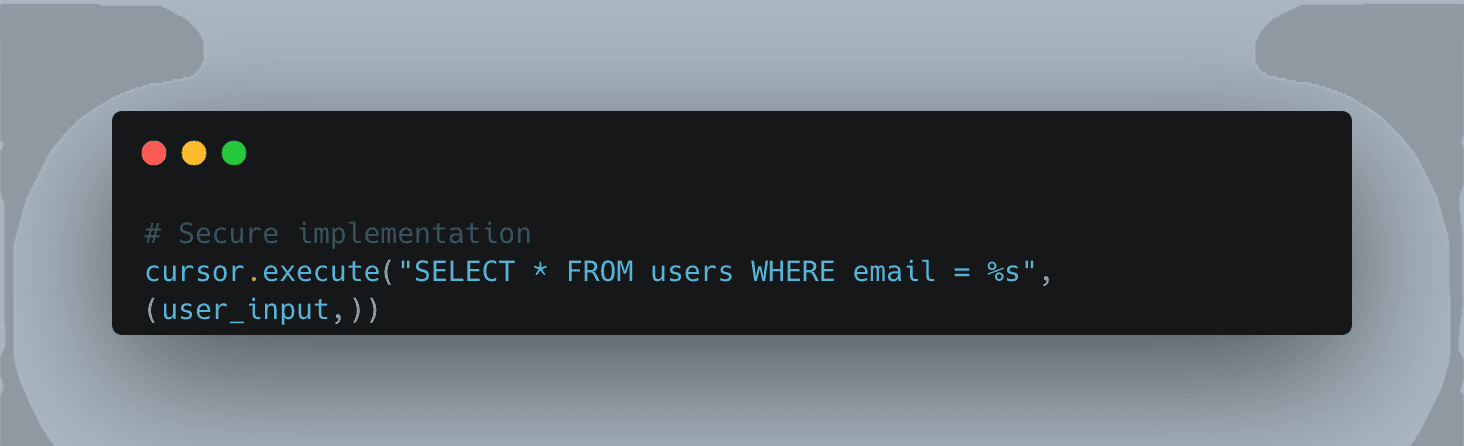

- Enforce Parameterized Queries (Critical): This is the most effective defense against SQLi. Parameterization ensures the database treats user input strictly as literal data, never as executable code.

- Eliminate String Concatenation: Systematically reject any code that constructs database queries by concatenating raw strings with user variables.

- Strict Input Validation: Enforce expected data formats (e.g., validating that an email follows standard syntax or an ID is strictly numeric). Always prefer strict allowlists over blocklists.

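- Leverage ORMs Safely: Object-Relational Mappers (like Prisma or SQLAlchemy) handle parameterization under the hood, heavily reducing risk. However, they still expose raw-query escape hatches (for example, Prisma's $queryRawUnsafe, or SQLAlchemy's text() when the SQL string is built with string formatting), so audit the codebase to ensure these are not fed user input directly.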

- Apply the Principle of Least Privilege: Ensure the database credentials used by your application only have the minimum permissions necessary. An application should never query the database using administrative privileges.
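
The first three items above can be sketched together as follows, assuming Python's sqlite3 module and its `?` placeholder style. The `users` table, the username policy in `USERNAME_RE`, and the function names are hypothetical; other drivers use different placeholder syntax.

```python
import re
import sqlite3

# Allowlist policy (an assumption for this sketch): 3 to 32 letters,
# digits, or underscores. Anything else is rejected before the query runs.
USERNAME_RE = re.compile(r"[A-Za-z0-9_]{3,32}")


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.execute("INSERT INTO users (username) VALUES (?)", ("alice",))
    return conn


def fetch_user(conn: sqlite3.Connection, username: str):
    # Parameterized query: the SQL text is fixed, and the value is bound
    # separately, so the database treats it purely as data.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchone()


def lookup_user(conn: sqlite3.Connection, username: str):
    # Validation is a second layer on top of parameterization, not a
    # replacement for it.
    if USERNAME_RE.fullmatch(username) is None:
        raise ValueError("username fails allowlist validation")
    return fetch_user(conn, username)


conn = make_demo_db()
print(lookup_user(conn, "alice"))        # legitimate lookup
print(fetch_user(conn, "' OR '1'='1"))   # payload is compared as a literal string
```

Even without validation, the bound payload matches no row because it is compared as an ordinary string. With validation in place, the same payload is rejected before the database is touched.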

Secure Prompting Practices

Mitigating AI-generated vulnerabilities starts at the prompt level. The security of the generated code depends heavily on the constraints you define. Treating security as an explicit requirement steers the LLM toward a safer baseline, although it does not replace review.

Instead of asking the AI to simply "fetch user data," establish a structured, security-first workflow:

- Explicit Security Directives: Use targeted prompts like, "Write a secure SQL query using parameterized inputs and strict validation to prevent SQL injection."

- Establish Tool-Level Guardrails: If your AI assistant allows for global rules (like Cursor's "Rules for AI"), explicitly state: "Never generate SQL using string concatenation. Always use prepared statements."

- Mandatory Human Review: Treat AI output as untrusted third-party code. Before merging, verify that inputs are not embedded directly in queries and that proper validation logic is present.
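
Part of the review step can be supported by a small script. The sketch below uses Python's standard `ast` module to flag `execute`-style calls whose first argument is built with an f-string, `+`, `%`, or `.format()`. The function names and the list of method names are assumptions for this example. It is a heuristic aid for a human reviewer, not a substitute for one: it misses queries assembled in a variable before the call and may flag harmless concatenation of constants.

```python
import ast

# Method names treated as "runs SQL" in this sketch (an assumption).
SQL_METHODS = {"execute", "executemany", "executescript"}


def _is_dynamic_string(node: ast.AST) -> bool:
    """Return True if the expression assembles a string at runtime."""
    if isinstance(node, ast.JoinedStr):  # f-string with interpolated values
        return any(isinstance(v, ast.FormattedValue) for v in node.values)
    if isinstance(node, ast.BinOp) and isinstance(node.op, (ast.Add, ast.Mod)):
        return True  # "..." + value  or  "... %s" % value
    if (
        isinstance(node, ast.Call)
        and isinstance(node.func, ast.Attribute)
        and node.func.attr == "format"
    ):
        return True  # "...{}".format(value)
    return False


def find_suspect_queries(source: str) -> list[int]:
    """Return line numbers of SQL calls whose query argument is built dynamically."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr in SQL_METHODS
            and node.args
            and _is_dynamic_string(node.args[0])
        ):
            hits.append(node.lineno)
    return sorted(hits)


sample = (
    "cur.execute(f\"SELECT * FROM users WHERE name = '{name}'\")\n"
    "cur.execute('SELECT * FROM users WHERE name = ?', (name,))\n"
    "cur.execute('SELECT * FROM t WHERE id = %s' % uid)\n"
)
print(find_suspect_queries(sample))  # [1, 3]
```

Lines 1 and 3 of the sample are flagged because the query text is assembled from variables. Line 2 passes because the value travels as a bound parameter.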

SQL injection is not a legacy web issue; it is a modern risk actively amplified by AI-assisted development. While speed is the primary advantage for today's developers, that velocity quickly becomes a massive liability without a rigorous, security-aware workflow.