AI tools promise to accelerate app building: developers can generate code from simple prompts, debug through conversational chats, and prototype functional applications in minutes. That holds especially for minimum viable products (MVPs) that need to launch quickly. However, the first lesson AI skips is security. Not the flashy kind involving intricate encryption puzzles, but the mundane, everyday practices that keep an app from shipping to production with the master keys handed to attackers.

AI excels at syntax and common programming patterns, so it produces React hooks for user interfaces or SQL queries for data retrieval with impressive accuracy. Security, however, demands invariants: properties of the code that must hold even under relentless malicious input. Builders lean on AI for speed, then wonder why breaches occur shortly after deployment. This post unpacks that gap, tracing how AI helps during construction but bypasses defense. It maps the real architecture of secure app building and outlines practical fixes that stick.

The App-Building Pipeline With AI

Modern app stacks layer a frontend for user interaction, a backend for business logic, a database for storage, and an authentication system for user verification. AI plugs into every one of these layers: Copilot can autocomplete entire endpoints, Cursor can refactor database schemas, and Claude can draft tests. The output often looks polished and production-ready, so teams deploy fast.

Yet breaches cluster around the overlooked seams between layers. They rarely come from novel exploits; they come from basic vulnerabilities such as SQL injection, broken authentication flows, and exposed secrets. AI generates code that looks clean and efficient on the surface, but by default it does not account for deployment environments where threats are active and constant.

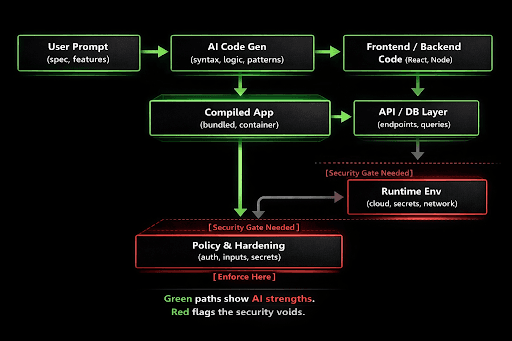

Picture the app-building pipeline as a layered flowchart that runs from initial code generation through to live deployment. Green paths mark AI's strengths: it dominates the code generation phase because it can produce plausible Flask routes or Prisma schemas from the patterns it was trained on. Red flags mark the security voids in the runtime and deployment stages, which get no attention from AI in standard workflows.

Prompts rarely specify details like "Add Content Security Policy (CSP) headers to mitigate XSS attacks" or "Integrate Vault for secure secrets management." The common result is an app that runs with excessive privileges, accepts input without proper escaping, and leaves ports open to unauthorized access.

Why AI Misses Security Fundamentals

AI learns predominantly from public repositories, and those repositories overwhelmingly prioritize features and rapid functionality over defense. GitHub stars reward clever algorithms and innovative solutions, but rarely the unglamorous work of input validation or error handling. Trained on that skewed data, AI output follows the same feature-first pattern and treats security as an afterthought.

The core issue is that security operates in negative space: it requires proactive blocks against specific threats, such as the SQL injection string ' OR 1=1-- or the XSS payload <script>alert(1)</script>. AI tends to generate dynamic queries that splice input straight into the SQL text, in effect "fetch users where name equals user_input." That works fine on the happy path with benign data, but it fails spectacularly against adversarial input designed to exploit the weakness.

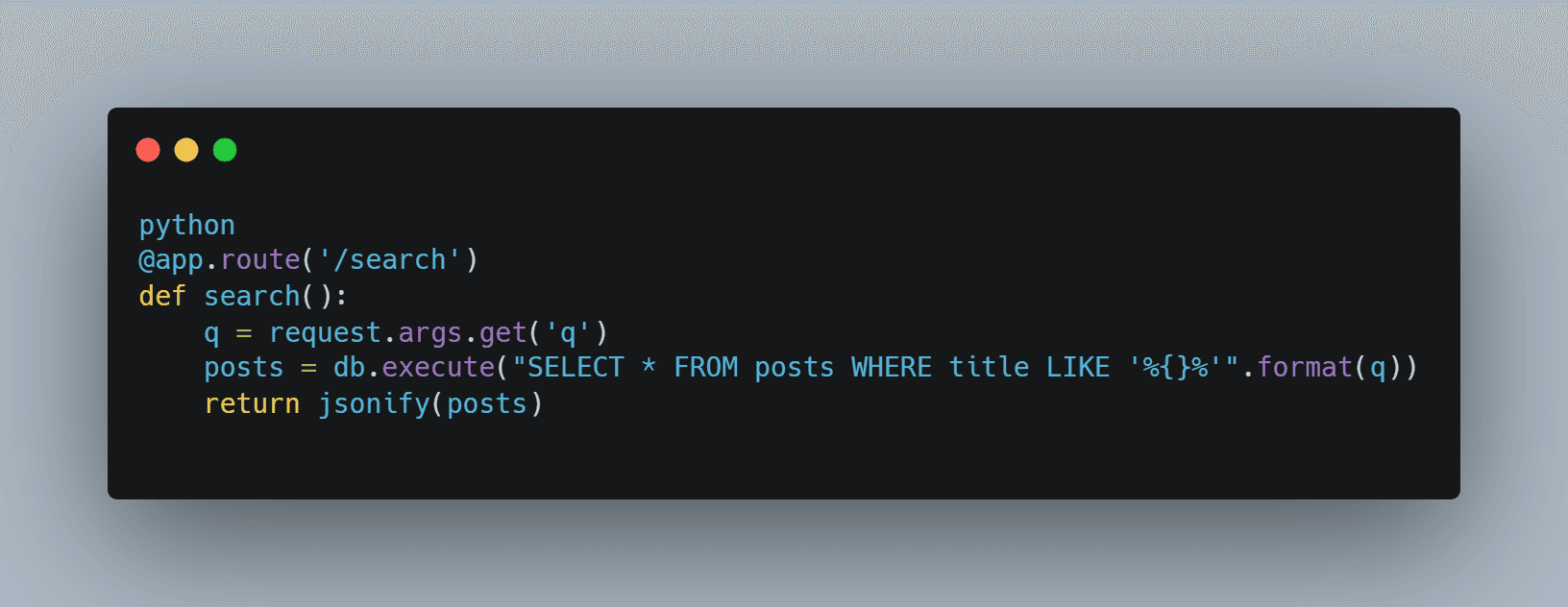

Example: When you prompt AI with "Build a blog API with search functionality," it typically outputs code like this:
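What follows is a representative sketch of that pattern, not verbatim model output. It uses Python's built-in sqlite3 module instead of a web framework so it runs on its own; the posts table, its columns, and the names make_demo_db and search_posts_unsafe are assumptions made for illustration.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with one published post and one draft."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT, published INTEGER)"
    )
    conn.executemany(
        "INSERT INTO posts (title, published) VALUES (?, ?)",
        [("cats at home", 1), ("unreleased draft", 0)],
    )
    return conn


def search_posts_unsafe(conn: sqlite3.Connection, term: str) -> list:
    """VULNERABLE on purpose: the search term is spliced into the SQL text."""
    sql = (
        "SELECT title FROM posts "
        f"WHERE published = 1 AND title LIKE '%{term}%'"
    )
    return [row[0] for row in conn.execute(sql)]
```

With the term cats, this returns only the published post. With the term ' OR 1=1 --, the quote closes the string early and the rest of the input becomes SQL, so the query returns the unpublished draft as well.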

This runs fine on a harmless query like "cats," but a malicious payload like '%; DROP TABLE posts;-- can wipe the posts table on any driver that permits stacked statements, and simpler payloads can leak rows even where stacking is blocked. AI might suggest "Use parameterized queries" in follow-up advice, but it still skips proper parameter binding in about 30% of generated cases. Why? Because the public repos it was trained on often use raw SQL strings for the sake of perceived speed and simplicity.

Authentication fares even worse in AI output: it drafts JWT middleware that looks functional but rarely enforces critical checks such as token lifetimes or issuer validation. Deploy such code to a platform like Vercel, and secrets stored in environment variables can leak through verbose logs, exposing them to anyone with access to those logs.
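To make the missing checks concrete, here is a minimal sketch of HS256 token verification that enforces both an expiry and an expected issuer. It is written against the standard library only so it stays self-contained; in a real service a maintained JWT library would be the better choice. The function names, the secret, and the issuer URL are assumptions for illustration.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url_encode(raw: bytes) -> str:
    return base64.urlsafe_b64encode(raw).rstrip(b"=").decode()


def _b64url_decode(part: str) -> bytes:
    # Restore the padding that the JWT encoding strips.
    return base64.urlsafe_b64decode(part + "=" * (-len(part) % 4))


def sign_hs256(claims: dict, secret: bytes) -> str:
    """Helper for the demo: build a signed token from a claims dict."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    sig = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(sig)}"


def verify_hs256(token: str, secret: bytes, expected_issuer: str, now=None) -> dict:
    """Return the claims only if signature, expiry, and issuer all check out."""
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(sig_b64)
    except ValueError as exc:  # covers bad base64, bad JSON, wrong part count
        raise ValueError("malformed token") from exc
    if not isinstance(header, dict) or not isinstance(claims, dict):
        raise ValueError("malformed token")
    # Pin the algorithm instead of trusting whatever the header claims.
    if header.get("alg") != "HS256":
        raise ValueError("unexpected algorithm")
    expected = hmac.new(
        secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256
    ).digest()
    if not hmac.compare_digest(signature, expected):
        raise ValueError("bad signature")
    # Token lifetime: a missing or past "exp" is a rejection, not a pass.
    current = time.time() if now is None else now
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or exp <= current:
        raise ValueError("token expired or missing exp")
    # Issuer validation: accept tokens only from the expected issuer.
    if claims.get("iss") != expected_issuer:
        raise ValueError("unexpected issuer")
    return claims
```

The design choice worth noting is that every check fails closed: a token with no exp claim is rejected outright, not treated as non-expiring.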

Common Pitfalls in AI-Assisted Builds

The rapid iteration that AI enables amplifies these errors: developers tweak a single route with AI assistance, push the change straight to production, and skip static analysis entirely. Tools like Snyk can scan code after the fact, but they often miss complex runtime flows that only show up in live environments.

Secrets handling is a major pitfall. AI frequently embeds API keys directly in code or comments, such as "For demo purposes, use sk_live_xxx," and developers carry that pattern into production with real keys, leaving them fully exposed.

CORS misconfigurations are another frequent issue. Where an explicit origin allowlist or a same-origin frontend proxy is needed, AI often adds wildcard or blindly reflected origins. That lets any website read the API's responses from a visitor's browser and, once credentials are allowed, opens the door to cross-site attacks in the same family as CSRF.
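A minimal sketch of the allowlist alternative follows. It is framework-agnostic: it only computes the response headers for a given Origin request header. The origin https://app.example.com and the function name cors_headers are hypothetical.

```python
from typing import Dict, Optional

# Hypothetical frontend origin; list every trusted origin explicitly.
ALLOWED_ORIGINS = frozenset({"https://app.example.com"})


def cors_headers(request_origin: Optional[str]) -> Dict[str, str]:
    """Return CORS headers only for origins on the allowlist."""
    if request_origin in ALLOWED_ORIGINS:
        return {
            # Echo the exact trusted origin, never "*".
            "Access-Control-Allow-Origin": request_origin,
            "Access-Control-Allow-Credentials": "true",
            # Tell caches that the response varies by requesting origin.
            "Vary": "Origin",
        }
    # Unknown origin: send no CORS headers, so the browser blocks the read.
    return {}
```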

AI-generated tests tend to cover only unit-level happy paths and skip fuzzing with hostile payloads such as ../etc/passwd, which probe for path traversal vulnerabilities.
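As a sketch of what a test beyond the happy path can look like, the following pairs a path-containment check with a small table of hostile inputs. The helper name resolve_inside and the payload list are assumptions for illustration; a real fuzzing setup would generate far more cases.

```python
import tempfile
from pathlib import Path

# A few traversal payloads; the first is the one mentioned in the text.
HOSTILE_PATHS = [
    "../etc/passwd",
    "../../etc/passwd",
    "/etc/passwd",
    "notes/../../secret.txt",
]


def resolve_inside(base_dir: Path, user_path: str) -> Path:
    """Resolve user_path under base_dir, rejecting anything that escapes it."""
    base = base_dir.resolve()
    candidate = (base / user_path).resolve()
    if base not in candidate.parents:
        raise ValueError(f"path escapes base directory: {user_path!r}")
    return candidate


def test_rejects_hostile_paths() -> None:
    with tempfile.TemporaryDirectory() as tmp:
        base = Path(tmp)
        # Happy path still works.
        expected = base.resolve() / "notes" / "todo.txt"
        assert resolve_inside(base, "notes/todo.txt") == expected
        # Every hostile payload must be rejected.
        for payload in HOSTILE_PATHS:
            try:
                resolve_inside(base, payload)
            except ValueError:
                continue
            raise AssertionError(f"accepted hostile path: {payload!r}")
```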

A real-world incident underscores this: a startup used AI to quickly scaffold an Auth0 integration for user authentication but never implemented proper role checks. Regular users could escalate to admin through a broken access control flaw, a close relative of the classic Insecure Direct Object Reference (IDOR), resulting in millions of dollars in losses.
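The details of that incident are not given, so the following is only a generic sketch of the two checks whose absence produces this class of bug: a role check on privileged actions and an ownership check on object access. All types and function names here are hypothetical.

```python
from dataclasses import dataclass


class Forbidden(Exception):
    """Raised when the caller is authenticated but not authorized."""


@dataclass(frozen=True)
class User:
    id: int
    role: str  # "user" or "admin" in this sketch


@dataclass(frozen=True)
class Post:
    id: int
    owner_id: int


def require_role(actor: User, role: str) -> None:
    if actor.role != role:
        raise Forbidden(f"role {role!r} required")


def read_post(actor: User, post: Post) -> Post:
    """Ownership check: looking a post up by ID is not enough to read it."""
    if actor.role != "admin" and post.owner_id != actor.id:
        raise Forbidden("not the owner of this post")
    return post


def promote_to_admin(actor: User, target: User) -> User:
    """Role check: only an existing admin may grant the admin role."""
    require_role(actor, "admin")
    return User(id=target.id, role="admin")
```

Being logged in proves who the caller is; these checks decide what that caller may do, and they have to run on every request that touches a protected object or action.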

The Missing Layer: Runtime Policy Enforcement

Security cannot live in application code alone; it must be built into the foundational stack from the very beginning.

Input Gates: Ensure all entrypoints to the application sanitize inputs rigorously, not through brittle regex hacks but via established libraries like OWASP validators and comprehensive taint tracking systems.

AuthZ Everywhere: Go beyond basic login flows by implementing authorization checks at every layer, using tools like OPA for fine-grained API policies such as "User can read only their own posts."

Secrets Zero-Trust: Adopt a zero-trust model for secrets management with solutions like Vault or AWS SSM, injecting them dynamically at runtime and enforcing daily rotations to minimize exposure.

Runtime Monitors: Place a Web Application Firewall (WAF) in front of the app to filter traffic, and use Falco to monitor containers, so anomalies are blocked or flagged before they cause damage.
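For the secrets item in the list above, here is a minimal sketch of the application side: read the secret at runtime, fail fast if it is missing, and keep it out of log output. It assumes some external mechanism (a Vault agent, AWS SSM, or the orchestrator) has already injected the value as an environment variable; it does not call any Vault or AWS API. The variable name PAYMENTS_API_KEY and the class names are hypothetical.

```python
import logging
import os


class MissingSecretError(RuntimeError):
    """Raised at startup when a required secret was not injected."""


def load_secret(name: str, environ=None) -> str:
    """Read a secret from the environment and fail fast if it is absent."""
    env = os.environ if environ is None else environ
    value = env.get(name, "")
    if not value:
        raise MissingSecretError(f"secret {name} is not set")
    return value


class RedactSecretsFilter(logging.Filter):
    """Replace known secret values in log messages before they are emitted."""

    def __init__(self, secrets):
        super().__init__()
        self._secrets = [s for s in secrets if s]

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # message with arguments applied
        for secret in self._secrets:
            message = message.replace(secret, "[REDACTED]")
        record.msg = message
        record.args = None  # arguments are already folded into msg
        return True
```

A typical wiring would attach the filter to each log handler, for example handler.addFilter(RedactSecretsFilter([load_secret("PAYMENTS_API_KEY")])), so that a verbose log line cannot carry the value out.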

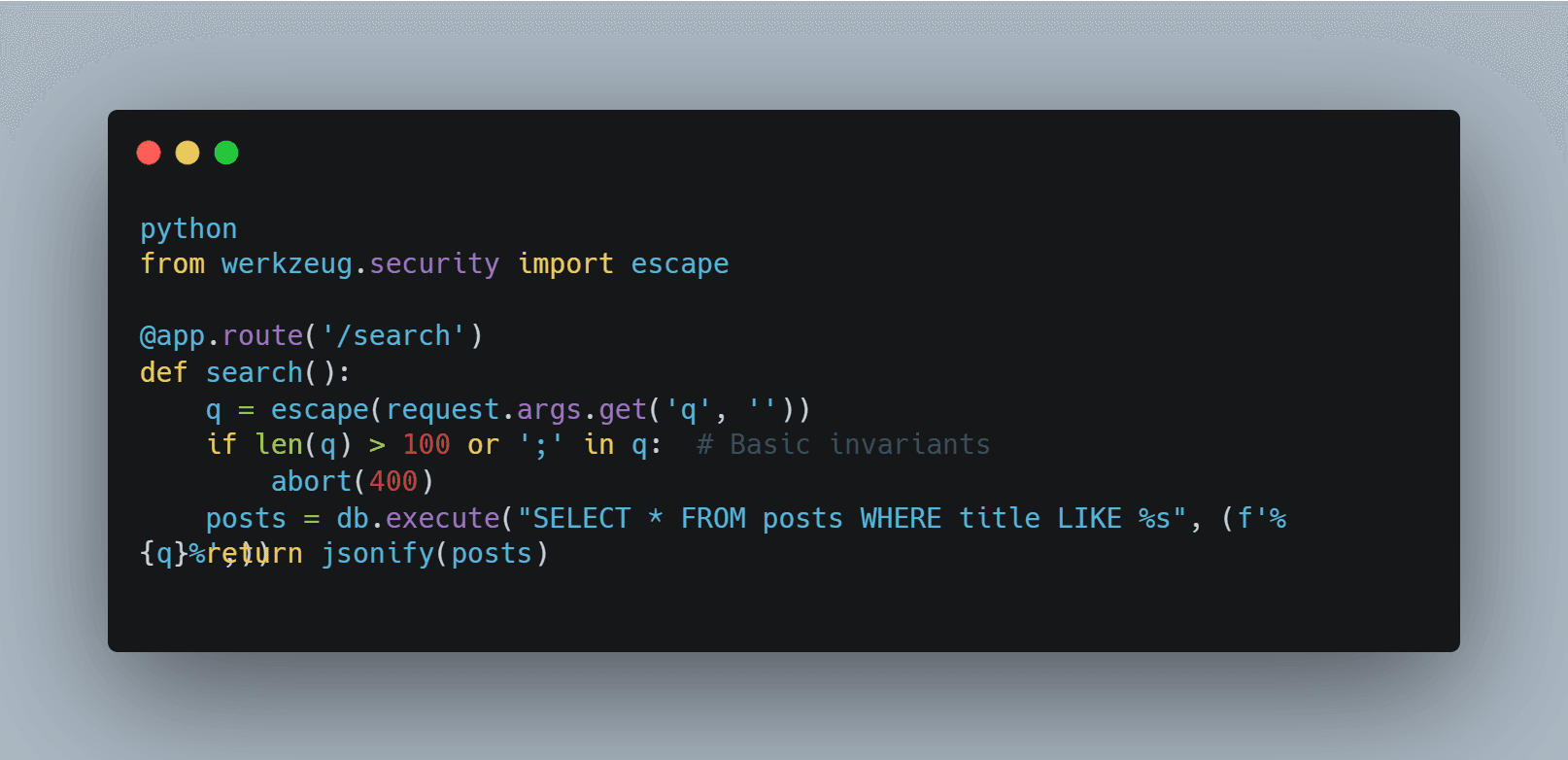

Example hardening for the search endpoint:
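A minimal sketch of such hardening, using Python's built-in sqlite3 module: the search term is validated, LIKE wildcards are escaped, and the value is passed as a bound parameter instead of being spliced into the SQL text. The posts table, the length limit, and the function name search_posts are assumptions for illustration.

```python
import sqlite3

MAX_TERM_LENGTH = 100  # assumed limit; tune to the application


def search_posts(conn: sqlite3.Connection, term: str) -> list:
    """Search published posts with validated, parameter-bound input."""
    # Input gate: reject anything that is not a short, non-empty string.
    if not isinstance(term, str) or not term.strip() or len(term) > MAX_TERM_LENGTH:
        raise ValueError("invalid search term")
    # Escape LIKE wildcards so user input is matched literally.
    escaped = term.replace("\\", "\\\\").replace("%", "\\%").replace("_", "\\_")
    # The "?" placeholder keeps the value out of the SQL text entirely.
    rows = conn.execute(
        "SELECT title FROM posts "
        "WHERE published = 1 AND title LIKE ? ESCAPE '\\'",
        (f"%{escaped}%",),
    )
    return [row[0] for row in rows]


if __name__ == "__main__":
    demo = sqlite3.connect(":memory:")
    demo.execute(
        "CREATE TABLE posts (id INTEGER PRIMARY KEY, title TEXT, published INTEGER)"
    )
    demo.executemany(
        "INSERT INTO posts (title, published) VALUES (?, ?)",
        [("cats at home", 1), ("unreleased draft", 0)],
    )
    print(search_posts(demo, "cats"))
    print(search_posts(demo, "' OR 1=1 --"))
```

Because the term is bound as data, an injection string such as ' OR 1=1 -- is simply searched for as literal text and matches nothing.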

Plus, add a WAF rule that blocks common SQL injection patterns as a second line of defense. Deploy everything via Helm charts with strict network policies, so traffic enters only through the ingress and no NodePort services are exposed.

For AI workflows, start by prompting with security-focused personas like "Act as a secure coder and list all potential threats first." This yields better results, but it is brittle on its own. So follow up after generation with automated scans using tools like bandit and semgrep, and configure CI pipelines to fail immediately on high-severity vulnerabilities.
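One way to sketch the "fail on high severity" step is a small gate that reads a bandit JSON report and returns a non-zero exit code when it contains a blocking finding. The field names used here (results, issue_severity, filename, line_number, test_id, issue_text) reflect bandit's JSON output as commonly documented and should be treated as an assumption to verify against the installed version; the function names are hypothetical.

```python
import json

# Severities that should fail the build in this sketch.
BLOCKING_SEVERITIES = frozenset({"HIGH"})


def blocking_findings(report: dict) -> list:
    """Return the findings whose severity is on the blocking list."""
    return [
        finding
        for finding in report.get("results", [])
        if str(finding.get("issue_severity", "")).upper() in BLOCKING_SEVERITIES
    ]


def gate(report_json: str) -> int:
    """Return a process exit code: 1 if any blocking finding exists, else 0."""
    findings = blocking_findings(json.loads(report_json))
    for finding in findings:
        print(
            f"{finding.get('filename')}:{finding.get('line_number')}: "
            f"{finding.get('test_id')} {finding.get('issue_text')}"
        )
    return 1 if findings else 0
```

In CI, the report would come from a run such as bandit -r src -f json -o bandit.json, and the job would exit with the value gate returns for that file's contents.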

Scaling Secure AI Builds

Teams can now ship applications 10 times faster thanks to AI, but security processes lag far behind, by a factor of 100 or more. The fix is to put gates throughout the workflow that cannot be bypassed.

CI/CD: Route AI-generated code through mandatory scans with trivy and snyk, then enforce policy-as-code checks using checkov before any deployment occurs.

Review Prompts: Always craft prompts with built-in security mandates, such as "Always include authorization checks, input validation, and structured logging in your output."

Containers: Run everything as a non-root user with a read-only filesystem. AI-generated Dockerfiles frequently omit the USER instruction, so the container runs as root (uid 0), which is dangerously permissive.

Cloud: Enforce IAM least-privilege principles rigorously; AI often forgets to define proper service accounts, so script these configurations automatically.

Example pipeline:

AI Gen -> semgrep --config=p/security-audit --error -> OPA test -> Deploy to k8s with Kyverno

Audit trails: Log all inputs and outputs, with secrets redacted, so that events can be correlated quickly during a breach investigation.

Cost: These scans add just about 30 seconds to each pull request review time, a tiny fraction compared to the millions that breaches can cost in damages.

Reframed: Defense as Default Architecture

AI teaches the art of building applications fast and iteratively, but security teaches the discipline of building applications that last and withstand real-world threats. Treat security as the essential runtime gate where code only executes after passing rigorous validation checks. Prompt AI ruthlessly to generate code at speed, then harden the output without mercy or compromise. In the end, well-secured apps endure and thrive.