Most vulnerability scanners sold today still run on the same architecture designed fifteen years ago. They crawl your site, replay a list of known attack signatures, and hand you a PDF full of findings you need to sort through yourself.

That approach is called DAST, short for Dynamic Application Security Testing. It worked well enough when web applications were simpler, release cycles were quarterly, and every company had a security team to babysit the tooling.

None of those things are true anymore.

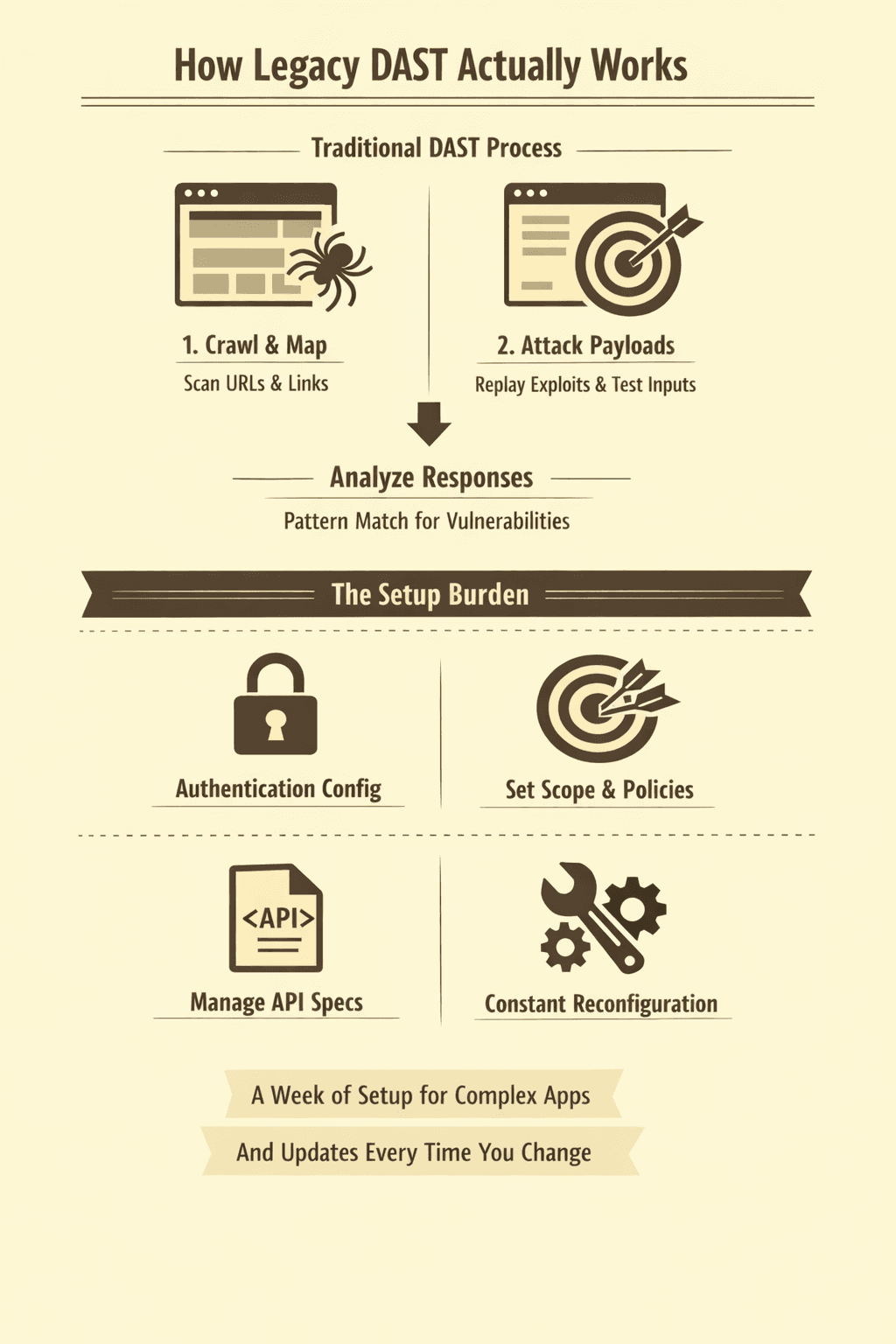

How Legacy DAST Actually Works

A traditional DAST scanner follows a fixed process. It starts by crawling your application, clicking links, and mapping URLs. Then it replays a library of known attack payloads against the inputs it discovered. SQL injection strings, XSS payloads, directory traversal sequences. Each payload is pattern-matched against expected server responses to flag potential issues.
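The replay-and-match step can be sketched in a few lines. This is a simplified illustration, not any vendor's engine: the `Signature` class, the three-entry `SIGNATURES` list, and the `fake_vulnerable_endpoint` stand-in for an HTTP request are all hypothetical names chosen for the example.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Signature:
    name: str
    payload: str            # string sent to the input
    response_pattern: str   # regex that flags a "hit" in the response body


# Hypothetical, deliberately tiny signature library (real ones hold thousands).
SIGNATURES = [
    Signature("sql-injection", "' OR '1'='1", r"SQL syntax|sqlite3\.OperationalError"),
    Signature("reflected-xss", "<script>alert(1)</script>", r"<script>alert\(1\)</script>"),
    Signature("path-traversal", "../../etc/passwd", r"root:.*:0:0:"),
]


def scan_input(send: Callable[[str], str]) -> list[str]:
    """Replay every payload through `send` and pattern-match each response."""
    findings = []
    for sig in SIGNATURES:
        body = send(sig.payload)
        if re.search(sig.response_pattern, body):
            findings.append(sig.name)
    return findings


def fake_vulnerable_endpoint(payload: str) -> str:
    """Stand-in for an HTTP request: echoes the input without escaping it."""
    return f"<p>Results for {payload}</p>"


print(scan_input(fake_vulnerable_endpoint))
```

Anything the library has no entry for goes undetected, no matter how the application responds.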

This sounds reasonable until you look at what it requires from you.

You need to configure authentication so the scanner can reach anything behind a login page. You need to define scope so it doesn't hammer third-party services or your production database. You need to set up scan policies, choose which attack categories to enable, and maintain an API specification if you want coverage beyond what the crawler discovers on its own.
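To make that checklist concrete, here is a rough sketch of what a scan configuration has to capture. The `ScanConfig` fields and the `in_scope` helper are assumptions made for illustration; real tools each use their own configuration format.

```python
from dataclasses import dataclass, field
from typing import Optional
from urllib.parse import urlparse


@dataclass
class ScanConfig:
    # Every field here is something a person has to fill in and keep current.
    allowed_hosts: set[str]
    excluded_path_prefixes: tuple[str, ...] = ()
    attack_categories: set[str] = field(default_factory=lambda: {"sqli", "xss"})
    login_url: Optional[str] = None       # where the recorded login starts
    api_spec_path: Optional[str] = None   # spec file for endpoints the crawler misses


def in_scope(url: str, config: ScanConfig) -> bool:
    """Return True only for URLs the scanner is allowed to attack."""
    parsed = urlparse(url)
    if parsed.hostname not in config.allowed_hosts:
        return False
    return not any(parsed.path.startswith(p) for p in config.excluded_path_prefixes)


config = ScanConfig(
    allowed_hosts={"app.example.com"},
    excluded_path_prefixes=("/admin/billing",),
    login_url="https://app.example.com/login",
)
print(in_scope("https://app.example.com/api/users", config))
print(in_scope("https://payments.thirdparty.example/charge", config))
```

A new subdomain, a renamed route, or a moved login page each means another edit to this file.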

For a modern application with 60+ API endpoints, that initial setup can take a week of engineering time. And every time your application changes, the configuration needs to catch up.

The Parts That Break Quietly

Signature-based scanning has a shelf life. Legacy DAST tools rely on a curated library of known attack patterns. When a new vulnerability class emerges, someone needs to write a detection rule, test it, and push it to your scanner. That lag can be weeks or months. Meanwhile, your application is exposed to attacks the scanner doesn't know about yet.

Then there's the crawler problem. Traditional crawlers follow links and submit forms. They don't understand JavaScript-heavy SPAs. They can't navigate multi-step workflows that require specific sequences of actions. They miss API endpoints that aren't linked from any page. If your frontend is built with React, Vue, or any modern framework, a basic crawler sees a fraction of your actual attack surface.
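A minimal sketch of the blind spot, using only Python's standard-library HTML parser. The two sample pages are made up for the example: one is server-rendered, the other is the kind of near-empty shell a single-page app typically serves before its JavaScript runs.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects href values from anchor tags, as a basic crawler would."""

    def __init__(self) -> None:
        super().__init__()
        self.links: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def extract_links(html: str) -> list[str]:
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


# Hypothetical server-rendered page: links are present in the HTML itself.
STATIC_PAGE = (
    '<html><body><a href="/login">Log in</a> '
    '<a href="/search?q=test">Search</a></body></html>'
)

# Hypothetical SPA shell: routes and API calls only exist after the bundle runs.
SPA_SHELL = (
    '<html><body><div id="root"></div>'
    '<script src="/static/bundle.js"></script></body></html>'
)

print(extract_links(STATIC_PAGE))
print(extract_links(SPA_SHELL))
```

The parser never executes `bundle.js`, so every route and API call defined there stays invisible to it.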

Authentication is another failure point. Most legacy tools need you to record a login macro or provide session tokens. Those tokens expire. The macro breaks when you redesign your login flow. Some tools can't handle MFA, OAuth flows, or magic-link authentication at all. Anything behind the login page stays untested until someone notices and reconfigures the scanner.
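The failure is quiet because nothing forces the scanner to notice it. The sketch below shows the two checks someone would have to bolt on: whether a manually supplied token has outlived its lifetime, and whether a response looks like a bounce back to the login page. `StaticSession`, `looks_logged_out`, and the `/login` path are illustrative assumptions, not part of any specific tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass(frozen=True)
class StaticSession:
    """A session token pasted into the scanner config by hand."""
    token: str
    issued_at: datetime
    lifetime: timedelta

    def is_expired(self, now: datetime) -> bool:
        return now >= self.issued_at + self.lifetime


def looks_logged_out(status_code: int, location: Optional[str] = None,
                     login_path: str = "/login") -> bool:
    """Heuristic: a 401, or a redirect to the login page, means the session is dead."""
    if status_code == 401:
        return True
    is_redirect = status_code in (301, 302, 303, 307, 308)
    return is_redirect and location is not None and location.startswith(login_path)


issued = datetime(2024, 1, 1, tzinfo=timezone.utc)
session = StaticSession("example-token", issued, timedelta(hours=8))

print(session.is_expired(issued + timedelta(hours=1)))
print(session.is_expired(issued + timedelta(hours=9)))
print(looks_logged_out(302, "/login?next=/dashboard"))
```

Without checks like these, a scan that starts after the token expires still completes and still produces a report, just one that only covers the public pages.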

Add it up: manual configuration, stale signatures, blind crawling, fragile authentication. The scanner runs. It produces a report. But the report covers maybe 40% of what an actual attacker would probe.

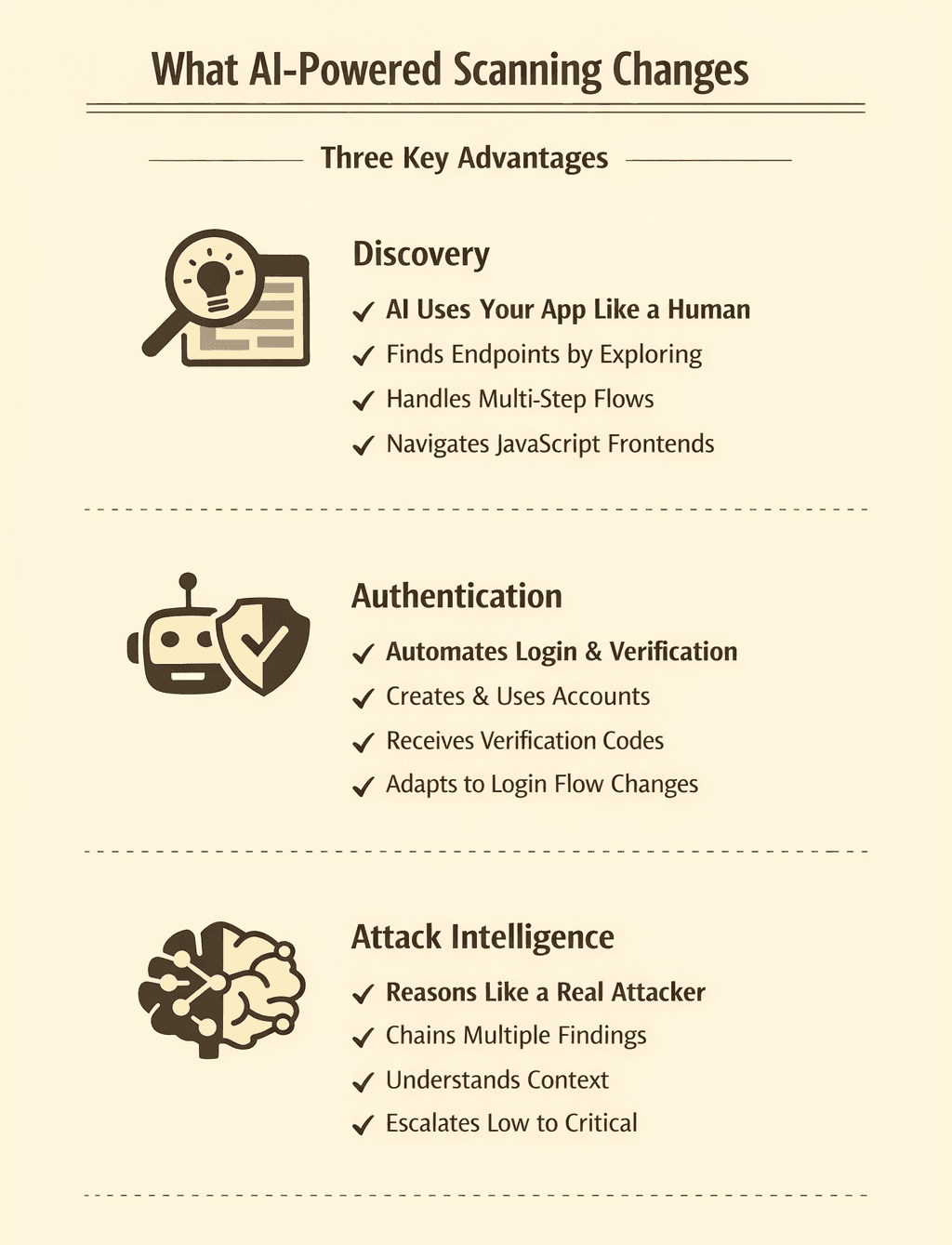

What AI-Powered Scanning Changes

An AI vulnerability scanner doesn't follow a script. It makes decisions.

Instead of crawling links and replaying canned payloads, an LLM-powered engine interacts with your application the way a human pentester would. It reads the page, understands context, decides what to click, what to type, and what to try next based on what it observes.

That difference matters in three specific ways.

First, discovery. AI agents find endpoints by using your application, not by parsing HTML for href attributes. They fill out forms, complete multi-step flows, observe network requests, and follow the paths your users actually take. JavaScript-heavy frontends aren't a blind spot. They're just another interface to navigate.

Second, authentication. Instead of recorded macros and manually configured tokens, LLM-powered scanners can create their own accounts. Axeploit's agents use their own email addresses and phone numbers to sign up, receive verification codes, and authenticate autonomously. When your login flow changes, the agents adapt. Nothing breaks.

Third, attack intelligence. Rather than matching signatures from a static database, AI agents reason about what they're seeing. They spot a file upload and test for path traversal. They notice an ID parameter and check for broken access control. They chain findings together the way a real attacker would, testing whether a low-severity issue becomes critical when combined with another.
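The broken access control check mentioned above comes down to a simple comparison once the agent has two accounts to work with. The sketch below shows only that final comparison, not the reasoning that leads an agent to try it; the `Response` type and `access_control_broken` function are hypothetical names for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Response:
    status: int
    body: str


def access_control_broken(owner: Response, other_user: Response) -> bool:
    """Flag the endpoint if a different user gets the owner's object back unchanged."""
    return (
        owner.status == 200
        and other_user.status == 200
        and other_user.body == owner.body
    )


# Hypothetical responses for GET /api/invoices/1042, requested by two accounts.
as_owner = Response(200, '{"id": 1042, "total": "310.00"}')
as_stranger_leaky = Response(200, '{"id": 1042, "total": "310.00"}')
as_stranger_denied = Response(403, '{"error": "forbidden"}')

print(access_control_broken(as_owner, as_stranger_leaky))
print(access_control_broken(as_owner, as_stranger_denied))
```

A signature library has no pattern for this, because both responses look like perfectly normal successful requests on their own.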

The Cost Difference Is Structural

Legacy DAST tools have two price tags: the one on the website and the one hiding in your engineering team's backlog. Configuration, maintenance, signature updates, re-testing after every deploy. That overhead runs $2,000 to $5,000 per year for a mid-size application before you count the subscription itself.

AI-powered scanning collapses that overhead to zero. You submit a URL. The scanner handles discovery, authentication, and attack selection autonomously. When your application changes, the next scan adapts. No reconfiguration. No stale signatures. No integration tax.

With Axeploit, that's $99 for a one-time audit or $199/month for continuous scanning. The full price. Not a starting point that balloons once you factor in the engineering time to keep the tool running.

Which Approach Fits Your Team?

If you have a dedicated security team that maintains scanner configurations as part of their job, legacy DAST still works. It's well-understood, well-documented, and mature.

If you're shipping fast, building with modern frameworks, and don't have a security engineer maintaining tooling, legacy DAST creates more work than it eliminates. You need a scanner that keeps up without being babysat.

That's the actual difference. It's not just what the tools find. It's how much of your time they consume to stay useful.

Try a zero-config scan: https://panel.axeploit.com/signup