The Interface That Doesn't Know You're Frustrated

You're clicking through the same broken checkout flow for the third time. Your heart rate is up. Your mouse movements are aggressive and imprecise. You're about to abandon the cart. The application has no idea. It's showing you the same button in the same shade of blue at the same size as when you were in a good mood twelve minutes ago.

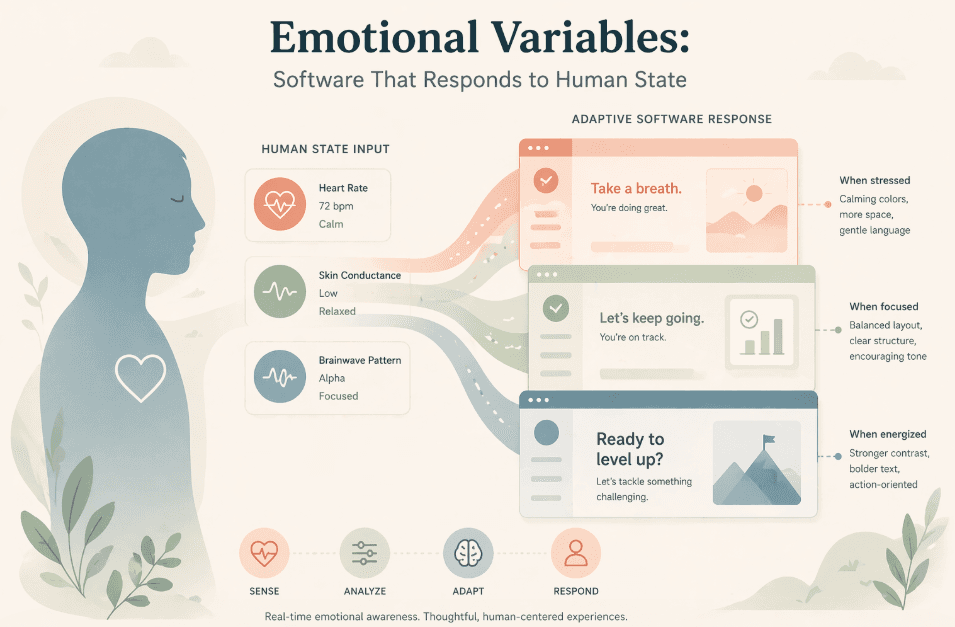

This is the problem that emotional variables in data structures are designed to solve. Not someday, theoretically. Right now, with tools and sensors that are already in the hands of millions of users.

What Emotional Variables Are

An emotional variable is a dynamic value in your application's state that represents an inferred or measured aspect of a user's emotional or physiological state. Like any other variable, it can be read, computed on, and used to drive interface decisions. Unlike other variables, it changes based on signals from the human using the software rather than actions the human explicitly performs.

Types of Emotional Variables

Valence: A measure of positive or negative emotional tone. Range typically -1.0 to 1.0. Derived from text sentiment analysis, interaction patterns, or explicit mood inputs.

Arousal: A measure of activation or excitement level. High arousal might indicate stress or enthusiasm; low arousal might indicate boredom or calm. Often derived from interaction speed, mouse velocity, or biometric signals.

Frustration Index: A composite score derived from error rates, repeated actions, abandonment patterns, and session duration on problem areas. Particularly actionable for product teams.

Cognitive Load Estimate: An inference about how much processing the user is currently doing, derived from response latency, reading behavior, and UI complexity exposure.
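To make these four variables concrete, here is a minimal sketch of how they might be typed. The interface name, the field names, and every range other than valence's -1.0 to 1.0 are assumptions for illustration, not an established schema.

```typescript
// Hypothetical shape for the four variables described above.
interface EmotionalVariables {
  valence: number;          // -1.0 (negative) to 1.0 (positive)
  arousal: number;          // assumed range: 0.0 (calm or bored) to 1.0 (highly activated)
  frustrationIndex: number; // assumed range: 0.0 to 1.0 composite score
  cognitiveLoad: number;    // assumed range: 0.0 to 1.0 estimate
}

// Clamp a value into [min, max] so noisy inference output stays in range.
function clamp(value: number, min: number, max: number): number {
  return Math.min(max, Math.max(min, value));
}

// Return a copy of the raw values with each field forced into its range.
function normalizeEmotionalVariables(raw: EmotionalVariables): EmotionalVariables {
  return {
    valence: clamp(raw.valence, -1, 1),
    arousal: clamp(raw.arousal, 0, 1),
    frustrationIndex: clamp(raw.frustrationIndex, 0, 1),
    cognitiveLoad: clamp(raw.cognitiveLoad, 0, 1),
  };
}
```

Clamping at the boundary means downstream consumers can rely on the ranges instead of re-checking them.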

Where the Signals Come From

The practical constraint with emotional variables is signal source. Biometric sensors exist but aren't ubiquitous. What's more interesting and more immediately usable is the inference chain from behavioral signals that are already being collected.

Mouse and Touch Dynamics

Mouse velocity, pressure on touchscreens, hesitation patterns, and click precision are all measurable and all correlate with emotional states in well-documented ways. Rapid, imprecise movements correlate with frustration. Slow, deliberate movements often indicate focus or confusion. Libraries for capturing and processing these signals at the client level are mature and readily available.
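As a rough illustration of "rapid, imprecise movements," the sketch below reduces a list of pointer samples to two numbers: mean speed and path efficiency (how straight the path was). The type names, the function name, and the choice of path efficiency as a stand-in for imprecision are all assumptions. It uses no library, and in a browser the samples could come from `pointermove` events.

```typescript
// One pointer position sample. In a browser these could be collected from
// "pointermove" events (clientX, clientY, timeStamp).
interface PointerSample {
  x: number; // pixels
  y: number; // pixels
  t: number; // milliseconds
}

interface PointerDynamics {
  meanSpeed: number;      // pixels per millisecond over the whole path
  pathEfficiency: number; // straight-line distance / travelled distance, 0 to 1
}

function summarizePointerPath(samples: PointerSample[]): PointerDynamics {
  let first: PointerSample | undefined;
  let prev: PointerSample | undefined;
  let travelled = 0;

  // Sum the length of every segment between consecutive samples.
  for (const s of samples) {
    if (first === undefined) first = s;
    if (prev !== undefined) {
      const dx = s.x - prev.x;
      const dy = s.y - prev.y;
      travelled += Math.sqrt(dx * dx + dy * dy);
    }
    prev = s;
  }

  // No movement at all: report zero speed and a perfectly straight path.
  if (first === undefined || prev === undefined || travelled === 0) {
    return { meanSpeed: 0, pathEfficiency: 1 };
  }

  const elapsed = prev.t - first.t;
  const endDx = prev.x - first.x;
  const endDy = prev.y - first.y;
  const displacement = Math.sqrt(endDx * endDx + endDy * endDy);

  return {
    meanSpeed: elapsed > 0 ? travelled / elapsed : 0,
    pathEfficiency: displacement / travelled,
  };
}
```

High speed combined with low path efficiency is one plausible proxy for the frustrated movement described above. Any thresholds would need to be tuned against real sessions.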

Text Input Patterns

How users type is as informative as what they type. Frequent backspacing, sudden pauses, or unusually fast typing all carry emotional signal. In conversational interfaces, sentiment analysis of the text itself provides direct valence data.
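The typing signals above can be approximated from nothing more than key names and timestamps. The following is a sketch under assumptions: the type names, the function name, and the 2000 ms pause threshold are illustrative choices. It does not attempt sentiment analysis of the text itself.

```typescript
interface KeyStroke {
  key: string; // e.g. a KeyboardEvent.key value such as "a" or "Backspace"
  t: number;   // milliseconds
}

interface TypingSignals {
  backspaceRatio: number; // fraction of keystrokes that were Backspace
  longPauseCount: number; // gaps between keystrokes longer than the threshold
  keysPerSecond: number;  // overall typing rate across the sample
}

// pauseThresholdMs is an assumed default, not an empirically derived value.
function summarizeTyping(strokes: KeyStroke[], pauseThresholdMs: number = 2000): TypingSignals {
  let count = 0;
  let backspaces = 0;
  let longPauses = 0;
  let firstT: number | undefined;
  let lastT: number | undefined;

  for (const s of strokes) {
    count += 1;
    if (s.key === "Backspace") backspaces += 1;
    if (lastT !== undefined && s.t - lastT > pauseThresholdMs) longPauses += 1;
    if (firstT === undefined) firstT = s.t;
    lastT = s.t;
  }

  const elapsedMs = firstT !== undefined && lastT !== undefined ? lastT - firstT : 0;

  return {
    backspaceRatio: count > 0 ? backspaces / count : 0,
    longPauseCount: longPauses,
    keysPerSecond: elapsedMs > 0 ? (count * 1000) / elapsedMs : 0,
  };
}
```

Only key names and timings are needed here, so a collector could discard the typed characters and keep just the Backspace flag.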

Session Behavior Patterns

Users who repeatedly visit the same error state, who scroll frantically without clicking, who open and immediately close the same dialog: these patterns are frustration signals that can be detected in existing analytics data and computed in real time.
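Here is one way those three session patterns might be counted from an event stream and folded into a single score. The event kinds, the field names, the 1500 ms "quick close" window, and the weights are all assumptions chosen for illustration. A real product would calibrate them against its own data.

```typescript
type SessionEventKind = "error_shown" | "dialog_opened" | "dialog_closed" | "scroll" | "click";

interface SessionEvent {
  kind: SessionEventKind;
  target: string; // hypothetical identifier for the error state or dialog
  t: number;      // milliseconds
}

interface FrustrationSignals {
  repeatedErrors: number;    // error states shown again after their first appearance
  quickDialogCloses: number; // dialogs closed within quickCloseMs of opening
  longestScrollRun: number;  // most scroll events seen with no click in between
}

function extractFrustrationSignals(events: SessionEvent[], quickCloseMs: number = 1500): FrustrationSignals {
  const errorCounts: { [target: string]: number } = Object.create(null);
  const openedAt: { [target: string]: number } = Object.create(null);
  let repeatedErrors = 0;
  let quickDialogCloses = 0;
  let scrollRun = 0;
  let longestScrollRun = 0;

  for (const e of events) {
    if (e.kind === "scroll") {
      scrollRun += 1;
      longestScrollRun = Math.max(longestScrollRun, scrollRun);
    } else if (e.kind === "click") {
      scrollRun = 0; // only a click ends a scroll run
    } else if (e.kind === "error_shown") {
      const seen = errorCounts[e.target] || 0;
      if (seen > 0) repeatedErrors += 1;
      errorCounts[e.target] = seen + 1;
    } else if (e.kind === "dialog_opened") {
      openedAt[e.target] = e.t;
    } else if (e.kind === "dialog_closed") {
      const opened = openedAt[e.target];
      if (opened !== undefined && e.t - opened <= quickCloseMs) quickDialogCloses += 1;
      delete openedAt[e.target];
    }
  }
  return { repeatedErrors, quickDialogCloses, longestScrollRun };
}

// Weighted sum capped at 1.0. The weights and the scroll allowance of 5 are assumptions.
function frustrationIndexFromSignals(s: FrustrationSignals): number {
  const raw =
    0.25 * s.repeatedErrors +
    0.2 * s.quickDialogCloses +
    0.02 * Math.max(0, s.longestScrollRun - 5);
  return Math.min(1, raw);
}
```

Keeping signal extraction separate from scoring lets the weights change without touching the event-parsing logic.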

The most powerful realization: you probably already have most of the data you need to compute basic emotional variables. You're just not doing it yet.

Integrating Emotional Variables into Data Structures

The architectural approach is cleaner than it might sound. Emotional variables live in your application state alongside every other piece of state. The difference is in how they're updated (event-driven, computed asynchronously) and in how other parts of the application subscribe to them.

A Simple Implementation Pattern

Consider a user state object that includes an emotionState field: { userId, sessionId, frustrationIndex, valence, arousalLevel, lastUpdated }. This object updates on a configurable interval using a signal processor that reads behavioral events from the session. UI components subscribe to changes in emotionState and adapt accordingly.
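A minimal sketch of that pattern follows, using the field names from the object above. The `EmotionStateStore` class, its method names, and the patch type are hypothetical. The store leaves scheduling to the caller, so the "configurable interval" would be a timer that calls `update` with whatever your signal processor returns.

```typescript
interface EmotionState {
  userId: string;
  sessionId: string;
  frustrationIndex: number;
  valence: number;
  arousalLevel: number;
  lastUpdated: number; // milliseconds since epoch
}

// Only the inferred fields can be patched; identity fields stay fixed.
type EmotionPatch = Partial<Pick<EmotionState, "frustrationIndex" | "valence" | "arousalLevel">>;
type EmotionListener = (state: EmotionState) => void;

class EmotionStateStore {
  private state: EmotionState;
  private listeners: EmotionListener[] = [];

  constructor(initial: EmotionState) {
    this.state = initial;
  }

  getState(): EmotionState {
    return this.state;
  }

  // Register a listener and return a function that removes it again.
  subscribe(listener: EmotionListener): () => void {
    this.listeners.push(listener);
    return () => {
      this.listeners = this.listeners.filter((l) => l !== listener);
    };
  }

  // Merge new values, stamp the update time, and notify every subscriber.
  update(patch: EmotionPatch, now: number = Date.now()): void {
    this.state = { ...this.state, ...patch, lastUpdated: now };
    for (const listener of this.listeners) {
      listener(this.state);
    }
  }
}
```

A component would call `subscribe` when it mounts and call the returned function when it unmounts. Because each update produces a new state object, subscribers can detect changes with a simple reference comparison.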

What does 'adapt accordingly' look like in practice? A high frustration index triggers a proactive help modal. A high cognitive load estimate reduces information density on the page. Low arousal on a checkout page triggers a subtle urgency signal or social proof element. The variations are limited only by product thinking, not technical feasibility.
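Those three rules can be written as a small pure function that maps emotional variables to interface decisions. This is a sketch, not a recommendation: the threshold values, the `"checkout"` page name, and all type and function names are assumptions.

```typescript
interface AdaptationInput {
  frustrationIndex: number; // assumed 0 to 1
  cognitiveLoad: number;    // assumed 0 to 1
  arousalLevel: number;     // assumed 0 to 1
  page: string;             // hypothetical page identifier, e.g. "checkout"
}

interface UiAdaptations {
  showHelpModal: boolean;
  density: "full" | "reduced";
  showSocialProof: boolean;
}

// Illustrative thresholds only; real values would come from testing.
const ADAPTATION_THRESHOLDS = { frustration: 0.7, cognitiveLoad: 0.6, lowArousal: 0.3 };

function decideAdaptations(input: AdaptationInput): UiAdaptations {
  return {
    // High frustration: offer help proactively.
    showHelpModal: input.frustrationIndex >= ADAPTATION_THRESHOLDS.frustration,
    // High cognitive load: show less information at once.
    density: input.cognitiveLoad >= ADAPTATION_THRESHOLDS.cognitiveLoad ? "reduced" : "full",
    // Low arousal on checkout: surface a social proof element.
    showSocialProof:
      input.page === "checkout" && input.arousalLevel <= ADAPTATION_THRESHOLDS.lowArousal,
  };
}
```

Keeping the rules in one pure function makes them easy to unit test and to review, which matters when the inputs are inferences about a person.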

The Psychology Behind the Engineering

This isn't a new idea. Affective computing as a field has been around since the 1990s. What's new is the combination of factors making it practically accessible: ubiquitous sensors, AI-powered inference models that run at the client level, and an engineering culture that's increasingly comfortable with complex, reactive state.

The psychological principles are grounded: emotional state measurably affects decision quality, attention, and task completion rates. Interfaces designed with awareness of these states consistently outperform static interfaces on engagement and conversion metrics. The frustration that kills conversion isn't imaginary; it's a measurable physiological state that good software can detect and respond to.

Ethics and Consent: The Necessary Conversation

Any serious discussion of emotional variables must include this dimension. Inferring and acting on a user's emotional state without their knowledge raises real ethical questions. The answers depend on the use case.

Using a frustration index to trigger a help modal: low risk, high user value, probably no consent required.

Using emotional data to optimize for addictive engagement patterns: high risk, potentially harmful, ethically unacceptable.

Using emotional data to personalize communication tone: moderate complexity, benefit depends on implementation.

The engineering community building in this space has a responsibility to think about these distinctions clearly. The capability to infer emotion doesn't confer the right to use it any way that increases a metric. Design with user benefit as the explicit objective, not user manipulation.

Axeploit's perspective is clear: the most durable software respects the humans it serves. Emotional variables, used ethically, are a way to build software that serves people better. Used cynically, they're a new vector for harm.