In February 2026, Amazon Threat Intelligence published a report about a financially motivated attacker who compromised over 600 FortiGate devices across 55 countries. The attacker was not particularly skilled. AWS assessed them as low-to-medium capability. They did not discover a zero-day. They did not write novel exploit code.

They scanned the internet for exposed management interfaces, tried common credentials, and got in. Then they used commercial AI services to write scripts that parsed stolen configurations, generated attack plans, and organized lateral movement across compromised networks.

That is the new threat model. Not a brilliant hacker in a dark room. A mediocre operator with an AI copilot running at industrial scale.

The Exploits Are Not New. The Scale Is.

Every vulnerability exploited in that FortiGate campaign was preventable with basic hygiene. Exposed admin panels on the public internet. Default or reused credentials. Single-factor authentication on VPN portals.

These are not sophisticated attack vectors. Security teams have been writing "restrict management interfaces" in compliance reports for a decade. The difference is who shows up to exploit the gap now.

Before AI augmentation, scanning the entire internet for exposed FortiGate management ports, brute-forcing credentials, extracting configurations, and planning lateral movement was a months-long operation requiring a capable team. In early 2026, one person did it in 38 days across 55 countries.

Anthropic's threat research tells the same story from a different angle. The company documented what it described as the first AI-orchestrated cyber espionage campaign, detected in September 2025: a state-sponsored group that used AI tooling to go after approximately 30 targets, including major tech firms, banks, and government agencies. By Anthropic's estimate, AI handled 80 to 90 percent of the attack lifecycle autonomously: reconnaissance, vulnerability identification, exploit generation, credential harvesting.

The humans pointed the system. The AI did the work.

Why This Changes the Math for Everyone

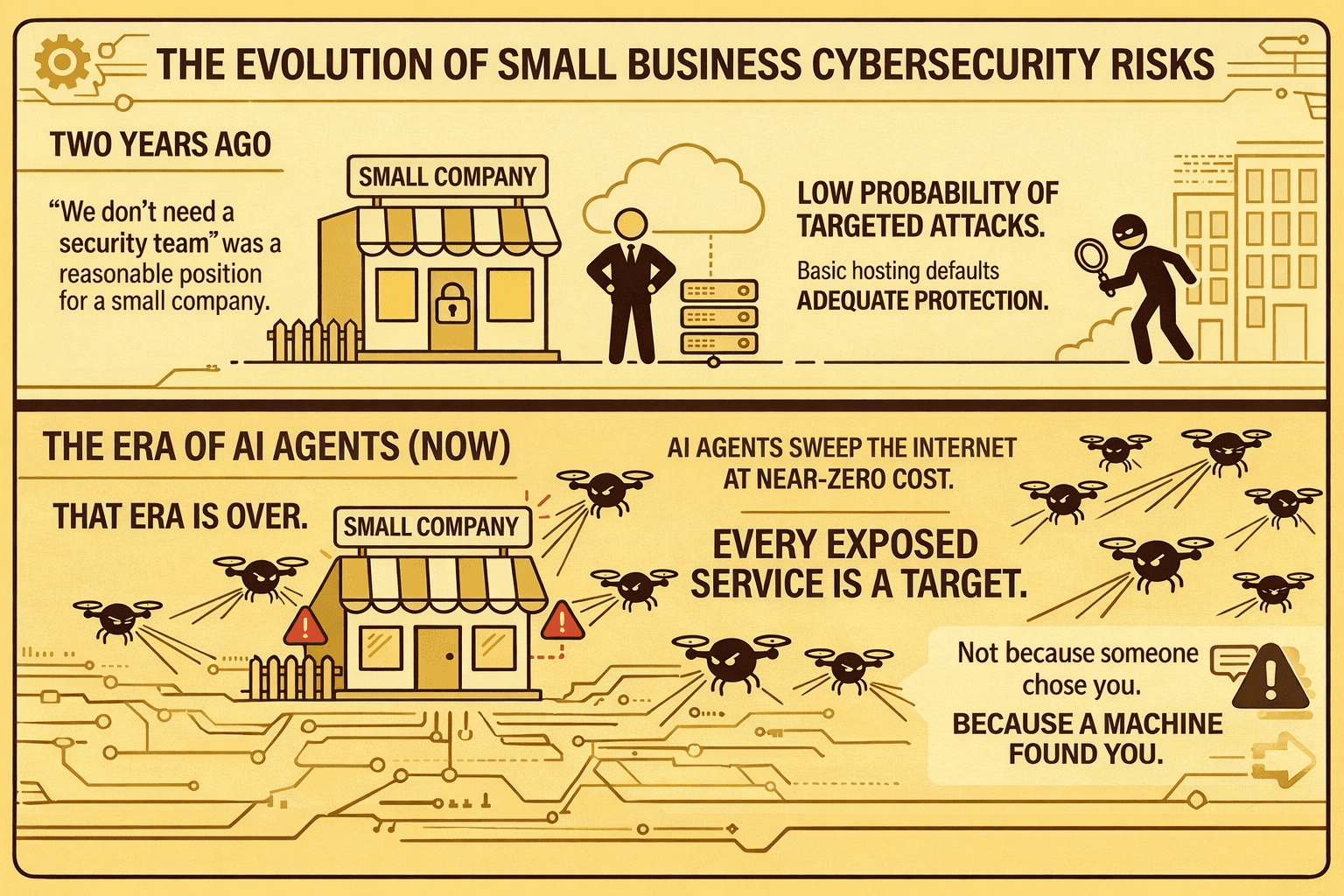

When exploitation required skill, attackers prioritized targets by value. State-sponsored groups hit high-value targets. Organized crime focused on financial institutions. If you were a 20-person startup with a React app and a Postgres database, the probability of a targeted attack was low.

That calculus breaks down when the attacker's cost per target approaches zero.

An autonomous agent does not decide whether your application is "worth" attacking. It scans, finds an exposed endpoint, and probes. It costs the attacker nothing to include you in a sweep of 10,000 targets. You are no longer protected by obscurity or by being small.

The FortiGate campaign is a clear example. The attacker was not selectively targeting critical infrastructure. They were vacuuming up everything with an exposed management port. The AI generated the scripts, parsed the results, and organized the data. Individual organizations were not selected. They were collected.

This is the shift AWS and Anthropic are both describing: the move from targeted hacking to industrialized exploitation. Autonomous attack agents lower the skill floor and raise the throughput ceiling at the same time.

What You Actually Need to Do

The uncomfortable truth is that autonomous attack agents are not exploiting zero-days. They are exploiting the basics you never got around to fixing.

Here is what the AWS report specifically recommends, and it reads like a security 101 checklist:

Lock down management interfaces. Your admin panels, database ports, and configuration endpoints should not be reachable from the public internet. This alone would have stopped the entire FortiGate campaign.
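One way to check this for yourself is to test, from a machine outside your network, whether your management ports accept TCP connections at all. The sketch below is a minimal illustration, not a replacement for a proper external scan. The port list and the `exposed_ports` helper are assumptions made for this example, and you should only run it against hosts you own.

```python
import socket

# Hypothetical set of management ports to check; adjust for your own services.
MANAGEMENT_PORTS = {
    22: "SSH",
    443: "HTTPS admin panel",
    5432: "PostgreSQL",
    8443: "alternate HTTPS admin panel",
}


def is_port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Refused, timed out, or unreachable: treat all of these as "not open".
        return False


def exposed_ports(host: str, ports=None, timeout: float = 2.0) -> dict:
    """Return the subset of ports (port -> label) that accept connections."""
    ports = MANAGEMENT_PORTS if ports is None else ports
    return {
        port: label
        for port, label in ports.items()
        if is_port_open(host, port, timeout)
    }
```

Calling `exposed_ports("your-public-hostname")` from an outside vantage point returns a dictionary of the ports that answered. Anything in that dictionary that is an admin or database interface is a candidate for a firewall rule or a VPN-only listener.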

Enforce multi-factor authentication. Single-factor auth on any administrative or VPN access is an open invitation. The brute-force phase of the FortiGate attack worked because MFA was not in place.

Rotate credentials aggressively. Default and reused passwords were the primary entry vector. Not buffer overflows. Not SQL injection. Passwords.
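As a rough sketch of what auditing for this looks like, the snippet below flags accounts that use a known default pair and groups systems that share the same password. The input format, the `audit_credentials` name, and the tiny `KNOWN_DEFAULTS` sample are all assumptions for illustration. In practice the data would come from a secrets manager or vault export, and the default list would be much longer.

```python
import hashlib
from collections import defaultdict

# Illustrative sample only, not a real default-credential wordlist.
KNOWN_DEFAULTS = {
    ("admin", "admin"),
    ("admin", "password"),
    ("admin", ""),
    ("root", "root"),
}


def audit_credentials(accounts):
    """Audit (system, username, password) tuples.

    Returns (defaults, reused):
      defaults: systems whose username/password is a known default pair
      reused:   sorted groups of systems that share the same password
    """
    defaults = []
    by_fingerprint = defaultdict(list)
    for system, username, password in accounts:
        if (username.lower(), password) in KNOWN_DEFAULTS:
            defaults.append(system)
        # Group by a hash so plaintext passwords are not used as dictionary keys.
        digest = hashlib.sha256(password.encode("utf-8")).hexdigest()
        by_fingerprint[digest].append(system)
    reused = [sorted(group) for group in by_fingerprint.values() if len(group) > 1]
    return defaults, reused
```

Every system in `defaults` needs a new password immediately, and every group in `reused` is a set of systems that all fall together if one password leaks.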

Monitor for anomalous access. AI-augmented attackers move fast once inside. Look for VPN connections from unexpected locations, new admin accounts, and unusual PowerShell activity.
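The three signals above can be turned into simple rules over whatever event stream you already collect. The sketch below assumes a hypothetical log schema (dictionaries with a `type` field plus `user`, `country`, `account`, `host`, and `command` keys). Real VPN, directory, and endpoint logs will look different, and these heuristics are a starting point rather than a detection product.

```python
def flag_events(events, expected_countries, known_admins):
    """Return human-readable alerts for events matching simple heuristics.

    events is an iterable of dicts in a hypothetical schema, for example:
      {"type": "vpn_login", "user": "alice", "country": "US"}
      {"type": "admin_created", "account": "support2"}
      {"type": "process_start", "host": "dc-1", "command": "powershell.exe ..."}
    """
    alerts = []
    for event in events:
        kind = event.get("type")
        if kind == "vpn_login":
            country = event.get("country")
            if country not in expected_countries:
                alerts.append(
                    f"vpn_login from unexpected country {country}: {event.get('user')}"
                )
        elif kind == "admin_created":
            account = event.get("account")
            if account not in known_admins:
                alerts.append(f"unrecognized admin account created: {account}")
        elif kind == "process_start":
            command = event.get("command", "").lower()
            # Encoded PowerShell commands are a common, though not conclusive, red flag.
            if "powershell" in command and "-enc" in command:
                alerts.append(f"encoded PowerShell on {event.get('host')}")
    return alerts
```

The value is less in the specific rules than in having any alert fire within minutes, since an attacker working from generated scripts will not wait for a weekly log review.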

Test your application layer. Network hardening stops the perimeter attack. It does not protect against broken access control, injection flaws, or session management bugs inside your application. If you are shipping code fast, your application logic is the layer most likely to have gaps that no firewall will catch.
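Broken access control is a good example of a bug no firewall sees. A minimal way to test for it is to request every resource as every user and confirm that non-owners are refused. In the sketch below, `fetch` is a hypothetical callable standing in for an authenticated HTTP request that returns a status code, and `broken_app` is a deliberately flawed stand-in for an application. Neither represents any particular framework or scanner.

```python
from typing import Callable, Dict, List, Tuple


def find_access_control_gaps(
    fetch: Callable[[str, str], int],
    owners: Dict[str, str],
    users: List[str],
) -> List[Tuple[str, str]]:
    """Return (user, resource) pairs where a non-owner received HTTP 200."""
    gaps = []
    for resource, owner in owners.items():
        for user in users:
            if user != owner and fetch(user, resource) == 200:
                gaps.append((user, resource))
    return gaps


def broken_app(user: str, resource: str) -> int:
    """Deliberately flawed: checks that the caller is logged in, never ownership."""
    return 200 if user else 401


owners = {"invoice-1": "alice", "invoice-2": "bob"}
gaps = find_access_control_gaps(broken_app, owners, ["alice", "bob"])
# gaps lists every case where one user could read another user's invoice.
```

Against `broken_app`, both cross-user reads show up as gaps. Against an application that returns 403 to non-owners, the list comes back empty, which is the result you want before shipping.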

The Baseline Just Got Higher

Two years ago, "we don't need a security team" was a reasonable position for a small company. The probability of a targeted attack was low enough that basic hosting defaults provided adequate protection.

That era is over. When AI agents can sweep the internet for low-hanging fruit at near-zero cost, every exposed service is a target. Not because someone chose you. Because a machine found you.

The same AI capabilities powering offense are powering defense. That includes automated security scanning that understands application context, tests authentication flows, and probes for access control failures without requiring a security engineer to configure every test.

But if your security posture today relies on "nobody's looking at us," that assumption no longer holds.

Start with the basics. Lock your doors. Then test what is behind them.

Run a zero-config security audit on your application: https://panel.axeploit.com/signup