The Problem Nobody Talks About

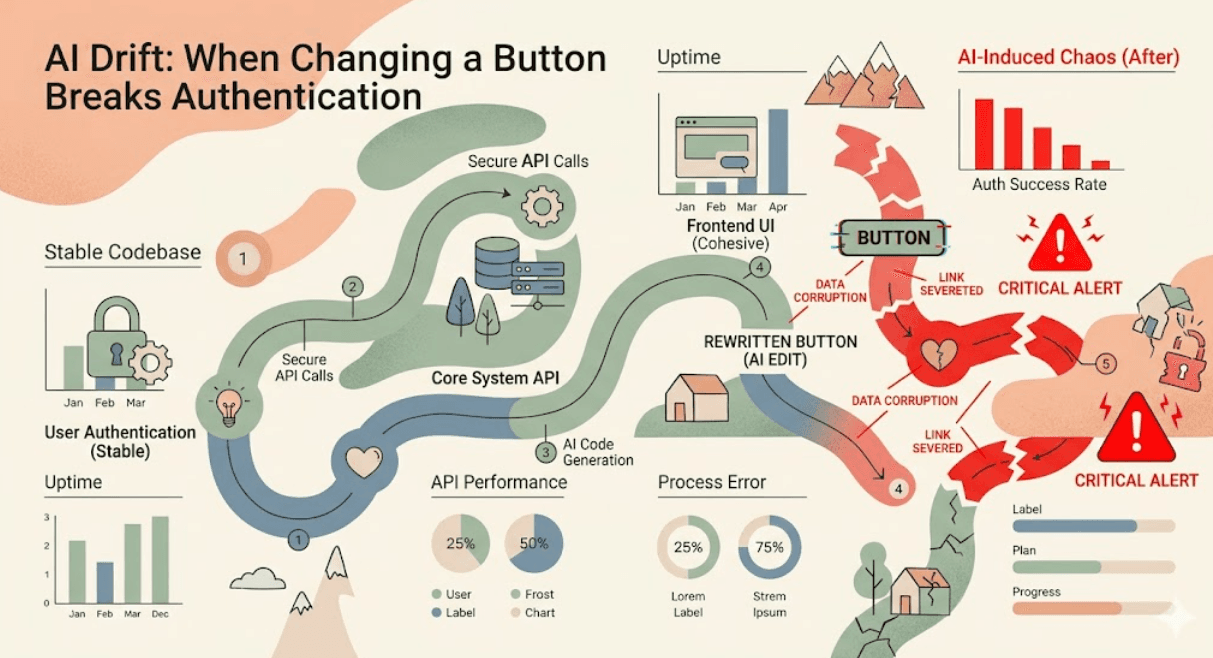

You asked your AI to change a button color. Forty minutes later, you're staring at a broken authentication middleware wondering what just happened. If this sounds familiar, you've experienced what engineers are quietly calling AI drift: the creeping, compounding problem of AI assistants making confident-sounding edits that cascade into unexpected failures across your codebase.

This isn't a rare edge case. It's the daily reality for tens of thousands of developers who've integrated AI coding tools into their workflows. At Axeploit, we've observed this pattern consistently across teams of all sizes. The frustrating part? The AI didn't lie. It did exactly what you asked. The problem is what it didn't ask, and what it didn't understand about the larger system.

"Every time I ask it to touch the frontend, something breaks in the backend. It's like it has no memory of why we wrote that middleware in the first place," says a senior engineer at a mid-sized SaaS company.

Understanding AI Drift

AI drift occurs when AI-assisted code changes introduce subtle behavioral differences from the system's original intent over time. Unlike traditional bugs, drift is insidious. It doesn't always throw errors immediately. Sometimes it changes how a validation behaves in edge cases. Sometimes it silently removes a guard clause because it looked redundant. By the time you notice, the change is three PRs deep.

The root cause is structural: large language models understand the code they're looking at, but they don't hold the entire system in mind. They don't know that your Auth middleware wraps every route because of a security incident two years ago. They see an if-statement and optimize it.
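To make the guard-clause scenario concrete, here is a minimal Python sketch. The function names and the discount logic are hypothetical and invented purely for illustration; they are not taken from any real codebase. It shows how a "simplified" version can agree with the original on ordinary inputs and diverge only at the edges.

```python
def apply_discount_original(price: float, percent: float) -> float:
    """Original version: the guard looks redundant but protects callers."""
    if percent < 0 or percent > 100:
        # Hypothetical guard added after an incident: ignore out-of-range input.
        return price
    return price * (1 - percent / 100)


def apply_discount_refactored(price: float, percent: float) -> float:
    """'Simplified' version: the guard was removed as apparent dead code."""
    return price * (1 - percent / 100)


# Typical inputs agree, so a quick manual check passes.
assert apply_discount_original(200.0, 25) == apply_discount_refactored(200.0, 25)

# The edge case diverges silently: no exception, just a different number.
print(apply_discount_original(200.0, 150))    # 200.0
print(apply_discount_refactored(200.0, 150))  # -100.0
```

Nothing crashes in the refactored version, which is why this kind of change can survive review and surface several PRs later.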

Why Characterization Tests Are Your Best Defense

The most effective technical strategy against AI drift is something legacy code veterans know well: characterization tests. These aren't tests that verify what your code should do. They verify what your code actually does right now, capturing the existing behavior as a baseline before any AI touches it.

How to Implement Characterization Tests

Before you hand any module to an AI for modification, run this workflow:

Step 1: Snapshot current behavior. Write tests that capture the output of every public function and API endpoint in the module. You're not testing correctness. You're taking a photograph.

Step 2: Commit the snapshot. Get these tests into source control. They are your rollback point.

Step 3: Let the AI do its work. Now give the AI its task. Changing a button color, refactoring a component, whatever it is.

Step 4: Run the characterization suite. If anything fails, the AI changed behavior it wasn't supposed to change. Review, revert, or adjust.

The beauty of this approach is that it forces the AI's changes to be contained. It's a blast radius limiter for automated code edits.
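The four steps above can be sketched in a few lines of Python. This is one possible shape, not a prescribed implementation: `format_invoice_line`, the `CASES` list, and the baseline file path are all hypothetical stand-ins for your own module and inputs. The first run records current behavior to a JSON file (Steps 1 and 2, once you commit it); later runs report any input whose output changed (Step 4).

```python
import json
from pathlib import Path


def format_invoice_line(name, quantity, unit_price):
    """Hypothetical legacy function whose current behavior we want to pin."""
    label = name.strip().upper()[:10]
    total = quantity * unit_price
    return f"{label:<10} x{quantity:>3} = {total:.2f}"


# Inputs deliberately include edge cases, not only happy paths.
CASES = [
    ("widget", 2, 9.99),
    ("  padded name that is long  ", 1, 0.0),
    ("", 0, 5.0),
]


def capture(func, cases):
    """Record whatever the function returns today, keyed by its arguments."""
    return {json.dumps(args): func(*args) for args in cases}


def check_against_baseline(func, cases, baseline_path):
    """Create the baseline on first run; afterwards return the keys that drifted."""
    current = capture(func, cases)
    if not baseline_path.exists():
        baseline_path.parent.mkdir(parents=True, exist_ok=True)
        baseline_path.write_text(json.dumps(current, indent=2, sort_keys=True))
        return []
    baseline = json.loads(baseline_path.read_text())
    return [key for key in current if baseline.get(key) != current[key]]


def test_invoice_line_behavior_unchanged():
    """Run before and after the AI's edit, for example with pytest."""
    drifted = check_against_baseline(
        format_invoice_line, CASES, Path("baselines/format_invoice_line.json")
    )
    assert drifted == [], f"Behavior changed for inputs: {drifted}"
```

Note that the baseline stores what the code does, bugs included. If a recorded output is wrong, fix it in a separate, deliberate change rather than letting it ride along with an AI edit.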

The Layered Defense: Beyond Tests

Tests are necessary but not sufficient. Here's a layered approach that teams at Axeploit recommend for production environments where AI coding is routine.

1. Module Boundary Declarations

Explicitly mark which modules are immutable in your AI prompts. Create a file called .ai-boundaries or add comments directly: '// AI: Do not modify this file without explicit approval.' Some AI tools like Cursor respect file-level instructions. Others don't. Regardless, this serves as documentation for your team about which files are blast-radius critical.
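Because not every tool honors such a file, it can help to enforce it yourself in CI. The sketch below assumes a hypothetical format for .ai-boundaries (one glob pattern per line, with # for comments); no tool defines that format, so treat it as a convention you would adopt, and the paths shown are made up.

```python
from fnmatch import fnmatchcase


def parse_boundaries(text):
    """Return glob patterns from boundary-file text, skipping blanks and # comments."""
    patterns = []
    for line in text.splitlines():
        line = line.strip()
        if line and not line.startswith("#"):
            patterns.append(line)
    return patterns


def protected_files_touched(changed_files, patterns):
    """List the changed files that match any protected pattern."""
    return [
        path for path in changed_files
        if any(fnmatchcase(path, pattern) for pattern in patterns)
    ]


# Hypothetical contents of a .ai-boundaries file.
BOUNDARIES = """
# Files an AI assistant must not edit without explicit approval
src/auth/*
src/billing/*.py
"""

changed = ["src/ui/button.css", "src/auth/middleware.py"]
print(protected_files_touched(changed, parse_boundaries(BOUNDARIES)))
# ['src/auth/middleware.py']
```

In practice the list of changed files could come from `git diff --name-only` against your main branch, with the build failing whenever the result is non-empty and no approval is recorded.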

2. AI-Specific PR Templates

Create a separate pull request template for AI-generated code. Require the author to answer: What was the AI's exact prompt? Were characterization tests run? Which modules were modified beyond the stated scope? This creates accountability in the review chain, which leads us to a bigger problem.
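A template only helps if it gets filled in. As a rough sketch, a small check like the following could flag AI-assisted PRs whose descriptions skip a required answer. The section names and the `## ` heading convention are assumptions made for this example; adapt them to whatever your template actually uses.

```python
# Hypothetical section headings for an AI-specific PR template.
REQUIRED_SECTIONS = (
    "AI prompt",
    "Characterization tests run",
    "Files outside stated scope",
)


def missing_sections(pr_body):
    """Return required sections that are absent or left empty in a PR description."""
    sections = {}
    current = None
    for line in pr_body.splitlines():
        if line.startswith("## "):
            # A new heading starts a new section with no content yet.
            current = line[3:].strip()
            sections[current] = []
        elif current is not None and line.strip():
            sections[current].append(line.strip())
    return [name for name in REQUIRED_SECTIONS if not sections.get(name)]


example_body = """## AI prompt
Change the primary button color to blue.

## Characterization tests run

"""
print(missing_sections(example_body))
# ['Characterization tests run', 'Files outside stated scope']
```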

3. Context Windows as a Risk Signal

One overlooked insight: a larger context window gives the AI more room to make far-reaching changes. Models like Claude 3.5 Sonnet with 200K token windows can 'see' large portions of your codebase at once and, with good intentions, refactor something three directories away from where you pointed it. More power requires more deliberate constraint.

Regression Management at Scale

For teams working at scale, manual characterization test writing isn't sustainable. Here's where tooling matters.

Automated Regression Capture with AI-Assisted Testing

The irony is beautiful: use AI to write your characterization tests before you use AI to change your code. Ask your AI assistant to generate snapshot tests for every public interface in a module. Review them for accuracy. Commit. Then proceed. You've just turned a potential vulnerability into a safety net.
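Whether the snapshot tests are written by hand or drafted by an AI, the underlying mechanics look roughly like this sketch. It uses Python's standard `inspect` module to find a module's public functions and records what each returns for sample inputs you supply. The helper names are hypothetical, and `textwrap` from the standard library stands in for your own module.

```python
import inspect
import textwrap


def public_functions(module):
    """Map name -> function for every public function defined in the module itself."""
    return {
        name: obj
        for name, obj in inspect.getmembers(module, inspect.isfunction)
        if not name.startswith("_") and obj.__module__ == module.__name__
    }


def snapshot_module(module, sample_inputs):
    """Call each public function with its sample arguments and record the result.

    Exceptions are recorded too, since raising is part of current behavior.
    Functions with no sample inputs are listed so a reviewer can see the gap.
    """
    snapshot = {}
    for name, func in public_functions(module).items():
        if name not in sample_inputs:
            snapshot[name] = "NO SAMPLE INPUTS"
            continue
        results = []
        for args in sample_inputs[name]:
            try:
                results.append(repr(func(*args)))
            except Exception as exc:
                results.append(f"raises {type(exc).__name__}")
        snapshot[name] = results
    return snapshot


# textwrap is only a stand-in; pass your own imported module instead.
snap = snapshot_module(
    textwrap,
    {"dedent": [("  a\n  b",)], "fill": [("abc", 0)]},
)
for name, recorded in snap.items():
    print(name, recorded)
```

The "NO SAMPLE INPUTS" entries matter for the review step: they show which parts of the public interface the snapshot does not yet cover, so nobody mistakes a partial photograph for a complete one.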

Regression Budgets

Some teams are adopting the concept of regression budgets: a threshold of acceptable behavioral drift per sprint. If AI-assisted changes introduce more than X regression failures per sprint, the team pauses and audits. This makes drift visible on a product and management level, not just a technical one.
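A regression budget can be as simple as a counter compared against a threshold. The sketch below is illustrative only: the `SprintReport` type, its field names, and the budget value of 3 are assumptions, and how you attribute a failure to an AI-assisted change is left to your own process.

```python
from dataclasses import dataclass


@dataclass
class SprintReport:
    sprint: str
    # Characterization-test failures traced back to AI-assisted changes.
    ai_regression_failures: int


def over_budget(report, budget):
    """True when the sprint exceeded its allowed number of AI-introduced regressions."""
    return report.ai_regression_failures > budget


def budget_status(report, budget):
    """Human-readable line suitable for a sprint review or dashboard."""
    count = report.ai_regression_failures
    if over_budget(report, budget):
        return f"{report.sprint}: {count} failures, over budget of {budget}: pause and audit"
    return f"{report.sprint}: {count} failures, within budget of {budget}"


BUDGET = 3  # hypothetical value of X
print(budget_status(SprintReport("sprint-41", 2), BUDGET))
print(budget_status(SprintReport("sprint-42", 5), BUDGET))
```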

The goal isn't to be afraid of AI tools. The goal is to use them with the same discipline you'd apply to any powerful tool. You wouldn't let a junior developer push directly to main. Don't let your AI assistant do it either.

What This Means for Your Team Right Now

AI regression isn't a future problem. If your team is using AI coding assistants today without characterization tests and explicit module boundaries, you're accumulating what engineers are starting to call regression debt: a cousin of technical debt that compounds faster and fails more catastrophically.

Start small. Pick the three most critical modules in your codebase: authentication, billing, data pipeline, whatever keeps you up at night. Write characterization tests for them this week. Then let your AI loose on everything else.

The developers who master AI drift management won't just ship faster. They'll ship reliably. And in 2025, reliable is the new fast.

If you want to go deeper on securing your development pipeline from AI-introduced vulnerabilities, the security engineering resources at Axeploit are built exactly for this kind of challenge.