Your application passes every automated test. The continuous integration pipeline shows green across the board. Load balancers distribute traffic evenly, deployments complete without issues, users onboard smoothly, and key metrics climb steadily.

Then the probes start hitting the live system. Logs fill with strange anomalies, and downtime soon follows. The puzzle resolves once you see the gap: your tests covered the expected paths through the application, while attackers deliberately walk the dangerous edges where vulnerabilities hide.

The Testing vs. Attack Pipeline

The application lifecycle splits into development tests, staging environments, and production deployments. Unit tests feed controlled inputs to individual functions, with dependencies mocked out. Integration tests hit real APIs to validate how components interact. End-to-end scripts simulate complete user journeys through clicks and form submissions.

Attackers focus relentlessly on fuzzing the boundaries where inputs meet code.

Why Developer Tests Are Blind to Attacks

Tests mirror user stories and expected behavior. A "submit order succeeds" test, for example, sends a clean, valid JSON payload that represents a legitimate customer. Attackers send syntactically valid but hostile JSON such as {"price":-999,"items":null}, which slips past validation that only checks structure. The tests pass with flying colors while real wallets drain in production.
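The following is a minimal Python sketch of value-level validation for that payload. The function name validate_order and its rules (a non-empty items list, a positive finite price) are illustrative assumptions and do not come from any real API. The point it makes is that json.loads accepts the hostile payload without complaint, and only explicit value checks reject it.

```python
import json
import math


def validate_order(raw: str) -> dict:
    """Parse a hypothetical order payload; raise ValueError on hostile values."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("order must be a JSON object")

    items = data.get("items")
    if not isinstance(items, list) or not items:
        raise ValueError("items must be a non-empty list")

    price = data.get("price")
    # bool is a subclass of int in Python, so reject it explicitly
    if isinstance(price, bool) or not isinstance(price, (int, float)):
        raise ValueError("price must be a number")
    # json.loads accepts NaN and Infinity by default; reject them
    if isinstance(price, float) and not math.isfinite(price):
        raise ValueError("price must be finite")
    if price <= 0:
        raise ValueError("price must be positive")
    return data
```

A real order flow should look up prices from its own catalog and never trust a client-supplied price. This sketch covers only the validation layer.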

Coverage metrics systematically overstate security. Teams celebrate 90% line coverage while edge-case parameters go completely untested. Does the query use bound parameters, or string concatenation? A test that passes one safe value cannot tell the difference. Attackers can, and they craft UNION SELECT payloads that extract data without anyone noticing.
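This Python sketch shows why one safe value proves nothing. It uses the standard library's sqlite3 module with a throwaway in-memory database, and the tables, rows, and payload are made up for illustration. Both functions return the same result for "widget". Given a UNION SELECT payload, only the concatenated version leaks the users table.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Hypothetical demo schema with one product and one user."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE products (name TEXT, price REAL);
        CREATE TABLE users (email TEXT, password_hash TEXT);
        INSERT INTO products VALUES ('widget', 9.99);
        INSERT INTO users VALUES ('admin@example.com', 'hash-123');
    """)
    return conn


def search_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # String concatenation: the input becomes part of the SQL text itself
    sql = "SELECT name, price FROM products WHERE name = '" + name + "'"
    return conn.execute(sql).fetchall()


def search_safe(conn: sqlite3.Connection, name: str) -> list:
    # Bound parameter: the input is always treated as a value, never as SQL
    return conn.execute(
        "SELECT name, price FROM products WHERE name = ?", (name,)
    ).fetchall()
```

An adversarial test has to send both the happy-path value and the hostile one. Only the second distinguishes the two implementations.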

Auth flows get little scrutiny beyond the basics. In testing, a login with valid credentials returns a token, and coverage stops there. Tests skip the expired tokens, replayed sessions, and forged signatures that attackers weaponize.
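Below is a minimal Python sketch of the negative cases a suite should exercise. It assumes a hypothetical HMAC-signed token format (base64 claims, a dot, a base64 signature) instead of a real JWT library. It also treats each token ID (jti) as single-use, which suits one-time links or signed requests better than long-lived sessions. The verifier rejects forged signatures, expired tokens, and replays.

```python
import base64
import hashlib
import hmac
import json

SECRET = b"test-only-secret"  # hypothetical key; load real keys from a secret store


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode().rstrip("=")


def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(body: str) -> str:
    return _b64(hmac.new(SECRET, body.encode(), hashlib.sha256).digest())


def issue(sub: str, jti: str, ttl: float, now: float) -> str:
    """Issue a hypothetical token: base64(claims) + "." + base64(HMAC-SHA256)."""
    body = _b64(json.dumps({"sub": sub, "jti": jti, "exp": now + ttl}).encode())
    return body + "." + _sign(body)


class Verifier:
    def __init__(self):
        self.seen = set()  # used token IDs; production needs a shared store with expiry

    def verify(self, token: str, now: float) -> dict:
        body, _, sig = token.partition(".")
        # Constant-time comparison of the presented and recomputed signatures
        if not hmac.compare_digest(sig.encode(), _sign(body).encode()):
            raise PermissionError("forged or corrupted signature")
        claims = json.loads(_unb64(body))
        if now >= claims["exp"]:
            raise PermissionError("token expired")
        if claims["jti"] in self.seen:
            raise PermissionError("token replayed")
        self.seen.add(claims["jti"])
        return claims
```

Passing the clock in as a parameter (now) lets a test jump past expiry without sleeping.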

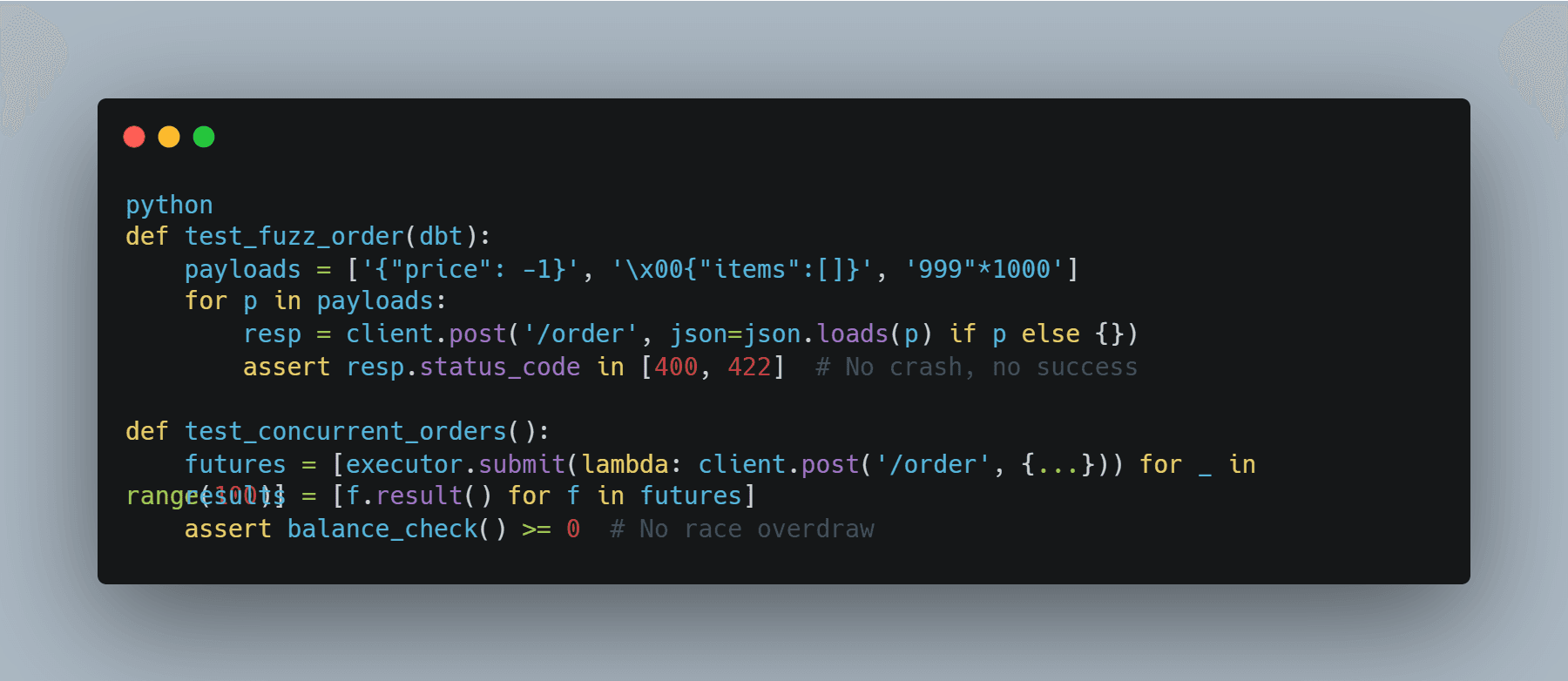

Concurrency is rare in test suites by design: tests run single-threaded and sequentially. Attackers exploit time-of-check-to-time-of-use (TOCTOU) races by firing parallel requests. Several of them pass the balance check before any debit is recorded, so one balance pays for two (or more) charges.

A real failure illustrates the disconnect. An e-commerce API passed its tests for POST /order with valid cart contents. In production it received 1,000 concurrent empty orders. No mutex guarded the balance check, so accounts were massively overdrawn.
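Here is a minimal Python sketch of the race, built on a hypothetical in-memory Account class. The gate parameter is a test hook (a threading.Barrier) that holds both requests between the check and the debit. That makes the double-spend reproducible instead of flaky. The safe variant performs the check and the debit under one lock.

```python
import threading


class Account:
    """Hypothetical in-memory account used to demonstrate a TOCTOU race."""

    def __init__(self, balance: int):
        self.balance = balance
        self._lock = threading.Lock()

    def withdraw_unsafe(self, amount: int, gate=None) -> bool:
        if self.balance >= amount:   # time of check
            if gate is not None:
                gate.wait()          # test hook: hold the window between check and use open
            self.balance -= amount   # time of use
            return True
        return False

    def withdraw_safe(self, amount: int) -> bool:
        with self._lock:             # check and debit happen as one indivisible step
            if self.balance >= amount:
                self.balance -= amount
                return True
            return False


def run_concurrently(fn, n: int) -> list:
    """Call fn from n threads at once and collect the return values."""
    results = []
    threads = [threading.Thread(target=lambda: results.append(fn())) for _ in range(n)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


# Two parallel "orders" against a balance that covers only one of them
racy = Account(100)
gate = threading.Barrier(2, timeout=5)
print(run_concurrently(lambda: racy.withdraw_unsafe(100, gate), 2))  # [True, True]
safe = Account(100)
print(sorted(run_concurrently(lambda: safe.withdraw_safe(100), 2)), safe.balance)  # [False, True] 0
```

A lock protects only a single process. Once several app instances share one database, the check and the debit have to be atomic in the database itself, for example through row locks, a conditional UPDATE, or SERIALIZABLE transactions.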

Attack Vectors That Tests Routinely Miss

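Fuzzing gaps persist because test inputs arrive already clean and normalized. Attackers use polyglot payloads such as %00../etc/passwd, which pack a URL-encoded null byte and a path traversal into one string. A single payload like that can defeat several sanitization layers that each check for only one pattern.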
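This minimal Python sketch shows path handling that survives this kind of payload. It assumes uploads live under a hypothetical base directory such as /srv/uploads. The function decodes first, rejects NUL bytes, then normalizes the path and confirms the result stays inside the base. The check is purely lexical and does not resolve symlinks, so it complements filesystem permissions rather than replacing them.

```python
import posixpath
from urllib.parse import unquote


def safe_join(base: str, user_path: str) -> str:
    """Join an untrusted relative path onto an absolute, normalized base directory."""
    decoded = unquote(user_path)  # decode %00, %2f, %2e before any check
    if "\x00" in decoded:
        raise ValueError("NUL byte in path")
    # normpath collapses "." and ".." segments; an absolute user path replaces base
    candidate = posixpath.normpath(posixpath.join(base, decoded))
    if posixpath.commonpath([base, candidate]) != base:
        raise ValueError("path escapes the base directory")
    return candidate
```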

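Timing attacks stay invisible because tests assert on what a response contains, not on how long it takes. Side channels leak through measurable response-time differences that reveal internal state. An early-exit string comparison, for example, returns faster the sooner it hits a mismatched byte.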
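In Python the usual fix is hmac.compare_digest, which the standard library documents as avoiding content-based short-circuiting. The sketch below contrasts it with an early-exit loop, and both function names are illustrative. compare_digest can still reveal the length of its inputs, so where possible compare fixed-length values such as digests.

```python
import hmac


def naive_equal(a: bytes, b: bytes) -> bool:
    """Early-exit comparison: its running time reveals how many leading bytes match."""
    if len(a) != len(b):
        return False
    for x, y in zip(a, b):
        if x != y:
            return False
    return True


def check_api_key(supplied: str, expected: str) -> bool:
    """Compare secrets without content-dependent short-circuiting."""
    return hmac.compare_digest(supplied.encode(), expected.encode())
```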

Headers get little testing attention, and tests send only the basic ones. Attackers spoof X-Forwarded-For to fake their client IP address and bypass rate limits keyed on it.
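The following minimal Python sketch resolves the client address safely. It assumes a hypothetical TRUSTED_PROXIES list that holds the address ranges of your own load balancers. The code honors X-Forwarded-For only when the direct peer is one of those proxies. It reads the chain from right to left, so entries an attacker prepends are ignored. Rate limits should then be keyed on the returned address.

```python
import ipaddress
from typing import Optional

# Hypothetical: the address ranges of your own load balancers / reverse proxies
TRUSTED_PROXIES = [ipaddress.ip_network("10.0.0.0/8")]


def _is_trusted(addr) -> bool:
    return any(addr in net for net in TRUSTED_PROXIES)


def client_ip(remote_addr: str, xff: Optional[str] = None) -> str:
    """Return the nearest untrusted hop, ignoring anything a client could prepend."""
    hops = [h.strip() for h in xff.split(",")] if xff else []
    hops.append(remote_addr)  # the direct TCP peer is the nearest hop
    candidate = remote_addr
    for hop in reversed(hops):  # walk from our side outward
        try:
            addr = ipaddress.ip_address(hop)
        except ValueError:
            break  # malformed entry: trust nothing further left
        candidate = str(addr)
        if not _is_trusted(addr):
            break  # first address not owned by our own proxies
    return candidate
```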

File uploads get tested with legitimate PNG files. Attackers upload PHP shells renamed with a .jpg extension, which execute if the server trusts the extension and the file later reaches an interpreter.
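This minimal Python sketch checks a file's leading magic bytes against its claimed extension, so a PHP script named shell.jpg is rejected. The allow-list and the check_upload name are illustrative assumptions.

```python
import os

PNG_MAGIC = b"\x89PNG\r\n\x1a\n"
JPEG_MAGIC = b"\xff\xd8\xff"

# Hypothetical allow-list: extension -> required leading bytes
ALLOWED = {".png": PNG_MAGIC, ".jpg": JPEG_MAGIC, ".jpeg": JPEG_MAGIC}


def check_upload(filename: str, content: bytes) -> str:
    """Return the normalized extension, or raise ValueError if name and content disagree."""
    ext = os.path.splitext(filename.lower())[1]  # "shell.php.jpg" -> ".jpg"
    magic = ALLOWED.get(ext)
    if magic is None:
        raise ValueError(f"extension {ext!r} not allowed")
    if not content.startswith(magic):
        raise ValueError("file content does not match its extension")
    return ext
```

Magic bytes can be forged: a file can start with a valid JPEG header and still contain PHP. Treat this check as one layer. Also store uploads outside the web root under random names, serve them from a location that never executes code, and re-encode images where possible.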

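GraphQL endpoints often ship without query depth or cost limits, and introspection is sometimes left enabled in production. Attackers use introspection queries to dump the entire schema in a single request. They then craft deeply nested queries that multiply the backend work each request triggers.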

An outage case shows the consequences. A SaaS dashboard passed tests confirming that a users=me query returned only the caller's own data. Attackers used GraphQL aliases to batch users=* lookups into a single request and dumped the entire customer database.
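Here is a rough Python heuristic for enforcing depth and alias limits before a query reaches the resolvers. It is a sketch, not a GraphQL parser. Regular expressions blank out string literals, comments, and argument lists, and then the code counts brace nesting and alias colons. It does not expand fragments or handle block strings, so a production server should rely on its GraphQL library's own validation or cost-analysis hooks. The function names and default limits are assumptions.

```python
import re


def _strip(query: str) -> str:
    """Blank out parts of a GraphQL query that can contain braces or colons."""
    query = re.sub(r'"(?:\\.|[^"\\])*"', '""', query)  # string literals
    query = re.sub(r"#[^\n]*", "", query)              # comments
    previous = None
    while previous != query:                           # argument lists, innermost first
        previous = query
        query = re.sub(r"\([^()]*\)", "", query)
    return query


def query_stats(query: str) -> tuple:
    """Return (max selection depth, alias count) for a query, heuristically."""
    cleaned = _strip(query)
    depth = max_depth = 0
    for ch in cleaned:
        if ch == "{":
            depth += 1
            max_depth = max(max_depth, depth)
        elif ch == "}":
            depth -= 1
    # With arguments removed, "name:" inside a selection set can only be an alias
    aliases = len(re.findall(r"[_A-Za-z]\w*\s*:", cleaned))
    return max_depth, aliases


def enforce_limits(query: str, max_depth: int = 6, max_aliases: int = 5) -> None:
    depth, aliases = query_stats(query)
    if depth > max_depth:
        raise ValueError(f"query depth {depth} exceeds {max_depth}")
    if aliases > max_aliases:
        raise ValueError(f"{aliases} aliases exceed {max_aliases}")
```

An alias cap matters here because aliases let one request repeat the same field with different arguments, which sidesteps per-request rate limits.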

Bridging with Attacker-Style Testing

Shift test suites toward adversarial thinking by applying chaos engineering principles throughout, so that testing systematically mimics real threats.

Fuzz Inputs: Go beyond basic random fuzzing. Point coverage-guided tools such as AFL++ or go-fuzz at your endpoint handlers, and treat any crash on invalid UTF-8 sequences or other parser breakers as a failure.
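AFL++ and go-fuzz are coverage-guided fuzzers for native code and Go. The mutation loop at their core can be sketched in a few lines of standard-library Python. The parse_body target and the seed corpus are hypothetical. The loop mutates seeds (bit flips, spliced-in invalid UTF-8 and JSON fragments, deletions) and records any exception other than ValueError as a crash. Unlike AFL++, it has no coverage feedback.

```python
import json
import random


def parse_body(raw: bytes) -> dict:
    """Hypothetical request-body parser under test: must fail only with ValueError."""
    text = raw.decode("utf-8")   # UnicodeDecodeError is a subclass of ValueError
    data = json.loads(text)      # so is json.JSONDecodeError
    if not isinstance(data, dict):
        raise ValueError("body must be a JSON object")
    return data


SEEDS = [b'{"price": 10, "items": ["a"]}', b'{"q": "caf\xc3\xa9"}']
BREAKERS = [b"\xff", b"\xc3", b"\x00", b"{", b'"', b"\\u"]  # invalid UTF-8, NUL, JSON fragments


def mutate(data: bytes, rng: random.Random) -> bytes:
    buf = bytearray(data)
    for _ in range(rng.randint(1, 4)):
        op = rng.randrange(3)
        if op == 0 and buf:
            i = rng.randrange(len(buf))
            buf[i] ^= 1 << rng.randrange(8)      # flip one bit
        elif op == 1:
            pos = rng.randrange(len(buf) + 1)
            buf[pos:pos] = rng.choice(BREAKERS)  # splice in a parser breaker
        elif buf:
            del buf[rng.randrange(len(buf))]     # drop one byte
    return bytes(buf)


def fuzz(target, iterations: int = 5000, seed: int = 0) -> list:
    """Feed mutated seeds to target; return inputs that raised anything but ValueError."""
    rng = random.Random(seed)
    crashes = []
    for _ in range(iterations):
        sample = mutate(rng.choice(SEEDS), rng)
        try:
            target(sample)
        except ValueError:
            pass                                 # controlled rejection
        except Exception as exc:                 # anything else counts as a crash
            crashes.append((sample, repr(exc)))
    return crashes
```

For Python services, Google's Atheris provides the coverage guidance that AFL++ provides for native code.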

Chaos Engineering: Use Gremlin to inject artificial latency and kill pods at random, then verify that failover mechanisms activate correctly under stress.

Auth Hammer: Use Burp Suite to replay captured tokens repeatedly. Verify revocation mechanisms trigger appropriately.

Resource Probes: Simulate Slowloris attacks (headers sent slowly and never finished) and amplification floods such as Memcached reflection to measure how well the service resists resource exhaustion.
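A Slowloris probe tests whether the server enforces a deadline on reading the request head. The following minimal asyncio sketch assumes a hand-rolled handler. Most production servers and reverse proxies expose an equivalent header-timeout setting instead. Here the whole head must arrive within one overall timeout, so dribbling bytes cannot keep the connection alive.

```python
import asyncio


async def read_headers(reader: asyncio.StreamReader, timeout: float = 10.0) -> bytes:
    """Return the request head, or raise asyncio.TimeoutError if it arrives too slowly."""
    # One overall deadline for the whole head: a Slowloris client that sends a byte
    # every few seconds cannot reset it the way it would reset a per-read idle timeout.
    return await asyncio.wait_for(reader.readuntil(b"\r\n\r\n"), timeout)


async def handle(reader: asyncio.StreamReader, writer: asyncio.StreamWriter) -> None:
    """Connection handler for asyncio.start_server (hypothetical minimal server)."""
    try:
        await read_headers(reader)
    except (asyncio.TimeoutError, asyncio.IncompleteReadError, asyncio.LimitOverrunError):
        writer.close()  # drop slow, truncated, or oversized request heads
        await writer.wait_closed()
        return
    writer.write(b"HTTP/1.1 204 No Content\r\nConnection: close\r\n\r\n")
    await writer.drain()
    writer.close()
    await writer.wait_closed()
```

Wiring the handler up with asyncio.start_server(handle, host, port, limit=16384) also caps the head size. The limit value is an assumption, and readuntil raises LimitOverrunError once the head grows past it.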

Example hardened test for the order API:
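This is a minimal sketch in the pytest style, built around a hypothetical price_order function and catalog. It uses a seeded random generator from the standard library, so it runs without extra dependencies. Hypothesis's @given decorator would replace the hand-rolled generator and shrink any failing input to a minimal case. Two invariants are checked. Every hostile payload must be rejected with ValueError and never with another exception. Every accepted order must have a positive total computed from server-side prices only.

```python
import random

# Hypothetical server-side catalog: prices in cents, never taken from the client
CATALOG = {"sku-1": 1999, "sku-2": 500}
MAX_QTY = 100


def price_order(payload) -> int:
    """Hypothetical order-handler core: return the total in cents or raise ValueError."""
    if not isinstance(payload, dict):
        raise ValueError("order must be an object")
    items = payload.get("items")
    if not isinstance(items, list) or not items:
        raise ValueError("items must be a non-empty list")
    total = 0
    for item in items:
        if not isinstance(item, dict):
            raise ValueError("each item must be an object")
        sku, qty = item.get("sku"), item.get("qty")
        # Check the type first: an unhashable sku such as [] would raise TypeError
        if not isinstance(sku, str) or sku not in CATALOG:
            raise ValueError("unknown sku")
        if isinstance(qty, bool) or not isinstance(qty, int) or not 1 <= qty <= MAX_QTY:
            raise ValueError(f"qty must be an integer from 1 to {MAX_QTY}")
        total += CATALOG[sku] * qty  # any client-supplied "price" field is ignored
    return total


HOSTILE = [None, -999, 0, 10**18, 1.5, float("nan"), True, "", "sku-1' OR 1=1 --", [], {}, "\x00"]
VALID = ["sku-1", "sku-2", 1, 3]


def random_payload(rng: random.Random):
    def pick():
        return rng.choice(HOSTILE + VALID)

    items = [{"sku": pick(), "qty": pick(), "price": pick()} for _ in range(rng.randint(0, 3))]
    return rng.choice([{"items": items}, {"items": pick()}, items, pick()])


def test_order_invariants(iterations: int = 5000, seed: int = 1234):
    rng = random.Random(seed)
    for _ in range(iterations):
        payload = random_payload(rng)
        try:
            total = price_order(payload)
        except ValueError:
            continue  # a controlled rejection is the expected outcome for hostile input
        # Accepted orders must have a positive total built from catalog prices only
        assert 0 < total <= max(CATALOG.values()) * MAX_QTY * len(payload["items"])


def test_rejects_hostile_payload_from_article():
    try:
        price_order({"price": -999, "items": None})
    except ValueError:
        return
    raise AssertionError("hostile payload was accepted")
```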

CI mandates: Require pytest combined with Hypothesis property-based fuzzing, and fail pull requests immediately whenever a hostile input escapes validation.

Runtime: Use canary releases that route 1% of traffic to the new code version, and monitor immediately for error spikes and anomalous patterns.

Tools stack: Run automated OWASP ZAP scans against staging environments, and run Nuclei templates continuously to catch known CVEs.

Production Defenses Beyond Tests

Tests gate code quality effectively. Runtime controls stop real attacks.

WAF: Deploy ModSecurity rules to block SQL injection and XSS before traffic reaches your app.

API Gateway: Configure Kong with strict quotas (e.g., 100 requests/min per API key).
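Kong enforces quotas through configuration rather than code, but the counting such a quota implies can be sketched in Python. This is a fixed-window limiter keyed by API key, with an injectable clock for testing. The class name and defaults are illustrative, and the sketch is not thread-safe. Fixed windows can let through up to twice the limit around a window boundary, which is why sliding-window and token-bucket variants exist.

```python
import time


class FixedWindowLimiter:
    """Allow at most `limit` requests per `window` seconds for each key (e.g. API key)."""

    def __init__(self, limit: int = 100, window: float = 60.0, clock=time.monotonic):
        self.limit = limit
        self.window = window
        self.clock = clock
        self._state = {}  # key -> (window index, requests counted in that window)

    def allow(self, key: str) -> bool:
        current = int(self.clock() // self.window)
        start, count = self._state.get(key, (current, 0))
        if start != current:
            start, count = current, 0  # a new window has begun: reset the counter
        if count >= self.limit:
            return False
        self._state[key] = (start, count + 1)
        return True
```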

RLS: Enforce PostgreSQL row-level security to filter data at the database layer, not in application code.

Secrets: Remove hardcoded credentials entirely. Use HashiCorp Vault with enforced 1-hour TTL rotation.

Example: /order Endpoint

- Pre-WAF: Validate all incoming JSON schemas

- Post-auth: Apply RLS policies on `carts` access

- Transaction: Use `SERIALIZABLE` isolation to prevent race conditions

- Post-transaction: Log every action with full audit context
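The SERIALIZABLE step needs a PostgreSQL server, so this self-contained sketch swaps in SQLite through Python's sqlite3 module. It also uses a different technique: a conditional UPDATE that makes the check and the debit a single atomic statement. The schema and the place_order name are hypothetical. The audit row is written in the same transaction as the debit. Under PostgreSQL SERIALIZABLE, conflicting transactions instead fail with a serialization error (SQLSTATE 40001) and must be retried by the caller.

```python
import sqlite3


def setup() -> sqlite3.Connection:
    """In-memory stand-in for the order database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:", isolation_level=None)  # we issue BEGIN/COMMIT ourselves
    conn.executescript("""
        CREATE TABLE accounts (id INTEGER PRIMARY KEY, balance INTEGER NOT NULL);
        CREATE TABLE audit_log (account_id INTEGER, action TEXT, amount INTEGER);
        INSERT INTO accounts VALUES (1, 100);
    """)
    return conn


def place_order(conn: sqlite3.Connection, account_id: int, amount: int) -> bool:
    conn.execute("BEGIN IMMEDIATE")  # take SQLite's write lock up front
    try:
        # Check and debit in one statement, so no other writer can slip in between
        cur = conn.execute(
            "UPDATE accounts SET balance = balance - ? WHERE id = ? AND balance >= ?",
            (amount, account_id, amount),
        )
        ok = cur.rowcount == 1
        # Audit row commits or rolls back together with the debit
        conn.execute(
            "INSERT INTO audit_log VALUES (?, ?, ?)",
            (account_id, "order" if ok else "order_rejected", amount),
        )
        conn.execute("COMMIT")
        return ok
    except Exception:
        conn.execute("ROLLBACK")
        raise
```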

Scale & Resilience

- Run load tests with k6 simulating 10k concurrent users

- Alert when p95 latency exceeds 500ms
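Below is a minimal sketch of the p95 alert rule. It uses the nearest-rank percentile definition, with integer arithmetic so that floating-point rounding cannot shift the rank. The function names are illustrative. Monitoring systems usually estimate quantiles from histograms, so their numbers can differ slightly.

```python
def p95(samples_ms) -> float:
    """Nearest-rank 95th percentile of latency samples in milliseconds."""
    if not samples_ms:
        raise ValueError("no samples")
    ordered = sorted(samples_ms)
    rank = (95 * len(ordered) + 99) // 100  # ceil(0.95 * n) without float rounding
    return ordered[rank - 1]


def should_alert(samples_ms, threshold_ms: float = 500.0) -> bool:
    """True when p95 latency exceeds the threshold (500 ms by default)."""
    return p95(samples_ms) > threshold_ms
```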

Cost Reality

Fuzzing adds ~2 minutes to build time.

Breaches cost orders of magnitude more financially and reputationally.

Reframed: Test the Edges, Not the Center

Applications survive when tests simulate malicious intent.

Cover:

- malformed inputs

- concurrency races

- resource starvation

Developers build smooth paths.

Attackers search for cracks.

Secure systems assume failure paths first and defend them by default.

Final Note

Use https://axeploit.com to:

- Automate edge-case testing across critical endpoints

- Continuously fuzz inputs to uncover hidden vulnerabilities

- Simulate real-world exploit scenarios against your APIs and services

- Detect regressions in security posture with every deployment

You can also:

- Schedule weekly automated tests to ensure ongoing protection

- Run scans on every build or release candidate

- Get actionable reports highlighting exploitable weaknesses

- Integrate directly into CI/CD pipelines for zero-friction security

Outcome: Security testing shifts from a one-time effort to a continuous, automated defense layer.