For the last few years, "AI in the editor" meant autocomplete and chat. Useful, but still a side panel. The shift happening now is different: your editor is becoming the place where an agent plans work, edits the repo, calls external tools, and hands you something you can actually ship. That is closer to an operating system than to a spell checker.

Cursor is the shell. MCP (Model Context Protocol) is how that shell talks to databases, docs, browsers, and security tools without a custom integration for every vendor. Together they describe what I call an AI operating system for software work: one loop from intent to artifact, with clear places for you to steer.

Logic: Why This Feels Like an OS

A traditional OS schedules processes, manages files, and exposes a stable way for programs to use hardware. A dev "AI OS" does the same job at a different layer.

- Processes: Agent turns, tool calls, and background tasks (tests, linters, scans).

- Files: Your repo, configs, and generated assets stay the source of truth.

- Stable interface: MCP standardizes how models request actions from the outside world.

Without that interface, every tool needs a bespoke plugin. With MCP, the model learns one pattern: connect to a server, list its tools, and call one with JSON arguments that match the tool's schema. Your job stops being "glue thirty integrations" and becomes "pick the right servers and write clear rules."
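To make that pattern concrete, here is a minimal sketch of the two messages a client sends. MCP messages are JSON-RPC 2.0, and the `tools/list` and `tools/call` method names come from the MCP specification. The `search_docs` tool, its schema, and the helper function names are hypothetical and exist only for illustration. No transport is shown.

```python
import json


def jsonrpc_request(method, params=None, request_id=1):
    """Build a JSON-RPC 2.0 request, the envelope MCP messages use."""
    message = {"jsonrpc": "2.0", "id": request_id, "method": method}
    if params is not None:
        message["params"] = params
    return message


def list_tools_request(request_id=1):
    """Ask a server which tools it exposes."""
    return jsonrpc_request("tools/list", request_id=request_id)


def call_tool_request(name, arguments, request_id=2):
    """Invoke one named tool with JSON arguments."""
    params = {"name": name, "arguments": arguments}
    return jsonrpc_request("tools/call", params, request_id=request_id)


# Hypothetical tool entry, shaped like one item in a tools/list result.
EXAMPLE_TOOL = {
    "name": "search_docs",
    "description": "Search internal documentation (illustrative only).",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}


def missing_required(tool, arguments):
    """Return required argument names that the caller did not supply."""
    required = tool["inputSchema"].get("required", [])
    return [key for key in required if key not in arguments]


if __name__ == "__main__":
    print(json.dumps(list_tools_request()))
    print(json.dumps(call_tool_request("search_docs", {"query": "rate limits"})))
```

The point of the sketch is that every server looks the same from the client side: only the tool name and the argument object change.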

Explicitness: What Cursor Actually Owns

Cursor already indexes your codebase, applies project rules, and runs multi-file edits. Where it levels up is full-cycle work inside the repo: read context, propose a plan, implement, run commands, fix failures, repeat.

That only works if three things stay explicit:

- Scope. What is in bounds for this session (one feature, one bug, one migration)?

- Authority. What the agent may run without asking (formatters) versus what needs a human yes (deletes, production deploys, billing changes).

- Evidence. Tests, type errors, and security checks are not vibes. They are signals the loop must react to.

MCP matters here because it moves "evidence gathering" out of copy-paste hell. Your agent can query your CMS, hit an internal API, or trigger a scan, then fold the result back into the next edit. The loop gets shorter every time you remove a manual hop.
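The authority rule is the easiest of the three to write down and the easiest to forget to enforce. A minimal sketch, assuming a simple policy where a short allowlist of commands runs without asking and everything else waits for a human. The specific commands, the risky-word list, and the `decide` function are illustrative assumptions, not Cursor's actual permission model.

```python
import shlex
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"  # agent may run this without asking
    ASK = "ask"      # a human must approve first


# Hypothetical policy: command prefixes considered safe to run unattended.
AUTO_ALLOWED_PREFIXES = [
    ("npm", "test"),
    ("npx", "prettier"),
    ("npx", "eslint"),
]

# Hypothetical policy: any of these tokens forces a human decision.
ALWAYS_ASK_TOKENS = {"rm", "deploy", "drop", "push"}


def decide(command: str) -> Decision:
    """Classify a shell command; anything not explicitly allowed needs approval."""
    tokens = shlex.split(command)
    if not tokens:
        return Decision.ASK
    # Risky words win over the allowlist, so "npm test && rm -rf x" still asks.
    if any(token.lower() in ALWAYS_ASK_TOKENS for token in tokens):
        return Decision.ASK
    for prefix in AUTO_ALLOWED_PREFIXES:
        if tuple(tokens[: len(prefix)]) == prefix:
            return Decision.ALLOW
    return Decision.ASK


if __name__ == "__main__":
    for cmd in ["npm test", "npx prettier --write .", "rm -rf build", "npm run deploy"]:
        print(cmd, "->", decide(cmd).value)
```

The default is to ask. A new or unrecognized command never runs silently, which keeps the allowlist the only place where autonomy is granted.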

Memorability: MCP in One Picture

Think of MCP as USB-C for AI tools.

Before USB-C, you carried a bag of adapters. Before MCP, every serious workflow needed custom bridges: one script for docs, another for ticketing, another for your scanner. MCP says: expose capabilities as named tools with schemas, authenticate once, and let any compatible client use them.

For a vibe coder, the payoff is simple. You stay in Cursor. The agent pulls structured data from the systems you already use. Less context switching means fewer mistakes and faster shipping.

It also means your security posture can sit in the same loop. If your testing tool exposes an MCP server, verifying a change is not a separate afternoon. It is another tool call in the chain, right next to npm test.
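Plugging a server into the editor is mostly configuration. The sketch below generates a project-level config, assuming the `mcpServers` layout that Cursor documents for `.cursor/mcp.json` (a `command` plus `args` for a locally launched server). Check the current Cursor docs before relying on it. The server names and packages (`example-docs-mcp-server`, `example_scanner_mcp`) are hypothetical placeholders.

```python
import json


def stdio_server(command, args, env=None):
    """Describe a server the editor launches as a local process."""
    entry = {"command": command, "args": list(args)}
    if env:
        entry["env"] = dict(env)
    return entry


def build_config(servers):
    """Wrap server entries in the top-level key the editor is assumed to read."""
    return {"mcpServers": dict(servers)}


# Hypothetical servers: one for docs lookup, one for an external scanner.
config = build_config(
    {
        "docs": stdio_server("npx", ["-y", "example-docs-mcp-server"]),
        "scanner": stdio_server(
            "python",
            ["-m", "example_scanner_mcp"],
            env={"SCANNER_TOKEN": "<set locally, do not commit>"},
        ),
    }
)

if __name__ == "__main__":
    # Print instead of writing to disk, so running the sketch has no side effects.
    print(json.dumps(config, indent=2))
```

Keeping real tokens out of the generated file is the one design choice that matters here: the config belongs in the repo, the credentials do not.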

Actionability: Running a Full-Cycle Session

Here is a practical pattern that works today:

- Brief the agent in the repo. Point it at the ticket, the failing test, or the user story. Let it restate the goal in its own words so you catch misunderstandings early.

- Plan before code. A short outline in a doc or comment thread beats a thirty-file diff you did not expect.

- Implement with tight feedback. Run unit tests and linters after every small change. Fix a failure as soon as it appears, before later edits are built on top of broken code.

- Use MCP for reality checks. Schema from your CMS, response from a staging API, row counts from a database, or an external scan. Pick what proves your change is real.

- Human gate for irreversible moves. Merges to main, infra changes, and anything that touches user data get a final human read. Autonomy is not abdication.

That last step is where teams either win or leak data. Full-cycle autonomy is powerful precisely because it is fast, and fast without verification is how SQL injection and broken access control slip into AI-assisted code. These are the same classes of flaw the OWASP Top 10 catalogs, and they have been reported in studies of AI-generated apps. Your OS needs a verification channel, not only a generation channel.
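The pattern above reduces to a small rule: a change is ready only when every piece of evidence passes, and an irreversible change also needs a human yes. A minimal sketch under those assumptions. The `Evidence` type, the function names, and the stubbed scan are hypothetical. In a real session the scan would be an MCP tool call and the test check would wrap `npm test`.

```python
import subprocess
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Evidence:
    name: str
    passed: bool
    detail: str = ""


def command_check(name: str, argv: List[str]) -> Callable[[], Evidence]:
    """Wrap a command as a check that passes when the exit code is 0."""
    def run() -> Evidence:
        proc = subprocess.run(argv, capture_output=True, text=True)
        tail = (proc.stdout + proc.stderr)[-500:]  # keep only the end of the log
        return Evidence(name, proc.returncode == 0, tail)
    return run


def gather(checks: List[Callable[[], Evidence]]) -> List[Evidence]:
    """Run every check and collect the results."""
    return [check() for check in checks]


def ready_to_ship(evidence: List[Evidence], irreversible: bool, human_approved: bool) -> bool:
    """No evidence or any failure blocks shipping; irreversible moves also need approval."""
    if not evidence or not all(item.passed for item in evidence):
        return False
    if irreversible and not human_approved:
        return False
    return True


if __name__ == "__main__":
    # Stubs so the sketch runs anywhere; swap in command_check("unit tests", ["npm", "test"]).
    checks = [
        lambda: Evidence("unit tests", True),
        lambda: Evidence("external scan", True, "stubbed result"),
    ]
    results = gather(checks)
    print(ready_to_ship(results, irreversible=True, human_approved=False))
```

Treating an empty evidence list as a failure is deliberate: "nothing ran" should never be read as "nothing is wrong."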

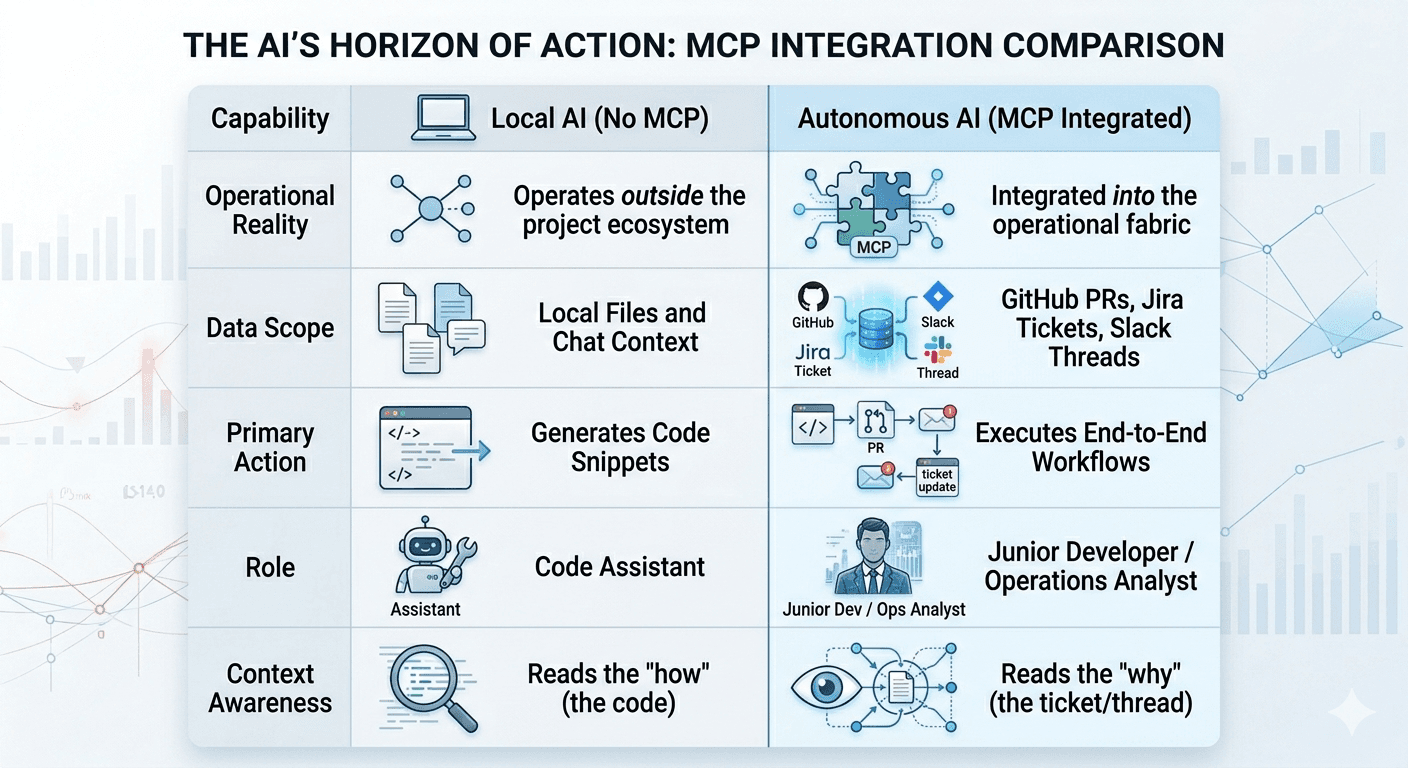

Comparison: The AI’s Horizon of Action

The Part Most Builders Skip

An AI OS does not remove responsibility. It compresses time. Compression rewards good defaults: branch protection, secrets scanning, dependency checks, and application testing that hits your real auth flows and user paths.

If you ship with Cursor and MCP, you are not "done" when the agent says so. You are done when evidence says so: tests, reviews, and checks that match how your app is actually attacked.

That is why we built Axeploit the way we did. You give a URL. AI agents exercise your app like attackers would: sign-up flows, authenticated pages, thousands of checks. No config wall, no security PhD required. It is the external proof that pairs well with an internal AI loop. One-time audits start at $99 if you want a clear baseline before your next release.

Closing the Loop

Cursor gives you the shell. MCP wires the world into that shell. Full-cycle autonomy means you treat the combination like an operating system: explicit permissions, short feedback loops, and tool calls that pull truth from outside the model.

Master that, and you are not just coding with AI. You are running a repeatable system that builds, integrates, and verifies. Keep the human gate on the moves that matter, and ship fast without shipping blind.

Before your next release, get external proof on what you shipped: https://panel.axeploit.com/signup