The Context Window Problem That Defined a Generation of AI Tooling

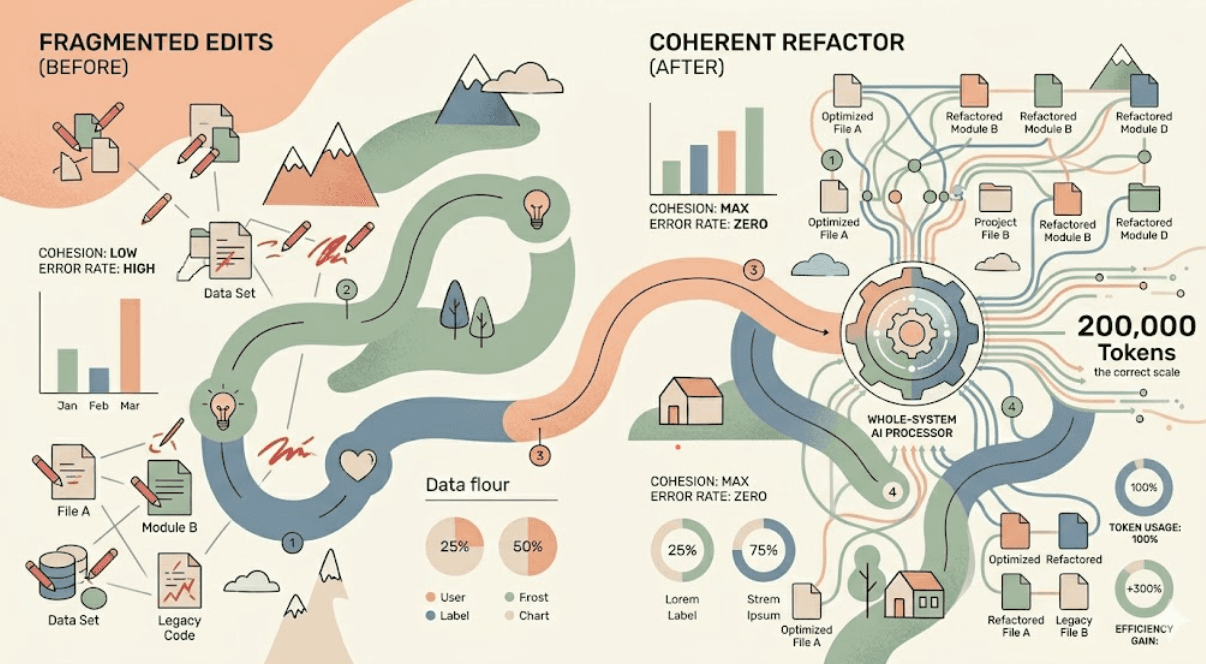

For the first wave of AI coding assistants, context windows were a hard ceiling that shaped everything. With 4K or 8K token limits, you could show the AI a function. Maybe a file. But a system? An architecture? Impossible. The AI could help you write code, but it couldn't understand your code, at least not in the holistic sense that experienced engineers mean when they say they 'understand a system.'

This limitation created an entire genre of workarounds: chunking strategies, retrieval-augmented generation pipelines, summarization layers. Engineers became experts in figuring out how to squeeze the most relevant context into the smallest window. It worked, mostly. But something was always lost in the compression.

What 200K Tokens Actually Means

When Claude 3.5 Sonnet and similar models expanded their context windows to 200,000 tokens, the change wasn't incremental. It was categorical. Two hundred thousand tokens is approximately 150,000 words of English prose. That's a large novel. More practically for engineers: for a small-to-medium project, it can be your entire Node.js backend, your React frontend, your infrastructure-as-code config, your test suite, and your documentation loaded simultaneously into a single working session.
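A rough way to check whether a given codebase fits is to estimate its token count from its character count. The sketch below assumes about 4 characters per token, which is only a heuristic; real tokenizers vary by model and by language. The `reserve` headroom, the file suffixes, the skipped directories, and all function names are hypothetical choices for illustration.

```python
from pathlib import Path

# Assumption: ~4 characters per token. Real tokenizers differ, so this is a ballpark.
CHARS_PER_TOKEN = 4
CONTEXT_BUDGET = 200_000
# Assumption: directories that are usually not worth loading into context.
SKIP_DIRS = {"node_modules", ".git", "dist"}


def estimate_tokens(text: str) -> int:
    """Rough token estimate derived from character count."""
    return len(text) // CHARS_PER_TOKEN


def estimate_repo_tokens(root: str, suffixes=(".ts", ".tsx", ".js", ".md")) -> int:
    """Sum estimated tokens over matching files under root, skipping SKIP_DIRS."""
    total = 0
    for path in Path(root).rglob("*"):
        if any(part in SKIP_DIRS for part in path.parts):
            continue
        if path.is_file() and path.suffix in suffixes:
            total += estimate_tokens(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits_in_context(token_count: int, budget: int = CONTEXT_BUDGET, reserve: int = 20_000) -> bool:
    """Keep `reserve` tokens free for instructions and the model's reply."""
    return token_count <= budget - reserve
```

Calling `fits_in_context(estimate_repo_tokens("."))` gives a quick yes-or-no answer before you commit to a whole-repo session. If the answer is no, the estimate at least tells you how much needs to be left out.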

You're not showing the AI a piece of your system anymore. You're showing it the system. That changes what questions you can ask and what answers you can trust.

The implications for refactoring are profound. For the first time, you can ask an AI to understand a dependency graph that spans dozens of files, trace a data flow through an entire pipeline from database to UI, or identify all the places where a given assumption is encoded across your codebase. And because the answer is grounded in the code itself rather than in a guess about files it never saw, it has a real chance of being right.

Types of Refactors Now Possible

1. Cross-Cutting Concern Migration

Before large context windows, migrating a cross-cutting concern (switching from REST to GraphQL, changing your authentication strategy, or upgrading your logging library) required human engineers who could hold the full system in their heads while working. The AI could assist file by file, but it couldn't see the full picture.

With 200K tokens, you can now load your entire codebase, explain the migration goal, and get a comprehensive change plan that accounts for every caller, every interface, every edge case it can see. Engineers at several organizations we've spoken to have used this approach to migrate authentication systems in a single focused session that previously would have taken a team two sprints.

2. Architectural Style Enforcement

One of the most expensive ongoing costs in large codebases is architectural drift: places where different teams or different eras of the codebase handled similar problems differently. With large context windows, you can ask an AI to do a full-system audit of architectural inconsistency, categorize it, and propose a normalization plan. This used to require a dedicated architecture review that could take weeks.

3. Dependency Graph Analysis and Dead Code Removal

Dead code is expensive. It slows down onboarding, confuses AI assistants making changes, and hides real logic in noise. With a large enough context, AI can do a whole-system dead code analysis and propose deletions with confidence about what's actually unreachable. This is particularly powerful in JavaScript and TypeScript codebases where dynamic imports can make static analysis difficult.
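The core idea behind whole-system dead code analysis can be sketched as a reachability search over the import graph. This is a minimal illustration, not how any particular tool or model does it. The graph, the file names, and the `unreachable_modules` function are hypothetical, and the graph is assumed to contain only static imports.

```python
from collections import deque


def unreachable_modules(imports: dict[str, set[str]], entry_points: set[str]) -> set[str]:
    """Return modules that no entry point reaches through static imports."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        module = queue.popleft()
        for dep in imports.get(module, set()):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return set(imports) - seen


# Hypothetical import graph: module -> modules it imports statically.
graph = {
    "server.ts": {"routes.ts", "db.ts"},
    "routes.ts": {"auth.ts"},
    "auth.ts": set(),
    "db.ts": set(),
    "legacyReport.ts": {"db.ts"},
}

# Candidates for deletion, pending human review.
candidates = unreachable_modules(graph, {"server.ts"})
```

Here `candidates` contains only `legacyReport.ts`. A module loaded through a dynamic import would not appear in a static graph like this one, so it would be flagged incorrectly. That is why the result should be treated as a list of candidates to verify, not a deletion list.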

Workflow Design for Large-Context Refactoring

Having the power of a 200K context window doesn't mean throwing your entire codebase at an AI and hoping for the best. The teams doing this effectively have developed specific workflow patterns.

The Staged Loading Strategy

Load your codebase in layers. Start with the most critical files: your core business logic, your primary interfaces, your database models. Ask the AI to build a mental map before you give it instructions to change anything. Then incrementally add the supporting files. This staged approach prevents the AI from making changes based on an incomplete picture.
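One way to plan the layers ahead of time is sketched below. It assumes you have already assigned each file a layer number (0 for core logic, interfaces, and models; higher numbers for supporting files) and an estimated token count. The `SourceFile` type, the `plan_stages` function, and the policy of skipping files that do not fit are all hypothetical choices.

```python
from dataclasses import dataclass


@dataclass
class SourceFile:
    path: str
    layer: int   # 0 = core logic, interfaces, models; higher = supporting files
    tokens: int  # estimated token count for this file


def plan_stages(files: list[SourceFile], budget: int) -> list[list[str]]:
    """Group files into loading stages by layer, skipping files that exceed the budget."""
    stages: list[list[str]] = []
    used = 0
    for layer in sorted({f.layer for f in files}):
        stage: list[str] = []
        for f in sorted((f for f in files if f.layer == layer), key=lambda f: f.path):
            if used + f.tokens > budget:
                continue  # does not fit; leave it out of this session
            stage.append(f.path)
            used += f.tokens
        if stage:
            stages.append(stage)
    return stages


# Hypothetical project with made-up token estimates.
files = [
    SourceFile("service.ts", layer=0, tokens=40_000),
    SourceFile("models.ts", layer=0, tokens=30_000),
    SourceFile("utils.ts", layer=1, tokens=20_000),
    SourceFile("fixtures.ts", layer=2, tokens=150_000),
]
stages = plan_stages(files, budget=100_000)
```

With these numbers, the first stage holds `models.ts` and `service.ts`, the second holds `utils.ts`, and `fixtures.ts` is left out because it would exceed the budget. Each stage is a natural point to ask the AI to summarize what it has understood so far.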

Explicit Constraint Setting

The bigger the context, the more important explicit constraints become. Before any large-context refactor, state clearly what must not change: APIs your clients depend on, behavioral contracts encoded in tests, security boundaries. Give the AI an explicit do-not-touch list and a rationale for each item.
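A do-not-touch list is easier to keep consistent across sessions if it is generated from data instead of retyped each time. The sketch below is one hypothetical way to do that. The `build_constraint_preamble` function, the wording of the preamble, and the example paths are all assumptions, not a prescribed prompt format.

```python
def build_constraint_preamble(constraints: dict[str, str]) -> str:
    """Render a do-not-touch list, one line per item with its rationale."""
    lines = ["Do not modify the following. Each item includes the reason:"]
    for target, rationale in constraints.items():
        lines.append(f"- {target}: {rationale}")
    lines.append("If a change seems to require touching any of these, stop and ask first.")
    return "\n".join(lines)


# Hypothetical constraints for an example project.
preamble = build_constraint_preamble({
    "src/api/v1/": "public API that external clients depend on",
    "tests/contracts/": "behavioral contracts encoded in tests",
    "src/auth/permissions.ts": "security boundary",
})
```

Putting `preamble` at the top of the session, ahead of the refactoring instructions, keeps the constraints and their rationale in one place that can be reviewed and version-controlled.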

Incremental Commits with Rollback Points

Even with a perfect 200K-token refactor plan, execute it incrementally. Commit after each logical unit of change. Run your characterization tests (see Blog 1 for why this matters). Only proceed when the previous unit is verified. The AI's plan may be excellent. Reality has a way of surfacing edge cases that weren't in the context.
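The apply, verify, commit loop can be sketched as follows. The `verify`, `commit`, and `rollback` callables are placeholders you would wire to your own test runner and version control commands. `ChangeUnit` and `run_incrementally` are hypothetical names, and stopping at the first failure is one possible policy among several.

```python
from typing import Callable, NamedTuple


class ChangeUnit(NamedTuple):
    name: str
    apply: Callable[[], None]  # performs one logical unit of the refactor


def run_incrementally(
    units: list[ChangeUnit],
    verify: Callable[[], bool],      # e.g. run the characterization tests
    commit: Callable[[str], None],   # e.g. create a commit named after the unit
    rollback: Callable[[], None],    # e.g. restore the last committed state
) -> list[str]:
    """Apply units in order; commit each verified unit, roll back and stop on failure."""
    committed: list[str] = []
    for unit in units:
        unit.apply()
        if not verify():
            rollback()
            break
        commit(unit.name)
        committed.append(unit.name)
    return committed
```

The returned list shows how far the plan got, so a failed run leaves you at the last verified commit with a clear record of which unit needs a closer look.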

The Limits of Context Windows

It's worth being clear about what even 200K tokens cannot do. It cannot give the AI institutional knowledge: the human stories, the business decisions, the war stories that explain why the code is the way it is. It cannot replace the judgment of engineers who know where the bodies are buried. And it cannot guarantee that a large-scale AI-generated refactor is semantically correct, even if it's syntactically valid.

The context window expansion is a force multiplier for capable engineers. It is not a replacement for engineering judgment. The teams who misunderstand this distinction will generate impressively large PRs with subtly wrong behavior and spend more time reviewing them than they saved building them.

Think of the 200K context window as giving the AI the same information a new senior engineer would get in their first week of deep onboarding. Helpful, necessary, but still not a substitute for experience.

Looking Forward

Context windows will continue to expand. One million tokens is already available in some preview contexts. The question isn't whether AI will be able to understand increasingly large systems; it's whether engineering teams will develop the discipline and workflow patterns to use that capability safely.

At Axeploit, we're watching this space closely because large-context AI refactoring creates new surface areas for security and performance regressions that traditional review processes aren't designed to catch. The answer isn't slower adoption. It's smarter process design.

The engineers who master large-context refactoring workflows over the next twelve months will have a productivity advantage that compounds. This is not a tool to sleep on.