AI dramatically lowers the barrier to app development. Anyone can prompt a large language model and receive what looks like a complete full-stack application. Tools like Cursor iterate on bugs with little manual effort. Vercel handles one-click deployments. Even non-developers can ship functional SaaS products. Traditional barriers to entry fall away and development velocity soars. Meanwhile, security risk compounds quietly in the background.

Building applications has been democratized, but the risk has been centralized and amplified. Flawed applications now multiply across the internet, and each one is a potential target for attackers scanning for weaknesses. AI produces a convincing application facade, with a polished interface and working features, very quickly. Security demands deep scaffolding and infrastructure that takes time and expertise to build properly. Velocity without rigorous security practices leads to breaches.

The AI-Accelerated Build Pipeline

Before AI transformed development, writing code took significant time and effort. Review gates slowed deployments deliberately. Automated tests enforced quality standards rigorously. After AI, the entire process shrinks from prompt to production in mere hours. GitHub Copilot fills in complete API routes with minimal input. Replit agents scaffold entire database schemas automatically.

Risk now scales multiplicatively with adoption: the number of users, times the number of features, times the flaws inherent in generated code. When all three grow together, exposure grows roughly cubically.

AI owns the synthesis phase, marked in green on the flowchart, and readily produces plausible full-stack combinations, such as a Flask backend paired with a React frontend, that look production-ready at first glance. In the subsequent phases, however, the red surfaces spread across the flowchart and reveal critical vulnerabilities: default credentials left unchanged, CORS policies open to any origin, and database queries built from raw, unsanitized input.
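Of those weaknesses, the raw query is the easiest to show side by side with its fix. The following is a minimal sketch using Python's standard `sqlite3` module; the `users` table, the helper names, and the payload are illustrative assumptions, not code from any particular generated app.

```python
import sqlite3

def find_user_unsafe(conn, name):
    # Anti-pattern: user input is concatenated into the SQL text.
    query = "SELECT id, name FROM users WHERE name = '" + name + "'"
    return conn.execute(query).fetchall()

def find_user_safe(conn, name):
    # Parameterized query: the driver passes `name` as data, never as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (name,)
    ).fetchall()

def make_demo_db():
    # Hypothetical schema, used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn

conn = make_demo_db()
payload = "nobody' OR '1'='1"
leaked = find_user_unsafe(conn, payload)  # the injected OR clause matches every row
blocked = find_user_safe(conn, payload)   # the payload is treated as a literal name
```

With the same input, the concatenated query returns the whole table while the parameterized one returns nothing, which is the difference a scanner or reviewer should be looking for.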

How AI Amplifies Latent Risks

AI models train primarily on public repositories where speed consistently trumps safety as the top priority. This training data bias carries directly into output patterns, including inline secrets embedded casually in demo code and permissive ORM configurations that default to overly trusting inputs.

Vague prompts propagate dangerous gaps. A simple instruction like "Add login functionality" can yield session management without CSRF tokens. Attackers can exploit the missing tokens to trigger state-changing requests, such as unauthorized transfers, from a victim's authenticated session.
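As an illustration of what the missing piece looks like, here is a minimal sketch of a session-bound CSRF token built from the standard library. It assumes a server-side secret and a string session identifier; `SECRET_KEY`, `issue_csrf_token`, and `is_valid_csrf_token` are hypothetical names, and a real application would normally rely on its framework's CSRF support instead.

```python
import hashlib
import hmac
import secrets

# Hypothetical per-deployment secret; in practice it is loaded from configuration.
SECRET_KEY = secrets.token_bytes(32)

def issue_csrf_token(session_id: str) -> str:
    # Bind the token to the session so it cannot be replayed from another one.
    return hmac.new(SECRET_KEY, session_id.encode("utf-8"), hashlib.sha256).hexdigest()

def is_valid_csrf_token(session_id: str, submitted: str) -> bool:
    expected = issue_csrf_token(session_id)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode("utf-8"), submitted.encode("utf-8"))

token = issue_csrf_token("session-abc")
```

The server embeds the token in each form it renders and rejects any state-changing request whose submitted token fails `is_valid_csrf_token` for the current session.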

Rapid iteration hides deeper issues. Chat interfaces refine UI components iteratively based on feedback. However, they skip the schema indexes needed for production performance, causing slowdowns and timeouts under real load.

Volume creates systemic risk at ecosystem scale. When 1000 indie hackers prompt AI models daily for new applications, each resulting app can end up as a development server listening on port 3000, exposed to internet scanners looking for easy targets.

Case study: An AI-generated forum application built from the prompt "user posts functionality" ships a SQLite database that any user can write to by default. Guest users escalate privileges by swapping the record ID in a request (an insecure direct object reference).
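The ID-swapping problem in this case study comes down to a missing ownership check. A minimal sketch of the check, assuming a hypothetical `posts` table with an `author_id` column and an already-authenticated `current_user_id`:

```python
import sqlite3

def update_post(conn, current_user_id, post_id, new_body):
    """Update a post only if it belongs to the authenticated user."""
    cur = conn.execute(
        # The author_id condition is the ownership check: a swapped post_id
        # that belongs to someone else matches zero rows.
        "UPDATE posts SET body = ? WHERE id = ? AND author_id = ?",
        (new_body, post_id, current_user_id),
    )
    conn.commit()
    return cur.rowcount == 1

# Hypothetical demo data: post 1 belongs to user 1.
forum = sqlite3.connect(":memory:")
forum.execute("CREATE TABLE posts (id INTEGER PRIMARY KEY, author_id INTEGER, body TEXT)")
forum.execute("INSERT INTO posts (id, author_id, body) VALUES (1, 1, 'hello')")
forum.commit()

guest_attempt = update_post(forum, current_user_id=2, post_id=1, new_body="defaced")
owner_attempt = update_post(forum, current_user_id=1, post_id=1, new_body="edited")
```

Putting the ownership condition in the query itself, rather than in a separate lookup, keeps the check and the write in one atomic statement.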

The review barrier often disappears. Pre-AI pull requests showed clear diffs, and reviewers could flag vulnerabilities during code review. AI-generated code frequently merges directly into the main branch without scrutiny.

Emergent Risks in AI Builds

Configuration drift emerges silently as AI tweaks environment variables automatically. AWS S3 buckets accidentally configured as public become low-hanging fruit. Automated S3 scanners harvest exposed data continuously.

Supply chain risks multiply as Copilot suggests unvetted npm packages. The 2016 left-pad incident, in which the removal of one tiny package from npm broke builds across the ecosystem, could easily repeat and break thousands of AI-generated apps simultaneously.

Hallucinated dependencies create chaos. Asked to "use the latest auth library," a model may name a package that does not exist, or pin a vulnerable fork or abandoned repository instead of a stable release.
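One inexpensive guard is to reject any dependency that is not pinned to an exact version or not on a human-reviewed allowlist. The sketch below assumes a pip-style requirements list purely for illustration (the same idea applies to `package.json`); `check_requirements`, the allowlist contents, and the package name `auth-latest` are hypothetical.

```python
import re

# Matches "name==version" with nothing else on the line.
PINNED = re.compile(r"^[A-Za-z0-9_.\-]+==[A-Za-z0-9_.\-+!]+$")

def check_requirements(lines, allowlist):
    """Return a list of human-readable problems; an empty list means the gate passes."""
    problems = []
    for raw in lines:
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if not PINNED.match(line):
            problems.append(f"{line}: not pinned to an exact version")
            continue
        name = line.split("==", 1)[0].lower()
        if name not in allowlist:
            problems.append(f"{name}: not on the reviewed allowlist")
    return problems

problems = check_requirements(
    ["flask==3.0.0", "requests", "auth-latest==0.0.1", "# generated by assistant"],
    allowlist={"flask", "requests"},
)
```

A non-empty result would fail the build, which forces a human to look at any package the model introduced on its own.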

Multi-tenant isolation blindness is particularly dangerous. AI-generated apps often share one database across tenants without isolation controls. Without tenant-scoped queries or row-level security, one tenant can read a sibling tenant's data.

Breach vector example: A newsletter tool built with AI fails to escape subscriber-supplied content. A malicious subscriber submits an XSS payload that runs in the browser of an administrator viewing reports and steals their credentials.
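The fix for this class of bug is to escape untrusted values at the point where they are written into HTML. A minimal sketch with the standard library's `html.escape`; the `render_subscriber_row` helper and the report layout are assumptions for illustration, and a template engine with auto-escaping would serve the same purpose.

```python
import html

def render_subscriber_row(email: str, display_name: str) -> str:
    # Escape every subscriber-controlled value before it reaches the admin report.
    return "<tr><td>{}</td><td>{}</td></tr>".format(
        html.escape(display_name), html.escape(email)
    )

row = render_subscriber_row("mallory@example.com", "<script>alert(1)</script>")
```

The payload is rendered as inert text in the report instead of executing in the administrator's browser.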

Risk Gates for AI Velocity

Preserve AI development speed completely while inserting orthogonal security checks that run in parallel.

Synth Scan: Generate code first, then immediately scan it with the Trivy vulnerability scanner and the Semgrep static analyzer. Block the pipeline if any SQL injection pattern is detected.
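To make the idea of a blocking pattern concrete, here is a deliberately simplified stand-in for the kind of rule a static analyzer applies. It is not Semgrep's or Trivy's implementation; it is a sketch that uses Python's `ast` module to flag `.execute()` calls whose query is assembled with f-strings, concatenation, `%` formatting, or `.format()`. The function name and sample snippet are hypothetical.

```python
import ast

def find_string_built_sql(source: str):
    """Return sorted line numbers of .execute() calls with dynamically built queries."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if not isinstance(node, ast.Call) or not node.args:
            continue
        func = node.func
        if not (isinstance(func, ast.Attribute) and func.attr == "execute"):
            continue
        query = node.args[0]
        # f-strings parse as JoinedStr; "+" and "%" formatting parse as BinOp.
        if isinstance(query, (ast.JoinedStr, ast.BinOp)):
            findings.append(node.lineno)
        # "...".format(...) parses as a Call on an attribute named "format".
        elif (
            isinstance(query, ast.Call)
            and isinstance(query.func, ast.Attribute)
            and query.func.attr == "format"
        ):
            findings.append(node.lineno)
    return sorted(findings)

# Hypothetical generated snippet: line 2 is parameterized, lines 3 and 4 are not.
sample = '''
cur.execute("SELECT * FROM users WHERE id = ?", (uid,))
cur.execute(f"SELECT * FROM users WHERE id = {uid}")
cur.execute("SELECT * FROM users WHERE name = '" + name + "'")
'''
flagged_lines = find_string_built_sql(sample)
```

A gate would exit non-zero whenever `flagged_lines` is non-empty. A real scanner covers far more cases, including queries built in a variable before the call, so this sketch only illustrates the shape of the check.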

Policy CI: Run Checkov against all infrastructure code to validate configurations. Reject deployments containing public S3 buckets or other misconfigurations.
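The policy itself is simple to state in code. The sketch below is not Checkov's rule format or API; it assumes a hypothetical, already-parsed list of resource dictionaries with `type`, `name`, `acl`, and `block_public_access` keys, and shows the decision a policy gate makes about each bucket.

```python
# Canned S3 ACLs that grant access to everyone.
PUBLIC_ACLS = {"public-read", "public-read-write"}

def find_public_buckets(resources):
    """Return names of buckets that are public or lack a public-access block."""
    violations = []
    for res in resources:
        if res.get("type") != "s3_bucket":  # hypothetical resource type label
            continue
        acl = res.get("acl", "private")
        blocks_public = res.get("block_public_access", False)
        # Default-deny: a bucket with no explicit public-access block is rejected.
        if acl in PUBLIC_ACLS or not blocks_public:
            violations.append(res.get("name", "<unnamed>"))
    return violations

# Hypothetical plan contents for illustration.
plan = [
    {"type": "s3_bucket", "name": "reports", "acl": "private", "block_public_access": True},
    {"type": "s3_bucket", "name": "assets", "acl": "public-read", "block_public_access": False},
    {"type": "vm", "name": "web"},
]
violations = find_public_buckets(plan)
```

The CI job would fail the deployment when `violations` is non-empty and print the offending bucket names.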

Runtime Proxy: Deploy API gateway wrappers around all endpoints. Enforce authorization checks universally regardless of application code quality.

Chaos Validate: Deploy canary releases to production. Hammer new endpoints with ffuf fuzzing tools to validate resilience before full rollout.

Hardened prompt-to-prod pipeline for CRM application:

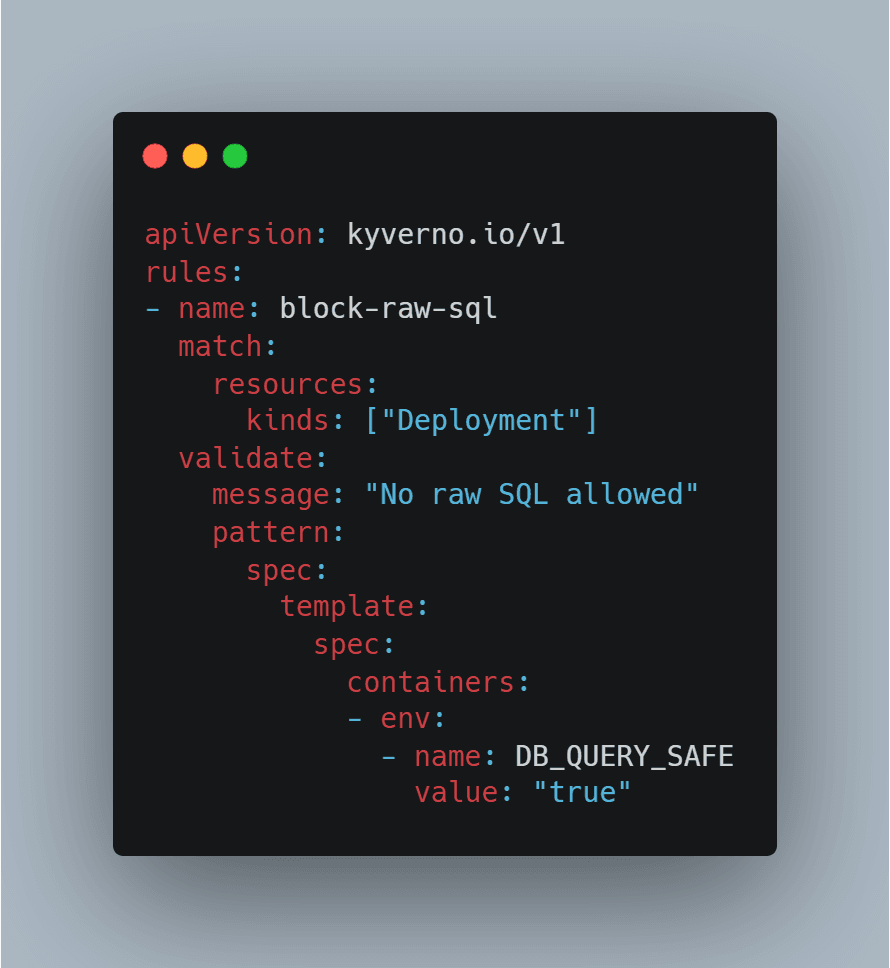

Prompt -> AI Code -> Bandit (block on high-severity findings) -> tfsec IaC scan -> Deploy via ArgoCD with Kyverno policies

Example gate for /users endpoint:
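A minimal sketch of such a gate, written as a pure function that a gateway wrapper could call before forwarding a request. The `Principal` type, the `admin` and `member` roles, and the specific rules are assumptions for illustration; the point is that the decision is made outside the generated application code.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass(frozen=True)
class Principal:
    user_id: int
    role: str  # hypothetical roles: "admin" or "member"

def gate_users_request(method: str, path: str, principal: Optional[Principal]) -> int:
    """Return 200 to forward the request, or the HTTP error status to send instead."""
    parts = [p for p in path.split("/") if p]
    if not parts or parts[0] != "users":
        return 404  # this gate only fronts /users
    if principal is None:
        return 401  # no authenticated caller
    if len(parts) == 1:
        # Listing or creating users is admin-only in this sketch.
        return 200 if principal.role == "admin" else 403
    if len(parts) == 2 and parts[1].isascii() and parts[1].isdigit():
        if principal.role == "admin":
            return 200
        is_owner = principal.user_id == int(parts[1])
        # Members may read or update their own record, but not delete it.
        if is_owner and method in {"GET", "PUT", "PATCH"}:
            return 200
        return 403
    return 404

member = Principal(user_id=7, role="member")
admin = Principal(user_id=1, role="admin")
```

Because the gate depends only on the method, path, and caller identity, it can be tested exhaustively without running the application behind it.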

Audit: Log all prompts plus generated code diffs comprehensively. Correlate security incidents back to specific AI generations for continuous improvement.
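One way to make that correlation possible is to store each prompt together with the diff it produced under a stable identifier. A minimal sketch using only the standard library; the record fields and the `build_audit_record` name are assumptions, not a prescribed schema.

```python
import difflib
import hashlib
import json
from datetime import datetime, timezone

def build_audit_record(prompt: str, before: str, after: str, path: str) -> dict:
    """Pair a prompt with the unified diff of the code it generated."""
    diff = "".join(
        difflib.unified_diff(
            before.splitlines(keepends=True),
            after.splitlines(keepends=True),
            fromfile=f"a/{path}",
            tofile=f"b/{path}",
        )
    )
    digest = hashlib.sha256((prompt + "\0" + diff).encode("utf-8")).hexdigest()
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "path": path,
        "prompt": prompt,
        "diff": diff,
        # Short identifier to reference this generation from incident reports.
        "generation_id": digest[:16],
    }

record = build_audit_record(
    prompt="Add login functionality",
    before="DEBUG = True\n",
    after="DEBUG = False\n",
    path="settings.py",
)
log_line = json.dumps(record)  # one JSON object per line suits append-only logs
```

When an incident is traced to a file and commit, the matching `generation_id` leads back to the prompt that produced the flawed code.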

Scaling Safe AI Builds

Indie developers now ship weekly with AI acceleration. Enterprise teams deploy daily. Security gates must run in parallel with the build so they do not slow that pace.

Tooling: Configure pre-commit hooks and GitHub Actions checks tuned for AI-generated code. Fail fast and visibly on common vulnerabilities, before flawed code reaches the main branch.

Community: Share secure prompt templates across the ecosystem. Always include "row-level security plus prepared statements" in generation instructions.

Hybrid model: Let AI handle core feature implementation while humans review only critical configurations and infrastructure.

Metrics: Track how long each vulnerability stays deployed before it is detected (its dwell time), and target under 24 hours. Focus less on zero-day counts and more on detection speed.
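The metric is straightforward to compute once deployment and detection timestamps are recorded. A minimal sketch, assuming dwell time is measured from deployment to detection and that findings arrive as `(finding_id, deployed_at, detected_at)` tuples; both the data shape and the function names are illustrative.

```python
from datetime import datetime, timedelta

DWELL_TARGET = timedelta(hours=24)  # the 24-hour target from the text

def dwell_time(deployed_at: datetime, detected_at: datetime) -> timedelta:
    return detected_at - deployed_at

def over_target(findings):
    """Return the IDs of findings whose dwell time exceeded the target."""
    return [
        finding_id
        for finding_id, deployed_at, detected_at in findings
        if dwell_time(deployed_at, detected_at) > DWELL_TARGET
    ]

# Hypothetical findings for illustration.
deployed = datetime(2025, 1, 1, 9, 0)
findings = [
    ("open-cors", deployed, deployed + timedelta(hours=3)),
    ("public-bucket", deployed, deployed + timedelta(hours=30)),
]
late = over_target(findings)
```

Tracking the list of late findings over time shows whether the gates are actually shortening detection, independent of how many issues are found.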

Cost: Security gates consume only about 5% of total cycle time. The reduction in risk across the whole portfolio more than pays for that investment.

Case study: A design studio uses AI to build 50 microservices. Pre-deploy gates catch 80% of issues automatically, and runtime blocks handle the remaining 20%.

Reframed: Velocity Meets Vigilance

AI erases most of the traditional friction in building software. Risk demands that some of that friction be restored strategically through systematic gates. Layer checks immediately after synthesis and before runtime. Prompt AI models freely to maximize creativity and speed, then secure the output ruthlessly through automation and policy. Applications that endure balance velocity and vigilance in equal measure.