In the SolarWinds SUNBURST campaign, the attackers maintained access to compromised networks for as long as nine months before detection. Their operational discipline was striking: they limited the number of systems they moved to, they operated during business hours in the victim's timezone, they favored legitimate credentials and remote access tools over noisy custom tooling wherever they could, and they matched the activity patterns of the IT administrators whose credentials they had compromised. From the perspective of a SOC analyst reviewing individual alerts, nothing looked anomalous. Every action was something a legitimate admin might do. The anomaly was visible only in the aggregate: in the pattern of which systems were accessed, in what order, over what timeframe, and with what implicit objective.

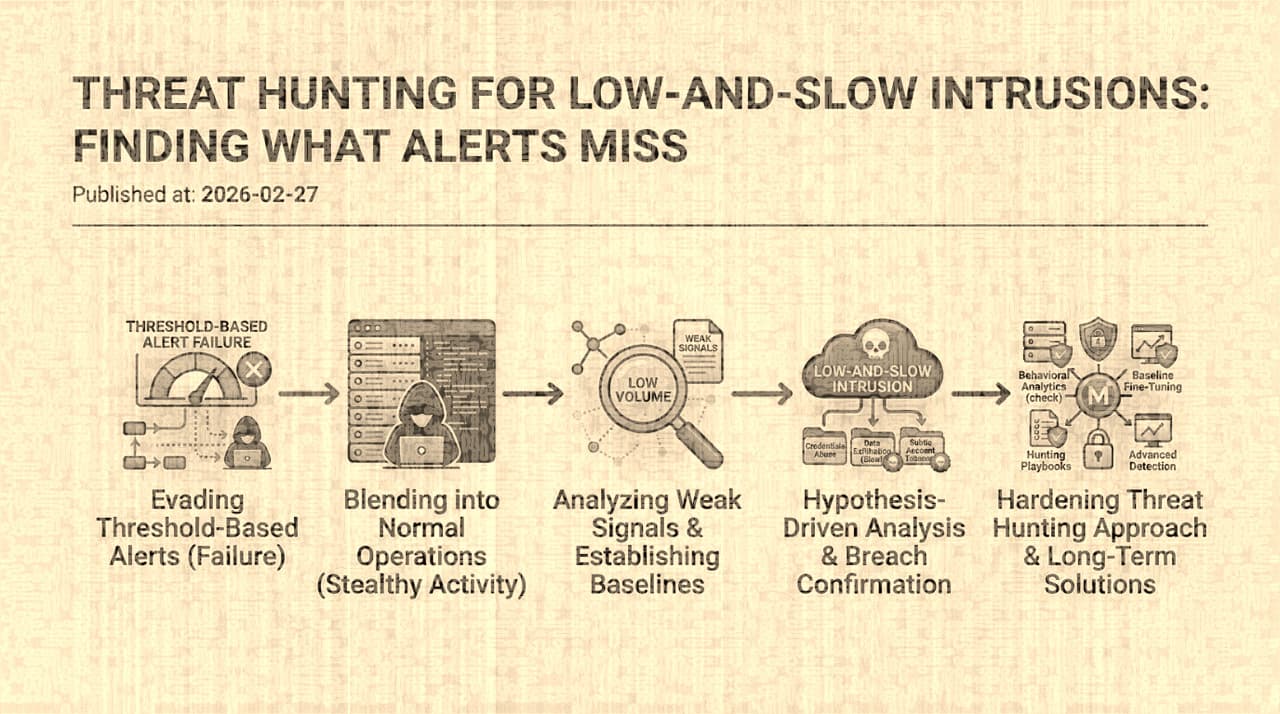

This is the defining characteristic of low-and-slow intrusion campaigns: they are not invisible. The telemetry exists. The events are logged. But each individual event is below every detection threshold, and the pattern that connects them into an intrusion narrative is visible only to an analyst who is actively looking for it with the right hypothesis and the right time window.

Why Alert-Driven Detection Fails Against Patient Adversaries

Modern SOCs are built around a detection pipeline: logs flow into a SIEM, detection rules evaluate events against patterns, alerts are generated when patterns match, analysts triage alerts, and confirmed incidents are escalated. This pipeline is effective against threats that produce distinctive signals: known malware signatures, brute-force login attempts, vulnerability exploitation, and data exfiltration at volume.

Against threats designed to stay below the detection surface, it fails for three structural reasons:

Detection rules encode known-bad patterns. A rule that fires on "more than 50 failed logins in 5 minutes" catches brute-force attacks. It does not catch an attacker who tries one credential per account across 50 accounts, each from a different IP, at a rate that looks like normal failed logins. The attacker learns the rule's logic (which is often discoverable through vendor documentation or common configuration) and stays below its parameters. The rule works perfectly, but only for the threat it was designed to detect.
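The gap between the rule and the spray can be sketched in a few lines. This is an illustration under stated assumptions, not a production detection: the event tuples, the function names, the choice to key the threshold on the target account, and the `known_ips` lookup are all hypothetical.

```python
from collections import defaultdict
from datetime import datetime, timedelta

# Each event is a hypothetical (timestamp, source_ip, account) tuple
# describing one failed login.

def threshold_rule_fires(events, limit=50, window=timedelta(minutes=5)):
    """Return True if any single account has more than `limit` failures inside `window`."""
    by_account = defaultdict(list)
    for ts, _ip, account in events:
        by_account[account].append(ts)
    for times in by_account.values():
        times.sort()
        start = 0
        for end in range(len(times)):
            # Advance the left edge until the span fits inside `window`.
            while times[end] - times[start] > window:
                start += 1
            if end - start + 1 > limit:
                return True
    return False

def accounts_failing_from_unfamiliar_ips(events, known_ips):
    """Return the accounts that failed a login from an IP outside their known set."""
    return {
        account
        for _ts, ip, account in events
        if ip not in known_ips.get(account, set())
    }

# A spray: 50 accounts, one attempt each, every attempt from a different IP,
# spaced 10 minutes apart.
t0 = datetime(2024, 1, 8, 9, 0)
spray = [
    (t0 + timedelta(minutes=10 * i), f"203.0.113.{i + 1}", f"user{i:02d}")
    for i in range(50)
]
```

On the `spray` data the threshold rule stays silent, while the aggregate view returns all 50 accounts. The second function is a hunting query rather than a rule: it only becomes useful once someone decides to ask how many distinct accounts failed from unfamiliar sources over a long window.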

Individual events lack context. A single AssumeRole event in AWS CloudTrail is normal. A single PowerShell execution on a Windows endpoint is normal. A single file copy operation is normal. The SOC analyst triaging an alert for one of these events has no reason to escalate it. But an AssumeRole followed by IAM enumeration, then S3 bucket listing, then a staged file copy is a reconnaissance and exfiltration sequence. The sequence is meaningful; the individual events are not. And most detection pipelines evaluate events individually.

Retention windows are too short. Low-and-slow campaigns unfold over weeks or months. If your SIEM retains detailed logs for 30 days, an attacker who spaces their activities across 45 days ensures that the earliest events in the sequence have aged out of your searchable data by the time the latest events occur. You cannot reconstruct the full sequence because the beginning of the story has been deleted. This is not an accident: sophisticated attackers are aware of typical retention periods and pace their operations accordingly.
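The arithmetic is simple enough to check directly. The sketch below assumes a campaign with four steps spaced 15 days apart (the dates and the function name are made up for illustration) and asks which steps are still searchable on the day of the last one.

```python
from datetime import date, timedelta

def searchable_events(event_dates, today, retention_days):
    """Return the events that are still inside the retention window on `today`."""
    cutoff = today - timedelta(days=retention_days)
    return [d for d in event_dates if d >= cutoff]

# Hypothetical campaign: one step every 15 days, spanning 45 days in total.
start = date(2024, 1, 1)
campaign = [start + timedelta(days=n) for n in (0, 15, 30, 45)]
today = campaign[-1]

visible_30 = searchable_events(campaign, today, retention_days=30)
visible_90 = searchable_events(campaign, today, retention_days=90)
```

With 30 days of retention, the first step is already gone when the last one lands, so `visible_30` holds only three of the four events. With 90 days, `visible_90` holds the whole sequence.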

Threat Hunting as a Different Analytical Paradigm

Threat hunting inverts the alert-driven model. Instead of waiting for a detection rule to fire and then investigating, the hunter starts with a hypothesis about adversary behavior and proactively searches the data for evidence that supports or refutes it.

A good hunting hypothesis is specific, testable, and tied to an adversary objective:

- "An attacker who has compromised a developer laptop would use the developer's cloud credentials to enumerate resources outside the developer's normal access pattern. I should look for cloud API calls from developer-role identities that access resources the developer has never previously accessed."

- "An attacker establishing persistence in our Active Directory would create or modify service accounts or scheduled tasks. I should look for new service principal names, new scheduled tasks on domain controllers, or password resets on service accounts that were not requested through our ticketing system."

- "An attacker exfiltrating data from our environment would stage files in a shared location before transfer. I should look for unusual file copy patterns to network shares or cloud storage buckets, especially from identities that do not normally perform bulk data operations."

Each hypothesis maps to specific telemetry sources, specific query patterns, and specific indicators that would confirm or deny the hypothesis. The hunt is a structured investigation, not a browse through dashboards.
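As one example of turning a hypothesis into a query, the first hypothesis above (developer identities touching resources they have never accessed before) reduces to a set difference between a historical window and a recent one. The sketch below assumes the logs have already been reduced to `(identity, resource)` pairs; the function name and the sample identities and resource names are hypothetical.

```python
from collections import defaultdict

def first_seen_access(history, recent):
    """Return {identity: resources} seen in `recent` but never in `history`.

    Both arguments are iterables of (identity, resource) pairs.
    """
    seen = defaultdict(set)
    for identity, resource in history:
        seen[identity].add(resource)

    findings = defaultdict(set)
    for identity, resource in recent:
        if resource not in seen[identity]:
            findings[identity].add(resource)
    return dict(findings)

# Hypothetical data: 90 days of history versus the last 7 days.
history = [
    ("dev-alice", "s3://app-artifacts"),
    ("dev-alice", "ecr://app-images"),
    ("dev-bob", "s3://app-artifacts"),
]
recent = [
    ("dev-alice", "s3://app-artifacts"),
    ("dev-alice", "s3://finance-exports"),
    ("dev-alice", "iam://roles"),
    ("dev-bob", "s3://app-artifacts"),
]
leads = first_seen_access(history, recent)
```

The output is a list of leads, not verdicts. New projects and on-call rotations also produce first-time access, so each finding still needs the context the hypothesis calls for.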

The Telemetry That Matters

Not all logs are equally useful for hunting. The telemetry that distinguishes a slow intrusion from normal operations is the telemetry that captures identity-level behavior over time: who did what, to which resources, in what sequence, from what context.

Identity and authentication logs. These are the most valuable hunting telemetry because they reveal the "who" dimension. They include authentication events (successful and failed), MFA events, session creation, privilege escalation, role assumption, and impersonation events. The hunting question is: "Is this identity's behavior consistent with its historical baseline?" A developer account that suddenly begins accessing production infrastructure, a service account that starts making interactive API calls, or a recently created account that immediately accesses sensitive resources: these are identity-level anomalies that individual events do not reveal.

Cloud control-plane logs. CloudTrail (AWS), Cloud Audit Logs (GCP), and the Activity Log (Azure) record API calls to cloud management services. For hunting, the value is in the sequence: GetCallerIdentity → ListRoles → AssumeRole → ListBuckets → GetObject is a reconnaissance-and-exfiltration playbook. Each call is normal in isolation. The sequence, from a recently compromised identity, is an intrusion.
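Sequence matching of this kind can be sketched as an ordered-subsequence search per identity, bounded by a time span. The code assumes events have already been normalized to `(timestamp, identity, event_name)` tuples and attributed to the originating identity, which is not automatic, since calls made after AssumeRole appear under the assumed-role session. It also assumes object-level events such as GetObject are being collected at all. The function name and the 14-day span are illustrative choices.

```python
from collections import defaultdict
from datetime import datetime, timedelta

PLAYBOOK = ["GetCallerIdentity", "ListRoles", "AssumeRole", "ListBuckets", "GetObject"]

def identities_matching(events, pattern=PLAYBOOK, max_span=timedelta(days=14)):
    """Return identities whose events contain `pattern` in order within `max_span`.

    Unrelated events may be interleaved between the steps of the pattern.
    """
    by_identity = defaultdict(list)
    for ts, identity, name in events:
        by_identity[identity].append((ts, name))

    hits = set()
    for identity, rows in by_identity.items():
        rows.sort()
        # Try every occurrence of the first step as a possible starting point.
        for i, (start_ts, name) in enumerate(rows):
            if name != pattern[0]:
                continue
            step, end_ts = 1, start_ts
            # Greedily take the earliest later event that matches each next step.
            for ts, later_name in rows[i + 1:]:
                if step == len(pattern):
                    break
                if later_name == pattern[step]:
                    step += 1
                    end_ts = ts
            if step == len(pattern) and end_ts - start_ts <= max_span:
                hits.add(identity)
                break
    return hits
```

The time bound cuts both ways. A short span reduces noise from identities that legitimately make all five calls over a quarter, but an attacker who paces the steps across a longer period falls outside it, so the span is itself a hunting parameter to vary.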

Endpoint Detection and Response (EDR) telemetry. Process creation, file operations, network connections, registry changes, and command-line arguments provide the data for hunting living-off-the-land techniques, in which attackers use legitimate system tools (PowerShell, WMI, certutil, BITSAdmin) for malicious purposes. The hunting value is in identifying unusual use patterns of normal tools: PowerShell with encoded commands, certutil downloading files, schtasks creating scheduled tasks from unusual parent processes.
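A first pass over process-creation events for these three patterns might look like the sketch below. The event field names (`command_line`, `parent_image`), the reason labels, and the allowlist of expected schtasks parents are assumptions for illustration. The regular expressions cover only a few common spellings of each technique and will miss obfuscated variants.

```python
import re

# PowerShell started with -e, -ec, -enc, or -EncodedCommand followed by a payload.
ENCODED_PS = re.compile(r"powershell(?:\.exe)?\b.*\s-e(?:c|nc|ncodedcommand)?\s+\S", re.I)
# certutil used with -urlcache and a URL, a common download idiom.
CERTUTIL_DL = re.compile(r"certutil(?:\.exe)?\b.*[-/]urlcache\b.*https?://", re.I)
# schtasks creating a new task.
SCHTASKS_CREATE = re.compile(r"schtasks(?:\.exe)?\b.*/create\b", re.I)

# Hypothetical allowlist: parents that legitimately create tasks in this environment.
EXPECTED_SCHTASKS_PARENTS = {"deploy-agent.exe"}

def flag_process_event(event):
    """Return a list of reasons this process-creation event deserves a closer look."""
    cmd = event["command_line"]
    parent = event["parent_image"].lower()
    reasons = []
    if ENCODED_PS.search(cmd):
        reasons.append("powershell_encoded_command")
    if CERTUTIL_DL.search(cmd):
        reasons.append("certutil_download")
    if SCHTASKS_CREATE.search(cmd) and parent not in EXPECTED_SCHTASKS_PARENTS:
        reasons.append("schtasks_unusual_parent")
    return reasons
```

For example, `flag_process_event({"command_line": "schtasks /create /tn Updater /tr c:\\x.exe /sc daily", "parent_image": "WINWORD.EXE"})` returns one reason, because a word processor is not on the expected-parent list. The same command launched by `deploy-agent.exe` returns none.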

DNS logs. DNS query logs reveal external communication patterns that can indicate command-and-control channels, data exfiltration via DNS tunneling, or domain generation algorithm (DGA) activity. A host that queries newly registered domains, domains with high-entropy subdomains, or any domain at unusual times is producing signals that are invisible in other telemetry sources.
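The "high entropy in the subdomain" signal can be approximated with Shannon entropy over the characters to the left of the registered domain. The sketch below makes two simplifying assumptions that a real hunt would replace: it treats the last two labels as the registered domain instead of consulting a public suffix list, and it uses illustrative values for the entropy threshold and minimum length.

```python
import math
from collections import Counter

def shannon_entropy(text):
    """Shannon entropy of the character distribution in `text`, in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    n = len(text)
    return -sum((c / n) * math.log2(c / n) for c in counts.values())

def high_entropy_queries(queries, registered_labels=2, threshold=3.5, min_length=16):
    """Return queries whose subdomain portion is long and high in entropy."""
    flagged = []
    for query in queries:
        labels = query.rstrip(".").lower().split(".")
        # Everything left of the (naively determined) registered domain.
        subdomain = "".join(labels[:-registered_labels])
        if len(subdomain) >= min_length and shannon_entropy(subdomain) >= threshold:
            flagged.append(query)
    return flagged

queries = [
    "www.example.com",
    "mail.example.org",
    "a9f3k2z8q1x7m4b6c0d5.example.com",
]
suspicious = high_entropy_queries(queries)
```

The length floor matters because short labels cannot reach high entropy, and because CDNs and cloud services legitimately use long random-looking hostnames. Expect to pair this with an allowlist before the output is useful.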

Role-Based Behavioral Baselines

The single highest-ROI analytical technique for hunting is role-based behavioral baselining. The observation is that "normal" behavior varies dramatically by role: what is normal for a production SRE (accessing infrastructure management tools at 3 AM during an incident) is deeply abnormal for a finance analyst. A global baseline that models "average user behavior" generates so many false positives that it is operationally useless. A role-based baseline that models "what does a finance analyst typically access, when, from where, and how?" produces much stronger anomaly signals.

Building these baselines requires historical data (typically 30 to 90 days of behavioral history per role), a mapping of users to roles (which organizational structures and HR systems provide), and the analytical capability to identify deviations (statistical anomaly detection, peer-group analysis, or simpler threshold-based approaches applied per role rather than globally).
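A minimal version of the per-role approach is a z-score computed against the role's own history rather than the whole population. In the sketch below, the metric (daily count of infrastructure resources accessed), the role names, the sample values, and the `min_std` floor are all assumptions chosen for illustration.

```python
from collections import defaultdict
from statistics import mean, pstdev

def build_role_baseline(history, roles):
    """Return {role: (mean, std)} from (user, value) observations.

    `roles` maps each user to a role; `value` is one daily observation
    of whatever metric is being baselined.
    """
    per_role = defaultdict(list)
    for user, value in history:
        per_role[roles[user]].append(value)
    return {role: (mean(vals), pstdev(vals)) for role, vals in per_role.items()}

def role_zscore(user, value, roles, baseline, min_std=1.0):
    """Score `value` against the baseline for the user's role.

    `min_std` keeps very stable roles from producing huge scores for tiny changes.
    """
    mu, sigma = baseline[roles[user]]
    return (value - mu) / max(sigma, min_std)

roles = {"fin-1": "finance", "fin-2": "finance", "sre-1": "sre", "sre-2": "sre"}
history = (
    [("fin-1", v) for v in (2, 3, 2, 4)]
    + [("fin-2", v) for v in (3, 2, 3, 3)]
    + [("sre-1", v) for v in (30, 45, 38)]
    + [("sre-2", v) for v in (50, 42)]
)
baseline = build_role_baseline(history, roles)

# The same observation, 40 resources in a day, scored for two different roles.
finance_score = role_zscore("fin-1", 40, roles, baseline)
sre_score = role_zscore("sre-1", 40, roles, baseline)
```

The same value lands far outside the finance baseline and well inside the SRE one, which is the whole argument for baselining by role. A global baseline over all four users would blur the two populations together.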

The payoff is that when an attacker compromises a credential, their behavior rarely matches the compromised user's historical pattern. The attacker does not know the user's typical access patterns, working hours, IP addresses, or tools. Even a careful attacker who attempts to mimic normal behavior will usually diverge in ways that a good baseline can detect.

Closing the Feedback Loop

The most important (and most frequently neglected) element of a threat hunting program is the feedback loop. Every hunt, whether it finds an intrusion or not, produces information that should improve future capabilities:

- Confirmed intrusions should generate new detection rules (so that the specific attack pattern is caught automatically next time), new hunt hypotheses (derived from the attacker's TTP patterns), and updated baselines (reflecting the organization's current exposure).

- Disproven hypotheses should be documented so that the same hunt is not repeated without new information. They may also reveal telemetry gaps: "I could not validate this hypothesis because we do not log X" is a finding in itself that should inform the telemetry collection strategy.

- Near-misses and ambiguous signals should feed into standing hunt topics that are revisited periodically with fresh data.

Organizations where hunting is a periodic exercise disconnected from detection engineering get diminishing returns. Organizations where hunting findings flow directly into detection rules, baseline updates, and telemetry improvements get a compounding effect: each hunt makes the next one more effective, and the automated detection layer gradually expands to cover patterns that previously required manual hunting.

The honest assessment is that threat hunting is expensive. It requires skilled analysts, extensive telemetry, long retention windows, and analytical tooling. It produces results that are difficult to quantify: you cannot easily measure the value of a breach that did not happen because a hunt detected the intrusion early. But the alternative is to rely entirely on threshold-based detection against an adversary who has read the same detection engineering playbook you have, and so to accept that patient, well-resourced attackers will operate in your environment for months without detection. For most organizations, that is a risk they cannot afford.