You opened Cursor, told the AI to build you a Stripe-integrated SaaS app, and twenty minutes later you had something that worked. You pushed it to GitHub. You deployed. You celebrated.

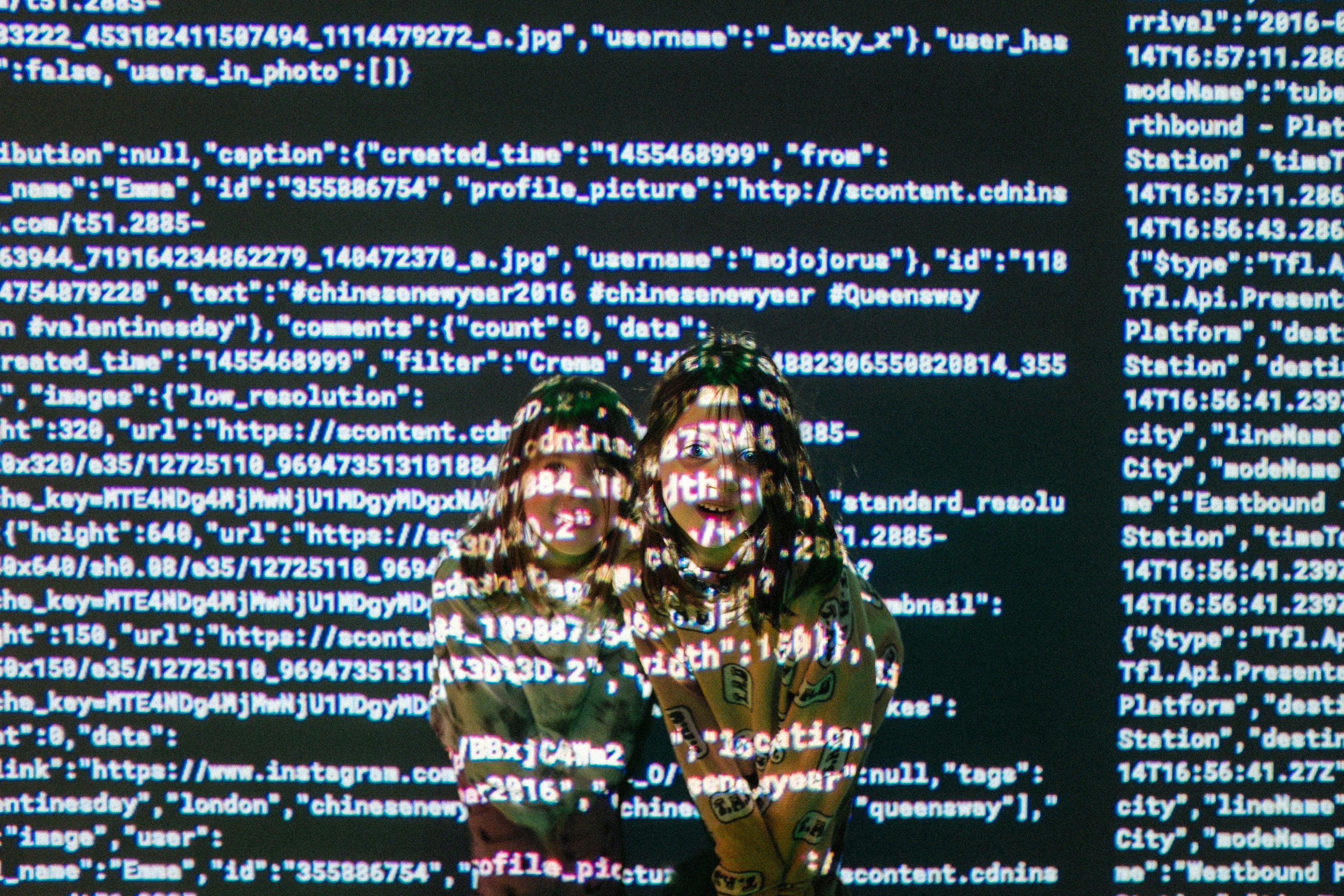

What you didn't notice is that your Stripe secret key, your database URL, and your AWS credentials went along for the ride. They're sitting in a public repo right now. And someone is already looking for them. Vibe coding security starts with understanding this one fact: your speed is the attacker's advantage.

This isn't hypothetical. According to GitGuardian's 2026 State of Secrets Sprawl report, 28.65 million new secrets were leaked in public GitHub commits in 2025 alone. That's a 34% jump from the year before, and the largest single-year increase on record. Leaked AI-service secrets specifically surged 81%, driven by the explosion of vibe-coded apps hitting GitHub every day.

Attackers don't need to break into your server. They just search GitHub.

How Attackers Hunt for Your Secrets

Here is something most vibe coders don't realize: GitHub has a search bar, and attackers use it constantly.

Try this yourself. Sign in to GitHub, open code search, and type path:.env DB_PASSWORD (older guides use the legacy filename:.env qualifier). You'll find thousands of results. Real database passwords, sitting in public repositories, pushed there by people who didn't know any better.

That's the manual version. The automated version is worse.

Attackers run bots that monitor every new public commit on GitHub in real time. When you push code, these bots parse it within minutes. They look for patterns they recognize: strings starting with AKIA (that's an AWS access key prefix), anything resembling a Stripe key starting with sk_live_, or database connection strings with credentials baked in.
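To make that concrete, here is a minimal Python sketch of the kind of pattern matching described above. It is not any real bot's code: the three regexes, the `find_secrets` name, and the length bounds are assumptions chosen for illustration, and production scanners use far larger rule sets.

```python
import re

# Illustrative patterns only; real scanners use many more, and more precise, rules.
SECRET_PATTERNS = {
    # AWS access key IDs: "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Stripe live secret keys start with "sk_live_"; the minimum length is an assumption.
    "stripe_live_key": re.compile(r"\bsk_live_[0-9a-zA-Z]{16,}\b"),
    # Connection strings with user:password@host baked into the URL.
    "db_url_with_password": re.compile(
        r"\b(?:postgres(?:ql)?|mysql|mongodb(?:\+srv)?)://[^\s:/@]+:[^\s@]+@[^\s/]+"
    ),
}


def find_secrets(text):
    """Return (line_number, pattern_name) for every pattern match in text."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, name))
    return findings


if __name__ == "__main__":
    # Fake values: the AWS string is Amazon's documented example key ID.
    sample = "\n".join([
        "DEBUG=true",
        "AWS_ACCESS_KEY_ID=AKIAIOSFODNN7EXAMPLE",
        "STRIPE_SECRET_KEY=sk_live_" + "x" * 24,
        "DATABASE_URL=postgres://admin:hunter2@db.example.com:5432/app",
    ])
    for line_number, name in find_secrets(sample):
        print(f"line {line_number}: {name}")
```

A few lines of regex are enough to flag all three kinds of secret in a freshly pushed file, which is why this kind of scanning is cheap to run at scale.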

When a bot finds a valid AWS key, the attacker can spin up hundreds of EC2 instances to mine cryptocurrency. The key owner wakes up to a bill from Amazon that can run to $50,000. This happens regularly.

The scary part? 70% of secrets that leaked in 2022 are still valid today. Most developers never go back and rotate them. The key you accidentally pushed six months ago is probably still working, still exposed, and still being indexed by these bots.

Why GitHub's Built-In Scanning Isn't Enough

GitHub knows this is a problem. In early 2026, they added 37 new secret detectors covering providers like Vercel, Snowflake, Supabase, and Shopify. Push protection now blocks 39 different token types before they enter your repo.

That's genuinely useful. But it has gaps.

GitHub's scanner skips pushes larger than 50MB. It can't detect paired secrets if they live in different files. It won't block a push that contains more than 1,000 existing secrets. And if you're running private repos on a free plan, you get limited coverage at best.

For a vibe coder who just shipped a project and moved on to the next one, these gaps are exactly where secrets fall through.

Three Layers of Protection Every Vibe Coder Needs

Fixing this doesn't require a security degree. It takes three layers, and you can set up the first two in about ten minutes.

Layer 1: Stop secrets before they enter git.

Add a .gitignore file to every project. Make sure .env, .env.local, and any other files containing credentials are listed in it. This is the single most important thing you can do, and it takes thirty seconds.
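If you want to double-check a project quickly, a small script can tell you which of those entries are missing. The sketch below is a simplification: `missing_ignore_entries` and `REQUIRED_IGNORES` are hypothetical names, and it only looks for exact lines, so it will not recognize a glob such as .env* that already covers the file. Running git check-ignore against a file is the authoritative test.

```python
from pathlib import Path

# The two entries named in the text; extend with your own credential files.
REQUIRED_IGNORES = (".env", ".env.local")


def missing_ignore_entries(gitignore_text, required=REQUIRED_IGNORES):
    """Return the required entries that no line of the .gitignore lists verbatim."""
    listed = set()
    for raw_line in gitignore_text.splitlines():
        line = raw_line.strip()
        if line and not line.startswith("#"):  # skip blank lines and comments
            listed.add(line)
    return [entry for entry in required if entry not in listed]


if __name__ == "__main__":
    path = Path(".gitignore")
    text = path.read_text() if path.exists() else ""
    for entry in missing_ignore_entries(text):
        print(f"add to .gitignore: {entry}")
```

Keep in mind that .gitignore only affects files git is not already tracking. If .env was committed earlier, adding it here does not remove it from the repository.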

Then install Gitleaks as a pre-commit hook. It checks your code against 150+ patterns for known secret formats before anything touches your repository. It runs in milliseconds. If it catches something, the commit gets blocked.
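To show what a pre-commit hook is doing under the hood, here is a hand-rolled stand-in in Python. It is not Gitleaks and does not use its rule set: the two patterns and the function names are assumptions for illustration. The mechanism is the part that carries over: inspect only what is staged, and return a non-zero exit code so git aborts the commit.

```python
import re
import subprocess
import sys

# Two illustrative patterns; a real tool such as Gitleaks ships many more.
PATTERNS = [
    re.compile(r"AKIA[0-9A-Z]{16}"),
    re.compile(r"sk_live_[0-9a-zA-Z]{16,}"),
]


def added_lines_with_secrets(diff_text):
    """Return added lines from a unified diff that match any pattern."""
    hits = []
    for line in diff_text.splitlines():
        # Added lines start with "+"; "+++" marks the file header, not content.
        # (Sketch-level simplification: added content that itself begins
        # with "++" is skipped too.)
        if line.startswith("+") and not line.startswith("+++"):
            if any(pattern.search(line) for pattern in PATTERNS):
                hits.append(line[1:])
    return hits


def main():
    """Scan the staged diff; return 1 to block the commit, 0 to allow it."""
    # --cached limits the diff to what is staged for this commit.
    diff = subprocess.run(
        ["git", "diff", "--cached", "--unified=0"],
        capture_output=True, encoding="utf-8", errors="replace", check=True,
    ).stdout
    hits = added_lines_with_secrets(diff)
    if hits:
        print(f"commit blocked: {len(hits)} possible secret(s) staged", file=sys.stderr)
    return 1 if hits else 0
```

To try it as a real hook, save the file as .git/hooks/pre-commit, add a python3 shebang line at the top and a final line that calls sys.exit(main()), and make it executable. For anything beyond experimenting, use Gitleaks itself rather than this sketch.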

Layer 2: Scan your pipeline for what slips through.

TruffleHog runs in your CI/CD pipeline and goes deeper. It detects 800+ secret types, scans your entire git history (not just the latest commit), and actually tests whether detected secrets are still active. That last part matters because it filters out alerts for keys you have already rotated, so you can focus on the ones that still work.

Layer 3: Continuous automated auditing.

Most vibe coders stop before they reach this layer. They set up a .gitignore, maybe install a hook, and then forget about it until something breaks.

That gap between "I set it up once" and "my app is actually secure" is where real damage happens. This is why we built Axeploit. You submit your URL. No configuration, no security expertise required. A fleet of AI agents scans your application for exposed secrets alongside 7,500+ other vulnerabilities, automatically. It's the kind of audit that used to cost thousands and take weeks, now available as a one-time scan for $99 or continuous monitoring on a monthly plan.

What to Do If You Already Leaked a Secret

If you've already pushed secrets to GitHub, here's your emergency checklist:

- Rotate the key immediately. Go to your provider's dashboard (AWS, Stripe, whatever it is) and revoke the old key. Generate a new one.

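- Remember: the secret is in your git history. Deleting the file in a new commit doesn't remove it from old commits. Use git filter-repo or BFG Repo-Cleaner to scrub it from your entire history, then force-push the rewritten history. Anyone who already cloned or scraped the repo still has the old copy, which is why rotating the key comes first.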

- Check for unauthorized usage. Look at your cloud provider's billing dashboard and API logs. If someone used your key, you'll see unfamiliar activity.

- Scan your entire app. One leaked secret usually means there are others. Run a full audit to find everything.
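The git history point in the checklist is easy to verify for yourself. The sketch below, with the hypothetical helper names `commits_containing` and `scan_repo_history`, walks the output of git log -p across all refs and lists every commit whose patch still mentions a given string. It assumes git is installed and that you run it inside the repository. Before a history rewrite it shows where the secret lives; after one, it should return an empty list.

```python
import subprocess


def commits_containing(log_text, secret):
    """Return commit hashes from `git log -p` output whose patch mentions secret."""
    hits = []
    current = None
    for line in log_text.splitlines():
        if line.startswith("commit "):
            current = line.split()[1]  # start of the next commit's section
        elif current and secret in line and current not in hits:
            hits.append(current)
    return hits


def scan_repo_history(secret, repo_path="."):
    """Search every commit reachable from any ref for the given string."""
    log_text = subprocess.run(
        # --all covers every branch and tag; -p includes each commit's patch.
        ["git", "-C", repo_path, "log", "--all", "-p", "--format=commit %H"],
        capture_output=True, encoding="utf-8", errors="replace", check=True,
    ).stdout
    return commits_containing(log_text, secret)
```

Calling scan_repo_history with the leaked value returns both the commit that added the secret and the later commit that deleted it, because a deletion patch still contains the removed line. Treat an empty result as a sanity check only: it says nothing about copies that were cloned or scraped before the cleanup.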

Your vibe-coded app might be brilliant. But if your keys are public, none of that matters. The fix starts with a scan.

Run your first Axeploit audit now: https://panel.axeploit.com/signup