If you are a startup founder, a CISO, or a modern “vibe coder,” your daily operations are likely built around velocity and agility. You are shipping code quickly, managing remote teams across different time zones, and using generative AI to streamline everything from marketing copy to backend architecture.

But while you are leveraging artificial intelligence to build the future, cybercriminals are using the exact same technology to compromise it.

For years, founders and developers viewed cyber attacks strictly as a technical problem: hackers breaking through firewalls or exploiting outdated software. Today, many of the most devastating breaches bypass your technical perimeter entirely by targeting the human element. The days of poorly spelled phishing emails from a “prince” are over. Welcome to the era of AI social engineering, where attackers don't just steal your passwords; they steal your identity.

The Evolution of Business Email Compromise (BEC)

To understand this new threat, we have to look at the evolution of Business Email Compromise (BEC). Historically, a BEC attack was simple: a hacker would spoof the CEO's email address and message someone in the finance department, urgently requesting a wire transfer to a “vendor” or asking for a batch of digital gift cards.

While rudimentary, it worked well enough to cause billions of dollars in corporate losses. However, standard email filters and basic employee awareness training eventually caught up. People learned to check the sender's domain name and look for grammatical red flags.

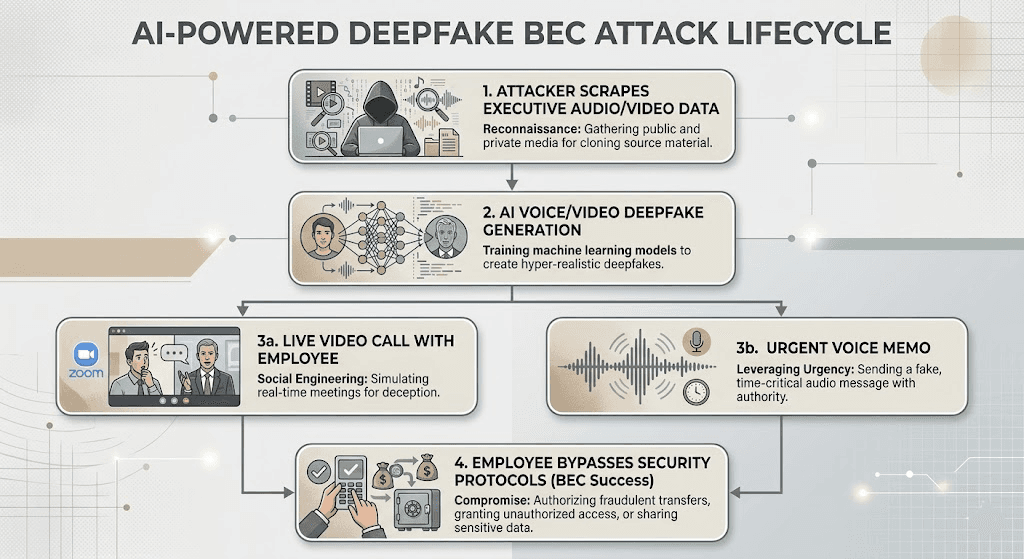

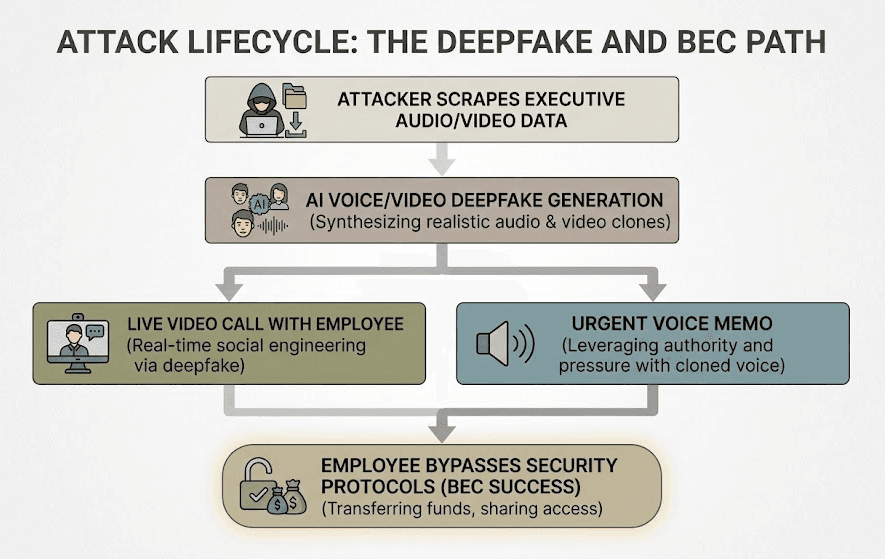

Hackers realized they needed to upgrade their tactics. Enter deepfakes. By weaponizing machine learning models, attackers can now generate hyper-realistic audio and video of your executives, turning a simple email scam into a fully immersive psychological trap.

How Attackers Are Weaponizing AI

Pulling off a sophisticated deepfake attack used to require Hollywood-level server farms. In 2026, it takes a laptop and a few dollars in cloud compute credits.

Voice Cloning in Seconds

You have likely appeared on podcasts, spoken on public webinars, or posted videos to your company's social media. That audio footprint is all an attacker needs. Using modern voice cloning technology, hackers can take as little as three to five seconds of your voice and create a synthetic clone that convincingly mimics your tone, cadence, and accent.

They then use this clone to leave frantic voicemails for your lead developer or CFO: “Hey, it's me. I'm boarding a flight right now and my laptop is locked. I need you to urgently bypass the authorization protocol for the new vendor payment. Do it immediately before the market closes.” The urgency, combined with a voice that sounds exactly like the boss, triggers a panic response that overrides logical security protocols.

Real-Time Video Deepfakes

The threat doesn't stop at audio. Attackers are now deploying real-time video deepfakes in live Zoom or Google Meet calls. By running their own camera feed through a face-swapping AI model, a hacker can appear on screen as a company executive or a trusted vendor.

These aren't glitchy, low-resolution masks anymore. The deepfakes of today can blink, track facial expressions, and maintain lighting consistency. If an employee gets a Slack message from the “CEO” asking them to jump on a quick video huddle, and they see their boss's face and hear their voice authorizing a massive data transfer, they are going to execute the command.

Why Traditional Corporate Security Training Fails

For the past decade, corporate security training has relied on visual and textual cues: check the URL, look for spelling mistakes, don't click suspicious links. This framework offers little protection against a deepfake. When an employee is looking directly at what appears to be their founder on a video call, and hearing their exact voice demanding action, instinctive trust in a familiar face and voice takes over. Humans are hardwired to trust their eyes and ears. You cannot train an employee to outsmart a convincing digital illusion using just a multiple-choice quiz.

Relying purely on human intuition to spot AI anomalies is a losing battle. The solution requires a shift from human-based detection to cryptographic and technical verification.

Defending the Boardroom: Technical Controls for the Deepfake Era

To protect your startup from AI-supercharged social engineering, you must implement systems that assume identity can be easily spoofed.

1. Cryptographic Identity Verification

Identity should never rely solely on a video feed or a voice on the phone. Modern teams must adopt internal cryptographic verification. If an executive requests a sensitive code merge, an infrastructure change, or a financial transfer, the request must be authenticated using hardware security keys (like YubiKeys) or mutual TLS (mTLS) client certificates.
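To make the idea concrete, here is a minimal sketch of a signed-request check. It is an illustration under stated assumptions, not a production design: it uses an HMAC with a shared secret from Python's standard library as a stand-in for the asymmetric signature a hardware key would produce, and the names `sign_request`, `verify_request`, and `MAX_AGE_SECONDS` are hypothetical.

```python
import hashlib
import hmac
import json
import time
from typing import Optional

MAX_AGE_SECONDS = 300  # hypothetical freshness window to limit replay of old requests


def canonical(request: dict) -> bytes:
    """Serialize deterministically so signer and verifier hash the same bytes."""
    return json.dumps(request, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_request(request: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over the canonical request."""
    return hmac.new(key, canonical(request), hashlib.sha256).hexdigest()


def verify_request(request: dict, tag: str, key: bytes,
                   now: Optional[float] = None) -> bool:
    """Accept only a fresh request whose tag matches its exact contents."""
    now = time.time() if now is None else now
    issued_at = request.get("issued_at")
    if not isinstance(issued_at, (int, float)):
        return False
    if abs(now - issued_at) > MAX_AGE_SECONDS:
        return False
    expected = sign_request(request, key)
    # Constant-time comparison avoids leaking how much of the tag matched.
    return hmac.compare_digest(expected.encode("utf-8"), tag.encode("utf-8"))


# Demo with a throwaway key (assumption: real keys live in hardware or a secrets manager).
key = b"demo-key-not-for-production"
request = {"action": "wire_transfer", "amount_usd": 25000,
           "requested_by": "ceo", "issued_at": 1_700_000_000}
tag = sign_request(request, key)
tampered = dict(request, amount_usd=250000)

print(verify_request(request, tag, key, now=1_700_000_060))   # True
print(verify_request(tampered, tag, key, now=1_700_000_060))  # False
```

The point of the sketch is that approval is bound to the exact contents of the request: a convincing face or voice cannot produce a valid tag, and changing the amount after signing invalidates it.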

2. Out-of-Band Authentication

If a request comes in via a video call, verify it through a completely different, secure channel. For example, if the “CEO” asks for an urgent wire transfer on Zoom, the employee must trigger a push notification to the CEO's registered mobile authenticator app. Unless the CEO approves the prompt on that enrolled device, the transaction is hard-blocked.
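The flow above can be sketched as a small fail-closed approval store. This is a hedged illustration only: the class `OutOfBandApprovals`, its method names, and the 120-second expiry are assumptions made for the example, and a real deployment would delegate the push prompt to an actual authenticator service.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class PendingRequest:
    requester: str
    approver: str
    description: str
    expires_at: float
    approved: bool = False


class OutOfBandApprovals:
    """Holds sensitive requests until the named approver confirms on a separate channel."""

    def __init__(self, ttl_seconds: float = 120.0) -> None:
        self.ttl_seconds = ttl_seconds
        self._pending: Dict[str, PendingRequest] = {}

    def open_request(self, requester: str, approver: str, description: str,
                     now: Optional[float] = None) -> str:
        """Register a request and return an unguessable ID to send with the push prompt."""
        now = time.time() if now is None else now
        request_id = secrets.token_urlsafe(16)
        self._pending[request_id] = PendingRequest(
            requester, approver, description, now + self.ttl_seconds)
        return request_id

    def approve(self, request_id: str, device_owner: str,
                now: Optional[float] = None) -> bool:
        """Record approval only if it comes from the approver's own enrolled device in time."""
        now = time.time() if now is None else now
        req = self._pending.get(request_id)
        if req is None or now > req.expires_at or device_owner != req.approver:
            return False
        req.approved = True
        return True

    def may_execute(self, request_id: str, now: Optional[float] = None) -> bool:
        """Fail closed: unknown, expired, or unapproved requests are blocked."""
        now = time.time() if now is None else now
        req = self._pending.get(request_id)
        return req is not None and req.approved and now <= req.expires_at
```

Nothing said on the video call can flip `approved`; only a confirmation arriving from the approver's device through the second channel can, and the default answer is "blocked."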

3. Implement Strict API Authorization (Zero Trust)

Social engineering is usually just the first step. The attacker uses the deepfake to trick an employee into using an internal application, such as an admin dashboard or a financial API, to execute the actual theft.

This is where your DevSecOps pipeline becomes your last line of defense. If your internal APIs are built with a Zero Trust mindset, even a tricked employee shouldn't have the broad permissions required to instantly drain an account or export a massive database.
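As a rough sketch of what that mindset looks like in code, the check below denies by default, scopes each action to specific roles, caps the size of any single request, and requires a second person above a threshold. The policy table, action names, and limits are hypothetical values chosen for illustration, not recommendations.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Optional


@dataclass(frozen=True)
class Policy:
    allowed_roles: FrozenSet[str]
    max_quantity: int         # hard ceiling per request (dollars or rows)
    dual_approval_above: int  # quantities above this need a second person


# Hypothetical policy table; anything not listed here is denied.
POLICIES: Dict[str, Policy] = {
    "payments:create": Policy(frozenset({"finance"}), 50_000, 5_000),
    "customers:export": Policy(frozenset({"data_admin"}), 1_000, 0),
}


def authorize(role: str, user: str, action: str, quantity: int,
              second_approver: Optional[str] = None) -> bool:
    """Return True only if every check passes; the default answer is no."""
    policy = POLICIES.get(action)
    if policy is None:
        return False  # unknown action: deny by default
    if role not in policy.allowed_roles:
        return False  # role is not scoped to this action
    if quantity > policy.max_quantity:
        return False  # over the ceiling, no matter who approves
    if quantity > policy.dual_approval_above:
        # Large requests need a different person to co-sign.
        return second_approver is not None and second_approver != user
    return True


print(authorize("finance", "alice", "payments:create", 1_000))          # True
print(authorize("finance", "alice", "payments:create", 20_000))         # False
print(authorize("finance", "alice", "payments:create", 20_000, "bob"))  # True
```

With limits like these, a single deceived employee can at most initiate a request; completing a large transfer or export still needs a second, independently verified person.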

How Axeploit Fits into Your Security Strategy

You might be wondering: If this is a human problem, how does an automated security scanner help?

Because an attacker always needs your application to actually execute the payload. When a deepfaked executive tricks an employee into altering a database or authorizing a payment, that employee interacts with your software. If your internal applications are misconfigured, lack strict role-based access controls (RBAC), or contain logic flaws, the social engineering attack succeeds effortlessly.

This is where Axeploit becomes crucial. Our automated vulnerability scanner doesn't just look for outdated libraries; it actively tests your live, running applications. Axeploit safely attacks your APIs and internal dashboards from the outside, exactly how a malicious hacker would.

If a developer accidentally left a financial API endpoint unauthenticated, or if an internal tool allows privilege escalation, Axeploit’s dynamic scanner will catch it. We show you exactly how to lock down your business logic so that even if an employee is completely fooled by a deepfake, your infrastructure refuses to let the catastrophic action occur.

Conclusion: Bulletproofing Your Application Against Human Error

The era of AI-generated fraud is here, and it is moving fast. Human intuition and awareness quizzes cannot reliably catch a convincing deepfake, so modern teams must shift from human-based detection to cryptographic and technical verification.

Every attack still has to pass through your application to do damage. Design your infrastructure on the assumption that identity can be spoofed: verify sensitive requests cryptographically, confirm them out of band, and enforce strict role-based access controls (RBAC) so that no single tricked employee can complete a catastrophic action.

You need to ensure your application's armor is thick enough to withstand human error. Axeploit tests your live, running applications by safely attacking your APIs and internal dashboards from the outside, and shows you how to lock down your business logic so that even when an employee is completely fooled by a deepfake, your infrastructure refuses to carry out the request.