If you wanted to design a system that maximized the blast radius of a single compromise, you would probably build something very much like a modern CI/CD pipeline. It has read access to source code, write access to artifact registries, deploy permissions to production infrastructure, and credentials for cloud APIs, package managers, databases, and third-party services. It runs attacker-controllable code (your build scripts) with these permissions by default. And it logs its activity in a format optimized for debugging build failures, not for security forensics.

This is not an exaggeration for rhetorical effect. It is a description of the default configuration of GitHub Actions, GitLab CI, CircleCI, Jenkins, and every other major CI/CD platform. The question is not whether these systems are high-value targets. The question is why we were surprised when they started getting compromised.

The Trust Inversion

Traditional security architecture assumes a hierarchy of trust. Production systems are high-trust and heavily monitored. Development systems are lower-trust and more permissive. Build systems sit somewhere in the middle: they are "just infrastructure."

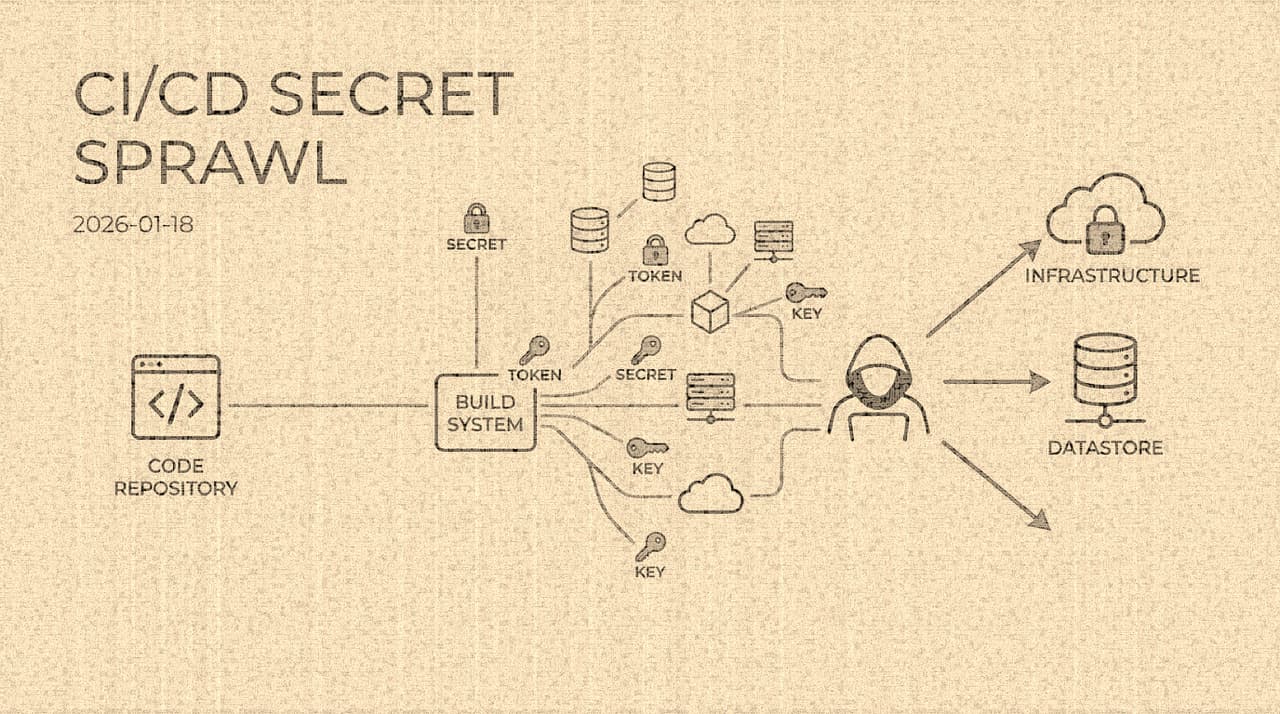

But CI/CD pipelines invert this hierarchy. A build pipeline is the only system in most organizations that simultaneously has access to source code (development), deployment credentials (operations), signing keys (release), and cloud APIs (infrastructure). It is, in terms of raw privilege, the most powerful system in the organization. Yet it is typically managed by a platform team whose primary concern is build speed and developer experience, not security posture.

The result is that the system with the highest privilege concentration receives the lowest security attention. This is the trust inversion, and it is the structural reason why CI/CD compromise is so devastating.

Three Breaches That Proved the Point

Codecov (April 2021). Codecov provided a Bash uploader script that CI pipelines would curl and execute to submit code coverage reports. An attacker modified this script in Codecov's infrastructure to exfiltrate environment variables from every CI job that ran it. For approximately two months, the CI secrets (API tokens, AWS credentials, signing keys) of every customer running the uploader were silently collected. The attack was not sophisticated: it was a one-line modification to a shell script. But because that script ran inside CI environments with access to pipeline secrets, the one line was enough. HashiCorp and Twilio were among the companies that confirmed exposure, and Codecov's customer base of roughly 29,000 organizations was potentially affected. The breach demonstrated that the security of your CI pipeline depends on the security of every tool your pipeline curls from the internet.

SolarWinds / SUNBURST (December 2020). The SolarWinds compromise was a build-system attack at nation-state scale. Attackers infiltrated SolarWinds' build infrastructure and injected a backdoor (SUNBURST) into the Orion software build process. The malicious code was compiled into signed, official update packages and distributed to approximately 18,000 organizations, including multiple U.S. government agencies. The key insight was not the backdoor itself, which was technically unremarkable, but the choice of attack surface. By compromising the build process rather than the source code repository (where code review might have caught the change), the attackers ensured that the malicious code was never visible in version control, only in the compiled output. The build system was a blind spot in SolarWinds' security model.

CircleCI (January 2023). An attacker compromised a CircleCI engineer's laptop, stole a session token that provided access to CircleCI's internal systems, and used that access to exfiltrate customer environment variables and secrets stored in CircleCI's platform. CircleCI advised customers to rotate every secret stored in the platform. The scope was staggering: CircleCI processes billions of builds annually for thousands of organizations, and each of those organizations stores deployment credentials, API tokens, and signing keys in CircleCI's environment variable store. The breach illustrated that when you store secrets in a third-party CI platform, you are extending your trust boundary to include that platform's entire internal security posture: their employee laptops, their session management, their access controls, their monitoring.

These three breaches share a common structure: the attacker did not need to compromise the target organization directly. They compromised the CI infrastructure (the build script, the build server, the CI platform) and inherited the target's own credentials.

Why Secret Sprawl Is Architectural, Not Hygienic

The standard advice about CI/CD secrets goes something like: "Don't hardcode secrets. Use a secrets manager. Rotate regularly." This is fine as hygiene guidance, but it fundamentally misdiagnoses the problem.

Secret sprawl in CI/CD is not caused by careless developers putting passwords in code. It is caused by the architecture of modern software delivery. A typical deployment pipeline requires credentials for:

- Source code repositories (to pull code)

- Package registries (to pull dependencies and push artifacts)

- Container registries (to push and pull images)

- Cloud providers (to provision infrastructure and deploy)

- Database systems (to run migrations)

- Monitoring services (to register deployments)

- Third-party APIs (for integration testing)

- Signing infrastructure (to sign artifacts and commits)

- Notification services (to report build status)

Each of these requires a credential. Each credential has a scope, a lifetime, and an access pattern. In the ideal world, every credential would be short-lived, narrowly scoped, and never stored at rest. In the real world, many of these credentials are long-lived tokens stored as environment variables because that is what the tool's documentation recommended, and nobody has had time to migrate to a better pattern.

The proliferation is not carelessness. It is the natural consequence of a deployment pipeline that interacts with a dozen systems, each of which has its own authentication model, its own credential format, and its own concept of least privilege. The "secret sprawl" is isomorphic to the system integration complexity.
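To make "scope, lifetime, and access pattern" concrete, here is a minimal sketch of a credential inventory that flags the properties discussed above. Everything in it is an assumption for illustration: the `Credential` fields, the one-hour `MAX_LIFETIME` threshold, and the sample entries are hypothetical and do not come from any particular CI platform.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Credential:
    name: str                      # hypothetical label for the credential
    system: str                    # which integration it authenticates to
    scope: str                     # "*" stands in for "effectively unscoped"
    lifetime: Optional[timedelta]  # None means the credential never expires
    stored_at_rest: bool           # True if it sits in the CI secret store


# Arbitrary threshold chosen for this sketch, not a recommendation.
MAX_LIFETIME = timedelta(hours=1)


def risk_flags(cred: Credential) -> List[str]:
    """Return the ways a credential departs from short-lived, scoped, unstored."""
    flags = []
    if cred.lifetime is None:
        flags.append("never expires")
    elif cred.lifetime > MAX_LIFETIME:
        flags.append("long-lived")
    if cred.stored_at_rest:
        flags.append("stored at rest")
    if cred.scope == "*":
        flags.append("unscoped")
    return flags


def audit(inventory: List[Credential]) -> Dict[str, List[str]]:
    """Map credential name to its flags, omitting credentials with none."""
    report = {}
    for cred in inventory:
        flags = risk_flags(cred)
        if flags:
            report[cred.name] = flags
    return report


# Hypothetical inventory for a small pipeline.
INVENTORY = [
    Credential("AWS_ACCESS_KEY_ID", "cloud provider", "*", None, True),
    Credential("NPM_TOKEN", "package registry", "publish:my-package",
               timedelta(days=90), True),
    Credential("oidc-deploy-role", "cloud provider", "deploy:staging",
               timedelta(minutes=15), False),
]

if __name__ == "__main__":
    for name, flags in audit(INVENTORY).items():
        print(f"{name}: {', '.join(flags)}")
```

The point of the exercise is less the flags themselves than the inventory: one row per integration, which is why the list grows with the number of systems the pipeline touches.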

The Exfiltration Surface

Secrets stored in CI environments can be exfiltrated through channels that are difficult to monitor because they overlap with normal build behavior.

The problem compounds: CI runners are typically ephemeral containers or VMs with minimal monitoring. They are designed for performance and reproducibility, not observability. Security teams that invest heavily in production monitoring often have zero visibility into what happens inside a CI job. The runner spins up, runs attacker-controllable code with elevated privileges, and tears down. If the attacker exfiltrates secrets during that window, the evidence is often gone with the runner.

What Actually Reduces Risk

Having worked through several CI/CD-related incidents, I have become skeptical of solutions that add controls without changing the fundamental architecture. You cannot make a system secure by adding monitoring to a fundamentally over-privileged design. The leverage is in reducing the privilege, not in watching it more carefully.

Workload identity federation (OIDC). The most impactful architectural change available today is replacing stored secrets with federated identity. GitHub Actions, GitLab CI, and CircleCI all now support OIDC token issuance: the CI job receives a short-lived, audience-bound JWT that can be exchanged for cloud provider credentials via federation. The cloud provider validates the JWT's claims (repository, branch, workflow, environment) and issues short-lived credentials with permissions scoped to those claims.

This eliminates the stored secret entirely. There is no long-lived token in an environment variable. The credential exists only for roughly the duration of the job and is bound to the specific workload, so it is of little use to an attacker who obtains it later. If the Codecov breach had happened in a world where every customer used OIDC federation instead of stored AWS keys, the exfiltrated environment variables would have contained, at most, temporary credentials that expired shortly after the job ended, not static keys that stayed valid until someone noticed and rotated them.

OIDC federation is not a panacea: it requires the cloud provider to support it, the claims in the JWT to be granular enough for meaningful policy decisions, and the pipeline to be architected so that each job requests only the permissions it needs. But it eliminates the largest single category of CI/CD secret sprawl: static cloud credentials.
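The claim-matching step on the cloud provider's side can be sketched roughly as follows. This is a simplified illustration, not any provider's implementation: it assumes the JWT signature has already been verified against the issuer's published keys and the payload decoded into a dict, and it treats `aud` as a single string. The issuer and `sub` format follow GitHub Actions' documented conventions; the repository name and the `TRUST_POLICY` structure are hypothetical.

```python
import time
from typing import Any, Dict, Optional

# Hypothetical trust policy: every listed claim must match exactly.
TRUST_POLICY = {
    "iss": "https://token.actions.githubusercontent.com",
    "aud": "sts.amazonaws.com",
    "sub": "repo:example-org/example-app:environment:production",
}


def claims_allowed(
    claims: Dict[str, Any],
    policy: Dict[str, str] = TRUST_POLICY,
    now: Optional[float] = None,
) -> bool:
    """Decide whether already-verified JWT claims satisfy the trust policy.

    Signature verification is deliberately out of scope for this sketch.
    """
    now = time.time() if now is None else now

    # Reject tokens with a missing, malformed, or past expiry.
    exp = claims.get("exp")
    if not isinstance(exp, (int, float)) or exp <= now:
        return False

    # Every claim named in the policy must be present and equal.
    return all(claims.get(key) == expected for key, expected in policy.items())


if __name__ == "__main__":
    token_claims = {
        "iss": "https://token.actions.githubusercontent.com",
        "aud": "sts.amazonaws.com",
        "sub": "repo:example-org/example-app:environment:production",
        "exp": time.time() + 300,
    }
    print(claims_allowed(token_claims))
    # Same repository, but a feature branch instead of the production environment.
    print(claims_allowed({**token_claims,
                          "sub": "repo:example-org/example-app:ref:refs/heads/feature"}))
```

Exact matching on `sub` is the conservative choice here. Wildcard subjects are where the granularity caveat above bites: a policy that accepts any ref in a repository grants every branch the same role.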

Job-level permission boundaries. Build, test, package, and deploy should not share a single identity. Each stage should have its own credential set with the minimum permissions required for that stage. A test job needs read access to the code and maybe a test database. It does not need write access to the production container registry or deploy permissions to the production cluster. If your CI platform does not support per-job identity scoping, that is a platform limitation worth migrating away from.
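One way to reason about per-stage boundaries is to write the intended permissions down and diff them against what each job actually requests. The sketch below does only that. The stage names mirror the paragraph above, but the permission strings and the `STAGE_PERMISSIONS` table are invented for illustration and do not correspond to any platform's permission model.

```python
from typing import Dict, Iterable, Set

# Hypothetical per-stage allowances; the permission names are made up.
STAGE_PERMISSIONS: Dict[str, Set[str]] = {
    "build": {"source:read", "deps:read"},
    "test": {"source:read", "testdb:readwrite"},
    "package": {"source:read", "registry:write"},
    "deploy": {"registry:read", "cluster:deploy"},
}


def excess_permissions(stage: str, requested: Iterable[str]) -> Set[str]:
    """Return the requested permissions that the stage is not entitled to.

    An unknown stage is entitled to nothing, so everything it requests
    is reported as excess.
    """
    allowed = STAGE_PERMISSIONS.get(stage, set())
    return set(requested) - allowed


if __name__ == "__main__":
    # A test job that also asks for registry write access.
    print(excess_permissions("test", ["source:read", "registry:write"]))
```

Defaulting unknown stages to an empty allowance keeps the check fail-closed: a newly added job gets nothing until someone decides what it needs.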

Workflow-as-code review. Changes to CI configuration files (.github/workflows/*.yml, .gitlab-ci.yml, Jenkinsfile) should be treated with the same security scrutiny as changes to authentication code. A modified workflow can exfiltrate every secret the pipeline has access to. In organizations that require code review for application changes but allow CI config changes to merge with a single approval (or no approval), the workflow file is the path of least resistance for an insider or compromised account.
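A first step toward stricter review is simply knowing when a change touches pipeline configuration. The sketch below flags such paths in a list of changed files so they can be routed to additional reviewers. The patterns come from the file names mentioned above, plus a `.yaml` variant that I am adding as an assumption; how the changed-file list is obtained and what happens to flagged changes are left out.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List

# Paths treated as CI configuration. The *.yaml variant is an addition
# for this sketch; adjust the list to the platforms actually in use.
CI_CONFIG_PATTERNS = (
    ".github/workflows/*.yml",
    ".github/workflows/*.yaml",
    ".gitlab-ci.yml",
    "Jenkinsfile",
)


def ci_config_changes(changed_paths: Iterable[str]) -> List[str]:
    """Return the changed paths that match a CI configuration pattern.

    Paths are expected to be repository-relative with forward slashes.
    fnmatchcase is used so matching is case-sensitive on every OS.
    """
    return [
        path
        for path in changed_paths
        if any(fnmatchcase(path, pattern) for pattern in CI_CONFIG_PATTERNS)
    ]


if __name__ == "__main__":
    changed = ["src/app.py", ".github/workflows/deploy.yml", "Jenkinsfile"]
    flagged = ci_config_changes(changed)
    if flagged:
        print("Needs security review:", ", ".join(flagged))
```

A check like this only helps if it runs somewhere the change itself cannot disable, for example as a required check defined outside the repository under review.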

Egress controls. CI runners should have explicit network egress policies. A build job that needs to pull from npm and push to ECR does not need to be able to reach arbitrary internet hosts. Egress filtering in CI is rare because it interferes with build flexibility: developers want to curl things, pip install things, fetch things. But uncontrolled egress is exactly how exfiltration happens. The compromise is to maintain allowlists of approved registries and block everything else, with an exception process for legitimate new destinations.
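The allowlist decision itself is simple, as the sketch below suggests. It shows only the matching logic; real enforcement belongs in a proxy or firewall outside the job's control, not in code the job can modify. The hosts in `EGRESS_ALLOWLIST` are example registry hostnames chosen for illustration, and the exact-match rule is a design assumption of this sketch.

```python
from typing import FrozenSet
from urllib.parse import urlsplit

# Example allowlist for illustration; a real one would be maintained
# alongside the exception process described above.
EGRESS_ALLOWLIST: FrozenSet[str] = frozenset({
    "registry.npmjs.org",
    "pypi.org",
    "files.pythonhosted.org",
})


def egress_allowed(url: str, allowlist: FrozenSet[str] = EGRESS_ALLOWLIST) -> bool:
    """Allow a request only if its hostname exactly matches an allowlisted host.

    Exact matching (no suffix or substring checks) means a lookalike such as
    registry.npmjs.org.evil.example is rejected.
    """
    host = urlsplit(url).hostname  # lowercased by urlsplit; None if absent
    return host is not None and host in allowlist


if __name__ == "__main__":
    for candidate in (
        "https://registry.npmjs.org/left-pad",
        "https://attacker.example/collect?d=secret",
    ):
        verdict = "allow" if egress_allowed(candidate) else "block"
        print(verdict, candidate)
```

Blocking by default is what makes the exception process meaningful: a new destination has to be requested and approved rather than discovered after the fact.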

An Honest Assessment of Residual Risk

Even with OIDC federation, per-job scoping, workflow review, and egress controls, CI/CD remains a high-risk surface. The fundamental reason is that build pipelines execute attacker-controllable code. Every npm install, every pip install, every docker build is an execution of third-party code inside your trust boundary. Supply chain attacks that modify dependencies (like the ua-parser-js compromise in October 2021 or the event-stream attack in November 2018) run inside CI with whatever permissions the CI job has, regardless of how well you manage your own secrets.

The honest conclusion is not that CI/CD security is a solved problem with the right tooling. It is that CI/CD is inherently high-risk because it combines broad privilege with third-party code execution, and the best you can do is minimize the privilege, minimize the stored credentials, maximize the observability, and accept that this surface will remain a primary target for sophisticated attackers.

The organizations that survive CI/CD compromise with limited damage are not the ones with the best prevention controls. They are the ones who assumed compromise would happen and designed their privilege architecture so that a compromised build job cannot reach production data, cannot modify other pipelines, and cannot persist beyond the life of the ephemeral runner. The question is not "how do we keep attackers out of CI?" but "what is the worst thing that can happen when they get in?"